Tag Archives: Deep Learning

LLMs & Semantic Search Course by Andrew Ng, Cohere & Partners

Andrew Ng, a renowned name in the world of deep learning and AI, has joined forces with Cohere, a pioneer in natural language processing technologies. Alongside him are Jay Alammar, a well-known educator and visualizer of machine learning concepts, and Serrano Academy, an esteemed institution dedicated to AI research and education. Together, they have launched an insightful course titled “Large Language Models with Semantic Search.” This collaboration represents a fusion of expertise aimed at addressing the growing needs of semantic search in various applications. In an era where keyword search has dominated the search landscape, the need for more sophisticated, content-aware search capabilities is becoming increasingly evident. Content-rich platforms like …

Quiz: BERT & GPT Transformer Models Q&A

Are you fascinated by the world of natural language processing and the cutting-edge generative AI models that have revolutionized the way machines understand human language? Two such large language models (LLMs), BERT and GPT, stand as pillars in the field, each with its own architecture and capabilities. But how well do you know these models? In this quiz post, we will test your knowledge and understanding of these two groundbreaking technologies. Before you dive into the quiz, let’s start with an overview of BERT and GPT. BERT (Bidirectional Encoder Representations from Transformers): BERT is known for its bidirectional processing of text, allowing it to capture context from both sides of a word …

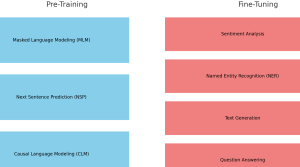

Pre-training vs Fine-tuning in LLM: Examples

Are you intrigued by the inner workings of large language models (LLMs) such as BERT and the GPT series? Ever wondered how these models manage to understand human language with such precision? What are the critical stages that transform them from simple neural networks into powerful tools capable of text prediction, sentiment analysis, and more? The answer lies in two vital phases: pre-training and fine-tuning. These stages not only make language models adaptable to various tasks but also bring them closer to understanding language the way humans do. In this blog, we’ll dive into the fascinating journey of pre-training and fine-tuning in LLMs, complete with real-world examples. Whether you are a …

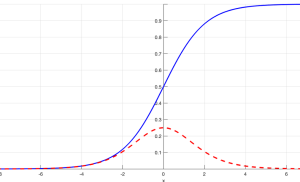

Vanishing Gradient Problem in Deep Learning: Examples

Ever found yourself wondering why your deep learning model (a deep neural network) simply refuses to learn? Or struggled to understand why it isn’t reaching the accuracy you expected? The culprit behind these issues might very well be the infamous vanishing gradient problem, a common hurdle in the field of deep learning. Understanding and mitigating the vanishing gradient problem is a must-have skill in any data scientist’s arsenal because of the profound impact it can have on the training and performance of deep neural networks. In this blog post, we will delve into the heart of this issue, learning the calculus behind neural networks and …
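To make the idea concrete, here is a minimal sketch (not taken from the original post) of why gradients can vanish in a deep stack of sigmoid layers. It assumes a simplified one-neuron-per-layer chain in which every layer has the same weight and pre-activation; the helper name `gradient_scale` and its parameters are hypothetical. The derivative of the sigmoid is at most 0.25, so the product of many such factors shrinks quickly with depth.

```python
import math


def sigmoid(x: float) -> float:
    return 1.0 / (1.0 + math.exp(-x))


def sigmoid_grad(x: float) -> float:
    # Derivative of the sigmoid: s * (1 - s), which peaks at 0.25 when x == 0.
    s = sigmoid(x)
    return s * (1.0 - s)


def gradient_scale(num_layers: int, pre_activation: float = 0.0, weight: float = 1.0) -> float:
    # Hypothetical helper: by the chain rule, the gradient reaching the first
    # layer is scaled by one factor of weight * sigmoid'(z) per layer.
    # Assumption: every layer shares the same weight and pre-activation.
    scale = 1.0
    for _ in range(num_layers):
        scale *= weight * sigmoid_grad(pre_activation)
    return scale


for depth in (1, 5, 10, 20):
    print(depth, gradient_scale(depth))
```

Even in the best case for the sigmoid (a pre-activation of 0), the scale factor after 10 layers is 0.25 raised to the 10th power, which is below one millionth. Real networks have many weights per layer, but the same multiplicative effect applies.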

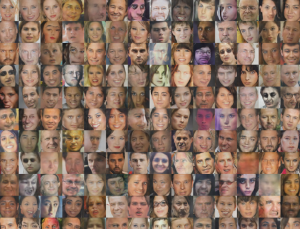

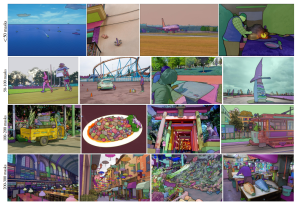

DCGAN Architecture Concepts, Real-world Examples

Have you ever wondered how AI can create lifelike images that are virtually indistinguishable from reality? Well, there is a neural network architecture, the Deep Convolutional Generative Adversarial Network (DCGAN), that has revolutionized image generation, from medical imaging to video game design. DCGAN’s ability to create high-resolution, visually stunning images has led to its wide use across numerous real-world applications. From enhancing data augmentation in medical imaging to inspiring artists with novel artworks, DCGAN’s impact transcends traditional machine learning boundaries. In this blog, we will delve into the fundamental concepts behind the DCGAN architecture, exploring its key components and the ingenious interplay between its generator and discriminator networks. Together, these components …

Generative Adversarial Network (GAN): Concepts, Examples

In this post, you will learn the concepts and examples of generative adversarial networks (GANs). The idea is to bring together key concepts and some interesting examples from across the industry to give a perspective on what problems can be solved using GANs. As data scientists or machine learning engineers, it is imperative that we understand GAN concepts well enough to apply them to real-world problems. This is where GAN examples prove helpful. What is a Generative Adversarial Network (GAN)? We will try to understand the concepts of GAN with the help of a real-life example. Imagine that …

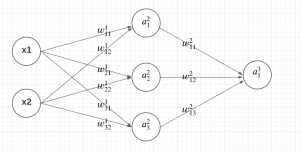

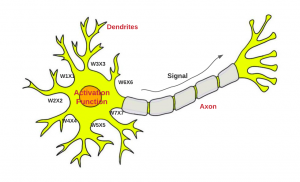

Backpropagation Algorithm in Neural Network: Examples

Artificial Neural Networks (ANN) are a powerful machine learning / deep learning technique inspired by the workings of the human brain. Neural networks comprise multiple interconnected nodes or neurons that process and transmit information. They are widely used in various fields such as finance, healthcare, and image processing. One of the most critical components of an ANN is the backpropagation algorithm. The backpropagation algorithm is a supervised learning technique used to adjust the weights of a neural network to minimize the difference between the predicted output and the actual output. In this post, you will learn about the concepts of the backpropagation algorithm used in training neural network models, along with Python …
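As a rough illustration of the weight adjustment described above, here is a minimal sketch of backpropagation on a tiny network with one input, one hidden neuron, and one output neuron. It is not the code from the original post: the function `train_step`, the sigmoid activations, the squared-error loss, the starting weights, and the learning rate are all assumptions chosen for illustration.

```python
import math


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def train_step(params, x, y, lr=0.5):
    # Hypothetical helper: one forward pass, one backward pass, one update.
    w1, b1, w2, b2 = params

    # Forward pass: input -> hidden neuron -> output neuron.
    h = sigmoid(w1 * x + b1)
    y_hat = sigmoid(w2 * h + b2)
    loss = 0.5 * (y_hat - y) ** 2

    # Backward pass: apply the chain rule from the output back to the input.
    delta_out = (y_hat - y) * y_hat * (1.0 - y_hat)   # dLoss / d(output pre-activation)
    delta_hidden = delta_out * w2 * h * (1.0 - h)     # dLoss / d(hidden pre-activation)

    # Gradient descent update for every weight and bias.
    new_params = (
        w1 - lr * delta_hidden * x,
        b1 - lr * delta_hidden,
        w2 - lr * delta_out * h,
        b2 - lr * delta_out,
    )
    return new_params, loss


params = (0.5, 0.0, 0.5, 0.0)  # assumed starting values
losses = []
for _ in range(500):
    params, loss = train_step(params, x=1.0, y=1.0)
    losses.append(loss)

print(losses[0], losses[-1])
```

The loss printed for the last step should be much smaller than the first, because each update moves the weights in the direction that reduces the difference between the predicted and actual output.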

Meta Unveils SAM and Massive SA-1B Dataset to Advance Computer Vision Research

Meta researchers yesterday unveiled a groundbreaking new model, the Segment Anything Model (SAM), alongside an immense dataset, the Segment Anything Dataset (SA-1B), which together promise to revolutionize the field of computer vision. SAM’s unique architecture and design make it efficient and effective, while the SA-1B dataset provides a powerful resource to fuel future research and applications. The Segment Anything Model is an innovative approach to promptable segmentation that combines an image encoder, a flexible prompt encoder, and a fast mask decoder. Its design allows for real-time, interactive prompting in a web browser on a CPU, opening up new possibilities for computer vision applications. One of the key challenges SAM …

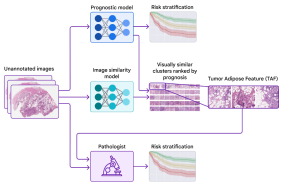

Machine Learning: Identify New Features for Disease Diagnosis

When diagnosing diseases that require X-rays and image-based scans, such as cancer, one of the most important steps is analyzing the images to determine the disease stage and to characterize the affected area. This information is central to understanding clinical prognosis and determining the most appropriate treatment. Developing machine learning (ML) / deep learning (DL) based solutions to assist with the image analysis represents a compelling research area with many potential applications. Traditional modeling techniques have shown that deep learning models can accurately identify and classify diseases in X-rays and image-based scans and can even predict patient prognosis using known features, such as the size or shape of the …

Transposed Convolution vs Convolution Layer: Examples

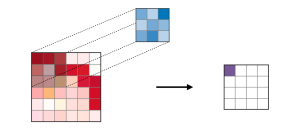

In the field of computer vision and deep learning, convolutional neural networks (CNNs) are widely used for image recognition tasks. A fundamental building block of CNNs is the convolutional layer, which extracts features from the input image by convolving it with a set of learnable filters. However, another type of layer called transposed convolution, also known as deconvolution, has gained popularity in recent years. In this blog post, we will compare and contrast these two types of layers, provide examples of their usage, and discuss their strengths and weaknesses. What is a convolutional layer? What is its purpose? A convolutional layer is a fundamental building block of a convolutional neural network (CNN). …
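To illustrate the contrast between the two layer types, here is a minimal one-dimensional sketch in plain Python. It is not taken from the original post, and the function names `conv1d` and `conv_transpose1d` are hypothetical. It assumes no padding and follows the deep learning convention in which "convolution" does not flip the kernel. A strided convolution shrinks its input, while a transposed convolution with the same stride and kernel size expands it again.

```python
def conv1d(signal, kernel, stride=1):
    # Slide the kernel over the signal; each output is one weighted sum.
    # Output length: (len(signal) - len(kernel)) // stride + 1
    k = len(kernel)
    out_len = (len(signal) - k) // stride + 1
    return [
        sum(signal[i * stride + j] * kernel[j] for j in range(k))
        for i in range(out_len)
    ]


def conv_transpose1d(signal, kernel, stride=1):
    # Each input value "stamps" a scaled copy of the kernel into the output.
    # Output length: (len(signal) - 1) * stride + len(kernel)
    k = len(kernel)
    out = [0] * ((len(signal) - 1) * stride + k)
    for i, value in enumerate(signal):
        for j in range(k):
            out[i * stride + j] += value * kernel[j]
    return out


features = conv1d([1, 2, 3, 4, 5], [1, 0, -1], stride=2)
upsampled = conv_transpose1d(features, [1, 1, 1], stride=2)
print(len(features), len(upsampled))  # prints 2 5
```

Note that the transposed convolution restores the original length, not the original values, which is one reason the name "deconvolution" can be misleading.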

CNN Basic Architecture for Classification & Segmentation

As data scientists, we are constantly exploring new techniques and algorithms to improve the accuracy and efficiency of our models. When it comes to image-related problems, convolutional neural networks (CNNs) are an essential tool in our arsenal. CNNs have proven to be highly effective for tasks such as image classification and segmentation, and have even been used in cutting-edge applications such as self-driving cars and medical imaging. Convolutional neural networks (CNNs) are deep neural networks that have the capability to classify and segment images. CNNs can be trained using supervised or unsupervised machine learning methods, depending on what you want them to do. CNN architectures for classification and segmentation include …

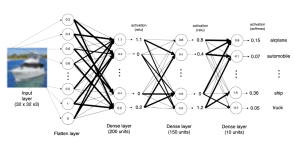

Keras: Multilayer Perceptron (MLP) Example

Artificial Neural Networks (ANN) have emerged as a powerful tool in machine learning, and the Multilayer Perceptron (MLP) is a popular type of ANN that is widely used in various domains such as image recognition, natural language processing, and predictive analytics. Keras is a high-level API that makes it easy to build and train neural networks, including MLPs. In this blog, we will dive into the world of MLPs and explore how to build and train an MLP model using Keras. We will build a simple MLP model using Keras and train it on a dataset. We will explain different aspects of training an MLP model using Keras. By the end of …

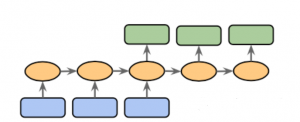

Sequence to Sequence Models: Types, Examples

Sequence to sequence (Seq2Seq) modeling is a powerful machine learning technique that has revolutionized the way we do natural language processing (NLP). It allows us to process input sequences of varying lengths and produce output sequences of varying lengths, making it particularly useful for tasks such as language translation, speech recognition, and chatbot development. Sequence to sequence modeling also provides a great foundation for creating text summarizers, question answering systems, sentiment analysis systems, and more. With its wide range of applications, learning about sequence to sequence modeling concepts is essential for anyone who wants to work in the field of natural language processing. This blog post will discuss types of …

Perceptron Explained using Python Example

In this post, you will learn about the concepts of the Perceptron with the help of a Python example. It is very important for data scientists to understand the concepts related to the Perceptron, as a good understanding lays the foundation for learning advanced neural network concepts, including deep neural networks (deep learning). What is a Perceptron? A Perceptron is a machine learning algorithm that mimics how a neuron in the brain works. It is also called a single-layer neural network consisting of a single neuron. The output of this neural network is decided by the outcome of just one activation function associated with the single neuron. In a perceptron, information propagates forward only, from input to output. Deep …
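The single-neuron description above can be sketched in a few lines of plain Python. This is an illustrative sketch and not the code from the original post: the function names `predict` and `train`, the step activation with a threshold at zero, the learning rate, and the choice of the logical AND function as training data are all assumptions.

```python
def predict(weights, bias, x):
    # Weighted sum of the inputs followed by a step activation function.
    activation = sum(w * xi for w, xi in zip(weights, x)) + bias
    return 1 if activation >= 0 else 0


def train(samples, labels, lr=1.0, epochs=20):
    # Classic perceptron learning rule: nudge the weights by the prediction error.
    weights = [0.0] * len(samples[0])
    bias = 0.0
    for _ in range(epochs):
        for x, y in zip(samples, labels):
            error = y - predict(weights, bias, x)
            weights = [w + lr * error * xi for w, xi in zip(weights, x)]
            bias += lr * error
    return weights, bias


# Assumed toy dataset: the logical AND of two binary inputs.
X = [(0, 0), (0, 1), (1, 0), (1, 1)]
y = [0, 0, 0, 1]

weights, bias = train(X, y)
predictions = [predict(weights, bias, x) for x in X]
print(predictions)  # prints [0, 0, 0, 1]
```

AND is linearly separable, so the single neuron can learn it. A function such as XOR is not, which is one motivation for moving on to multilayer networks.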

85+ Free Online Books, Courses – Machine Learning & Data Science

This post presents a comprehensive list of 85+ free books/ebooks and courses on machine learning, deep learning, data science, optimization, etc., which are available online for self-paced learning. This should be very helpful for data scientists starting to learn or gain expertise in the field of machine learning / deep learning. Please feel free to comment/suggest if I missed mentioning one or more important books that you like and would like to share. Also, sorry for the typos. Following are the key areas under which the books are categorized: Data science; Pattern Recognition & Machine Learning; Probability & Statistics; Neural Networks & Deep Learning; Optimization; Data mining; Mathematics. Here is my post …

Tensor Broadcasting Explained with Examples

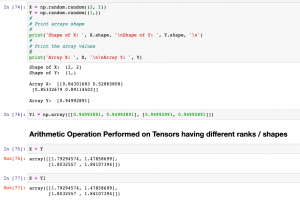

In this post, you will learn about the concepts of tensor broadcasting with the help of Python NumPy examples. Recall that a tensor is defined as a container of data (primarily numerical) and is the most fundamental data structure used in Keras and TensorFlow. You may want to check out a related article on Tensor – Tensor explained with Python Numpy examples. Tensor broadcasting is borrowed from NumPy broadcasting. Broadcasting is a technique used for performing arithmetic operations between NumPy arrays / tensors having different shapes. In this technique, the following is done: As a first step, expand one or both arrays by copying elements appropriately so that after this transformation, the two tensors have the …
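The shape-matching step can be sketched without any library. The snippet below is a pure-Python illustration of the broadcasting rule as documented for NumPy (compare dimensions from the right; two dimensions are compatible if they are equal or one of them is 1). It is not the code from the original post, and the helper names `broadcast_shape` and `add_row_vector` are hypothetical.

```python
from itertools import zip_longest


def broadcast_shape(shape_a, shape_b):
    # Compare dimensions from the trailing end; missing dimensions count as 1.
    result = []
    for a, b in zip_longest(reversed(shape_a), reversed(shape_b), fillvalue=1):
        if a == b or b == 1:
            result.append(a)
        elif a == 1:
            result.append(b)
        else:
            raise ValueError(f"shapes {shape_a} and {shape_b} cannot be broadcast")
    return tuple(reversed(result))


def add_row_vector(matrix, row):
    # Conceptually, the row is copied once per matrix row before adding.
    return [[m + r for m, r in zip(matrix_row, row)] for matrix_row in matrix]


print(broadcast_shape((3, 4), (4,)))    # prints (3, 4)
print(broadcast_shape((3, 1), (1, 4)))  # prints (3, 4)
print(add_row_vector([[1, 2], [3, 4]], [10, 20]))  # prints [[11, 22], [13, 24]]
```

In practice the library avoids physically copying the smaller array, but thinking of it as "stretching" the array to the common shape gives the right mental model.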
