When diagnosing diseases that require X-rays and image-based scans, such as cancer, one of the most important steps is analyzing the images to determine the disease stage and to characterize the affected area. This information is central to understanding clinical prognosis and to determining the most appropriate treatment. Developing machine learning (ML) and deep learning (DL) solutions to assist with image analysis is a compelling research area with many potential applications.

Traditional modeling techniques have shown that deep learning models can accurately identify and classify diseases in X-rays and image-based scans and can even predict patient prognosis using known features, such as the size or shape of the affected area. While these efforts focus on using machine learning models to detect or quantify known features, alternative approaches offer the potential to identify novel features. The discovery of new features could in turn further improve disease prognosis and treatment decisions for patients by extracting information that isn’t yet considered in current workflows.

In this blog post, we will discuss how deep learning techniques can be used to identify novel features for disease diagnosis based on X-rays and image-based scans. This method has been validated by Google in conjunction with teams at the Medical University of Graz in Austria and the University of Milano-Bicocca (UNIMIB) in Italy.

Training a Deep Learning Model to Predict Patient Outcomes

The deep learning approach is to train a model directly on image-based scans to predict patient outcomes, using only the images and the labeled outcome data. Recall that a deep learning model does not need to be told which features to use; it learns them from the data. This contrasts with the alternative of first training models to predict intermediate, human-annotated labels for known pathologic features (human-identified features) and then using those features to predict outcomes.

One real-world example of the deep learning approach to predict patient outcome is in the field of radiology. Radiologists use image-based scans, such as X-rays, CT scans, and MRIs, to diagnose a wide range of diseases and conditions. These images contain a wealth of information that can be difficult for a human radiologist to interpret accurately and quickly. Deep learning models have shown promise in assisting radiologists in the diagnosis and prediction of patient outcomes.

For example, a deep learning model can be trained to predict the likelihood of a lung cancer diagnosis based on a chest X-ray image. The model can learn to identify subtle patterns in the image that are indicative of cancer and can use this information to make predictions about the patient’s outcome. The model doesn’t need to be told what features to look for in the image; it learns them during training by repeatedly adjusting its parameters to reduce the error between its predictions and the labeled outcomes.
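To make the idea of learning from images and outcome labels alone more concrete, here is a minimal sketch. It is not a deep network and not the method used in the research: it trains a plain logistic regression with gradient descent on synthetic 4x4 "scans", where a bright top-left block stands in for a disease pattern. The data, the function names (`make_image`, `train`, `predict`), and the hyperparameters are all assumptions made up for illustration. The point is only that the model is never told which pixels matter, yet the largest learned weights end up on the pattern pixels.

```python
import math
import random

def make_image(rng, has_pattern):
    """Flattened 4x4 synthetic 'scan'; the pattern brightens the top-left 2x2 block."""
    img = [rng.random() * 0.5 for _ in range(16)]
    if has_pattern:
        for idx in (0, 1, 4, 5):  # pixel indices of the top-left 2x2 block
            img[idx] += 0.5
    return img

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def predict(weights, bias, img):
    """Predicted probability of the positive outcome for one image."""
    return sigmoid(sum(w * x for w, x in zip(weights, img)) + bias)

def train(images, labels, epochs=300, lr=0.5):
    """Full-batch gradient descent on the logistic loss, using raw pixels only."""
    n, n_pixels = len(images), len(images[0])
    weights, bias = [0.0] * n_pixels, 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * n_pixels, 0.0
        for img, y in zip(images, labels):
            err = predict(weights, bias, img) - y  # prediction error for this image
            for i, x in enumerate(img):
                grad_w[i] += err * x
            grad_b += err
        weights = [w - lr * g / n for w, g in zip(weights, grad_w)]
        bias -= lr * grad_b / n
    return weights, bias

rng = random.Random(0)
labels = [i % 2 for i in range(200)]  # synthetic outcome labels
images = [make_image(rng, bool(y)) for y in labels]

weights, bias = train(images, labels)
accuracy = sum(
    (predict(weights, bias, img) > 0.5) == bool(y) for img, y in zip(images, labels)
) / len(images)
# Pixels with the largest learned weights: a crude view of what the model relies on.
top_pixels = sorted(range(16), key=lambda i: weights[i], reverse=True)[:4]

print("training accuracy:", accuracy)
print("most influential pixels:", sorted(top_pixels))
```

A real prognostic model would replace the logistic regression with a convolutional network and would be evaluated on held-out patients rather than on its own training data.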

Interpreting the Model-Learned Features to Identify New Features

In the previous section, we trained a deep learning model (call it the prognostic model) to predict the diagnosis of a disease using image scans. The next task is to identify the new features the model relies on for its predictions. Once these features are understood, practitioners can use them in day-to-day disease diagnosis.

To achieve this, we can employ a second model, a clustering model, which is trained to group cropped patches of the large image scans by visual similarity. These clusters of patches can then be passed through the previously trained prognostic model, which predicts the average risk score for each cluster. A cluster that has both a markedly high average risk score and a distinct visual appearance points to a candidate new feature. In the research done by Google, a feature identified this way was found to be highly prognostic. Such features can then be used by healthcare practitioners in future diagnosis.

For example, suppose we trained a deep learning model to predict whether a given image of a plant shows signs of a particular disease. To identify new features used by the model for prediction, we employed a second model to cluster cropped patches of the plant images. We then used the first model to calculate the average risk score for each cluster. One cluster had a significantly high average risk score and distinct visual appearance, leading us to identify a new feature.

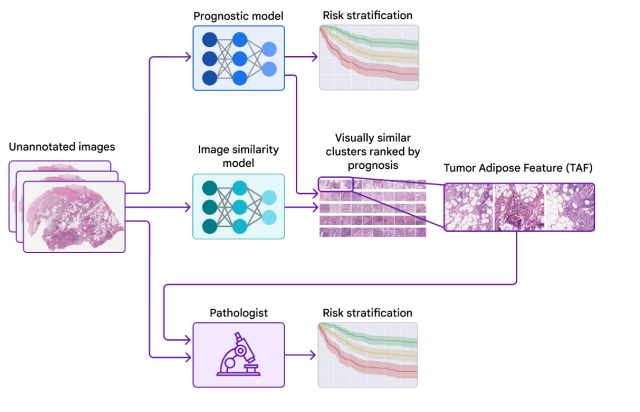

The picture above, taken from the Google blog, shows the workflow, which involves two different models.

The workflow in the image can be summarized as follows:

- The prognostic model, a deep learning model, is trained on unannotated image scans to predict the patient outcome, that is, whether a patient is suffering from a disease.

- Cropped patches from the image scans are also passed through the clustering model to find clusters of similar patches.

- Each cluster of cropped patches is passed through the prognostic model, which predicts a risk score in terms of patient outcome.

- The cluster with the highest risk score becomes the new feature, which practitioners can use in future disease diagnosis.
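The steps above can be sketched in a few lines of code. This is a toy illustration under stated assumptions, not the pipeline from the research: the patches are represented by made-up 2-D embeddings, clustering is a small hand-written k-means, and `risk_score` is a hypothetical stand-in for the trained prognostic model. Only the overall shape (cluster the patches, average the risk per cluster, pick the highest) follows the description.

```python
import random

def squared_distance(p, q):
    return sum((a - b) ** 2 for a, b in zip(p, q))

def kmeans(points, k, iterations=10):
    """Tiny k-means; farthest-first initialisation keeps the toy example deterministic."""
    centers = [points[0]]
    while len(centers) < k:
        centers.append(
            max(points, key=lambda p: min(squared_distance(p, c) for c in centers))
        )
    assignments = []
    for _ in range(iterations):
        # Assign every patch to its nearest cluster centre.
        assignments = [
            min(range(k), key=lambda c: squared_distance(p, centers[c])) for p in points
        ]
        # Move each centre to the mean of its assigned patches.
        for c in range(k):
            members = [p for p, a in zip(points, assignments) if a == c]
            if members:
                centers[c] = tuple(sum(col) / len(members) for col in zip(*members))
    return assignments

def risk_score(embedding):
    """Hypothetical stand-in for the prognostic model: risk rises with the first coordinate."""
    return min(1.0, max(0.0, embedding[0] / 10.0))

# Synthetic patch embeddings: three visually distinct groups of 30 patches each.
rng = random.Random(1)
blob_centers = [(0.0, 0.0), (5.0, 5.0), (10.0, 0.0)]
patches = [
    (cx + rng.uniform(-0.5, 0.5), cy + rng.uniform(-0.5, 0.5))
    for cx, cy in blob_centers
    for _ in range(30)
]

assignments = kmeans(patches, k=3)

# Average the predicted risk over the patches in each cluster.
cluster_risk = {}
for c in set(assignments):
    scores = [risk_score(p) for p, a in zip(patches, assignments) if a == c]
    cluster_risk[c] = sum(scores) / len(scores)

# The cluster with the highest average risk is the candidate new feature.
top_cluster = max(cluster_risk, key=cluster_risk.get)
top_patches = [p for p, a in zip(patches, assignments) if a == top_cluster]

print("average risk per cluster:", cluster_risk)
print("candidate feature cluster:", top_cluster, "with", len(top_patches), "patches")
```

In practice the embeddings would come from an image-similarity model and the candidate cluster would still need visual review by experts, since a high average risk score alone does not make a cluster an interpretable feature.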

Validating that the Model-Learned Features can be used by Healthcare Practitioners

The Google team collaborated with healthcare practitioners to investigate whether they could learn and use the feature identified by the model while maintaining demonstrable prognostic value. Using example images from the previous publication to learn and understand this feature of interest, the practitioners developed scoring guidelines for the feature. The study showed that practitioners could reproducibly identify the ML-derived feature and that their scoring for the feature provided statistically significant prognostic value on an independent retrospective dataset. This is the first demonstration of healthcare practitioners learning to identify and score a specific disease feature originally identified by an ML-based approach.
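The post does not say which statistics the study used, so the following is only a sketch of how reproducibility and prognostic value are commonly quantified. It assumes Cohen's kappa for agreement between two practitioners and Harrell's concordance index for how well the scores rank patients by survival. All scores, follow-up times, and event flags below are invented for illustration.

```python
from collections import Counter

def cohens_kappa(rater_a, rater_b):
    """Agreement between two raters, corrected for agreement expected by chance."""
    n = len(rater_a)
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    expected = sum(counts_a[label] * counts_b[label] for label in counts_a) / (n * n)
    return (observed - expected) / (1 - expected)

def concordance_index(scores, times, events):
    """Harrell's C: share of comparable pairs where the higher score had the earlier event."""
    concordant, comparable = 0.0, 0
    for i in range(len(scores)):
        for j in range(len(scores)):
            # A pair is comparable if patient i had the event before patient j's time.
            if events[i] and times[i] < times[j]:
                comparable += 1
                if scores[i] > scores[j]:
                    concordant += 1.0
                elif scores[i] == scores[j]:
                    concordant += 0.5  # ties count as half
    return concordant / comparable

# Invented example: two practitioners score the feature as 0 (low), 1, or 2 (high).
rater_a = [0, 1, 2, 2, 1, 0, 2, 1, 0, 2]
rater_b = [0, 1, 2, 1, 1, 0, 2, 1, 0, 2]

# Invented follow-up data: months until event or censoring, and whether the event occurred.
months = [60, 40, 12, 20, 36, 55, 10, 48, 58, 15]
event_observed = [0, 1, 1, 1, 1, 0, 1, 0, 0, 1]

kappa = cohens_kappa(rater_a, rater_b)
c_index = concordance_index(rater_a, months, event_observed)

print("inter-rater agreement (kappa):", round(kappa, 3))
print("concordance index of rater A's scores:", round(c_index, 3))
```

A kappa near 1 indicates that practitioners score the feature consistently, and a concordance index well above 0.5 indicates that higher scores tend to go with worse outcomes. A full analysis would also test statistical significance and adjust for known clinical factors.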

Conclusion

The deep learning technique of using two models can identify novel features that healthcare practitioners can use for disease diagnosis with X-rays and image-based scans. The approach involves training a model to predict patient outcomes from the images without specifying the features to use, probing that model using explainability techniques, identifying a novel feature, and validating its association with patient prognosis. The identification of the novel feature demonstrates the potential for ML models to predict patient outcomes and for healthcare practitioners to learn new prognostic features from machine learning.

As we continue to develop machine learning tools for disease diagnosis, it’s important to consider the potential benefits of identifying novel features. These features could lead to more accurate diagnoses and prognoses, and ultimately better treatment decisions for patients. Healthcare practitioners should consider incorporating machine learning tools into their workflows to improve patient outcomes and advance the field of medicine.

However, it’s important to note that these tools should be used as an aid to, not a replacement for, healthcare practitioners’ expertise and judgement. The interpretation of ML results should always be made by a healthcare practitioner, taking into account the individual patient’s medical history and other relevant information.
