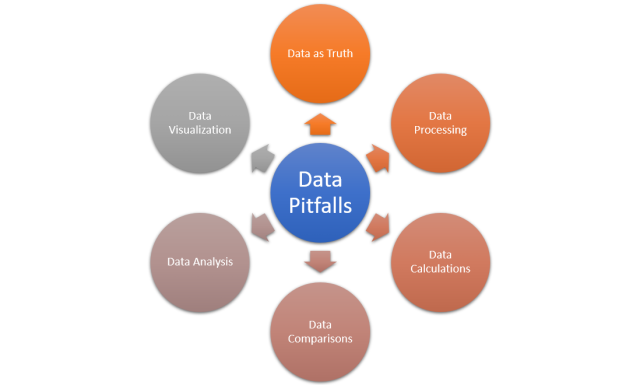

Working with data can be powerful, but there are some common pitfalls that data professionals, including data analysts and data scientists, should always be aware of when gathering, storing, and analyzing data. Good data is essential for any successful analytics project, and understanding the most common data pitfalls will help you avoid them. In this blog, we will take a look at what these mistakes are and how to avoid them. The picture below represents the most common data pitfalls to avoid.

Considering Data as the Truth

One major data pitfall is treating data as absolute truth (a perfect reflection of reality) without taking any other factors into consideration. This mindset invites confirmation bias: using data to confirm a previously held belief rather than to test whether that belief is actually false. The consequences range from misleading decisions to inaccurate outcomes.

A lack of context around the data can produce false assumptions or bias in decision-making processes. For example, if you rely solely on quantitative data to make decisions, you could overlook qualitative insights that provide a more holistic understanding of the situation. Similarly, using only current data points without considering historical trends or other external conditions can produce inaccurate conclusions and waste resources.

Data should never be viewed in isolation but rather contextualized with other pieces of evidence and factored into meaningful analyses. You need to ensure that all available information is considered before reaching a conclusion or deciding on a course of action. You must also take into account any biases or inconsistencies in the data before relying on it for decision making. Choose reliable data sources, and have them independently audited whenever feasible, to ensure data accuracy.

Data Processing

Data processing is a source of many potential pitfalls in data analysis. One of the primary issues is dirty data: information that is missing, duplicated, inaccurate, or inconsistent. This type of problem often occurs when different sources describe similar items differently, for example categories with mismatching levels or typos introduced during data entry. Furthermore, units of measurement and date formats that don't match from one dataset to another can make it difficult to accurately assess the data in question.
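As a minimal sketch of what cleaning such records might look like, the snippet below normalizes category labels, converts weights to a single unit, and parses two date formats. The sample rows, the typo mapping, and the helper names (`clean_category`, `weight_in_grams`, `parse_date`) are all hypothetical and only meant to illustrate the idea.

```python
from datetime import datetime

# Hypothetical raw records: labels, units, and date formats are invented.
raw_rows = [
    {"category": "Electronics", "weight": "2 kg", "date": "2023-01-15"},
    {"category": " electronics ", "weight": "2000 g", "date": "15/01/2023"},
    {"category": "Electroncs", "weight": "1.5 kg", "date": "2023-02-01"},
]

# Assumed lookup tables; a real project would maintain these deliberately.
CATEGORY_FIXES = {"electroncs": "electronics"}
GRAMS_PER_UNIT = {"kg": 1000.0, "g": 1.0}
DATE_FORMATS = ("%Y-%m-%d", "%d/%m/%Y")


def clean_category(value):
    """Trim whitespace, lowercase, and map known typos to one label."""
    label = value.strip().lower()
    return CATEGORY_FIXES.get(label, label)


def weight_in_grams(value):
    """Convert a string such as '2 kg' into grams."""
    amount, unit = value.split()
    return float(amount) * GRAMS_PER_UNIT[unit]


def parse_date(value):
    """Try each known date format and fail loudly if none matches."""
    for fmt in DATE_FORMATS:
        try:
            return datetime.strptime(value, fmt).date()
        except ValueError:
            continue
    raise ValueError(f"Unrecognized date format: {value!r}")


def clean_row(row):
    return {
        "category": clean_category(row["category"]),
        "weight_g": weight_in_grams(row["weight"]),
        "date": parse_date(row["date"]),
    }


clean_rows = [clean_row(row) for row in raw_rows]

# After cleaning, the first two rows turn out to be the same record.
print(clean_rows[0] == clean_rows[1])
```

Failing loudly on an unknown unit or date format is a deliberate choice here: a silent guess would put the dirty data straight back into the analysis.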

Another potential pitfall occurs when multiple datasets are brought together. Joining them can introduce null values or duplicated rows that undermine the accuracy of the analysis. Null values represent an absence of information, whereas duplicated rows skew results through double counting. Without careful attention, these problems may go unnoticed, leading to misrepresented results at best and total failure at worst.
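To make the double-counting problem concrete, here is a small sketch of a naive left join written in plain Python. The `orders` and `customers` tables and the `left_join` helper are hypothetical; the point is only to show how a duplicated row and a missing row on one side change the totals and how simple checks can catch both.

```python
from collections import Counter

# Hypothetical tables, invented for illustration.
orders = [
    {"order_id": 1, "customer_id": "A", "amount": 100},
    {"order_id": 2, "customer_id": "B", "amount": 50},
    {"order_id": 3, "customer_id": "C", "amount": 70},
]
customers = [
    {"customer_id": "A", "region": "North"},
    {"customer_id": "A", "region": "North"},  # accidental duplicate
    {"customer_id": "B", "region": "South"},
    # customer "C" is missing entirely
]


def left_join(left, right, key):
    """Naive left join: one output row per match, None-filled if unmatched."""
    right_only_columns = [col for col in right[0] if col != key]
    joined = []
    for left_row in left:
        matches = [row for row in right if row[key] == left_row[key]]
        if not matches:
            joined.append({**left_row, **{col: None for col in right_only_columns}})
        for match in matches:
            joined.append({**left_row, **match})
    return joined


joined = left_join(orders, customers, "customer_id")

true_total = sum(row["amount"] for row in orders)    # 220
joined_total = sum(row["amount"] for row in joined)  # 320: order 1 counted twice

# Sanity checks worth running after any join.
order_counts = Counter(row["order_id"] for row in joined)
duplicated_orders = [oid for oid, n in order_counts.items() if n > 1]
unmatched_orders = [row["order_id"] for row in joined if row["region"] is None]

print(true_total, joined_total, duplicated_orders, unmatched_orders)
```

Comparing row counts and totals before and after the join is usually the cheapest way to notice that something went wrong.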

Deriving Insights from Data / Data Calculations

Deriving insights from data requires careful consideration and thorough analysis, because mistakes at this stage lead to wrong conclusions that then feed into later decisions. One of the most common pitfalls is making mistakes while summing at various levels of aggregation. Aggregating data usually involves adding different values or components together, and those components may be recorded in different units. If you forget to convert the numbers into a single unit before adding them, the total will be wrong and so will any conclusion drawn from it.
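The sketch below shows how far off a total can be when units are mixed. The regional figures and the `MULTIPLIER` table are hypothetical, made up only to illustrate the conversion step.

```python
# Hypothetical regional revenue figures recorded in different units.
regional_revenue = [
    {"region": "North", "value": 2, "unit": "millions"},
    {"region": "South", "value": 850, "unit": "thousands"},
    {"region": "West", "value": 400_000, "unit": "units"},
]

# Assumed conversion factors to a single base unit.
MULTIPLIER = {"units": 1, "thousands": 1_000, "millions": 1_000_000}

# Wrong: adds the raw numbers as if they shared a unit.
naive_total = sum(row["value"] for row in regional_revenue)

# Right: convert every value to the base unit before summing.
converted_total = sum(
    row["value"] * MULTIPLIER[row["unit"]] for row in regional_revenue
)

print(naive_total)      # 400852
print(converted_total)  # 3250000
```

Looking up the unit in a dictionary means an unexpected unit raises a `KeyError` instead of being silently treated as the base unit.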

Another common pitfall is calculating rates or ratios incorrectly. Rates and ratios let us compare two sets of numbers in order to understand the relationship between them; however, incorrect calculations lead to misinterpretations and false conclusions about that relationship. For example, when comparing population growth rates, you must make sure that the time period is the same for both populations. Otherwise the rates are not comparable, because one growth figure covers years that the other does not.
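One way to put two growth figures on the same footing is to annualize them. The sketch below assumes compound growth and uses a hypothetical helper, `annualized_growth_rate`, with invented population numbers for two cities measured over different periods.

```python
def annualized_growth_rate(start_value, end_value, years):
    """Compound annual growth rate between two values `years` apart."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


# Hypothetical populations measured over different time spans.
city_a = {"start": 100_000, "end": 121_000, "years": 2}
city_b = {"start": 100_000, "end": 110_000, "years": 1}

# Misleading: total growth over mismatched periods (about 21% vs 10%).
total_a = city_a["end"] / city_a["start"] - 1
total_b = city_b["end"] / city_b["start"] - 1

# Comparable: both expressed per year (roughly 10% each).
annual_a = annualized_growth_rate(city_a["start"], city_a["end"], city_a["years"])
annual_b = annualized_growth_rate(city_b["start"], city_b["end"], city_b["years"])

print(f"total:  A={total_a:.1%}  B={total_b:.1%}")
print(f"annual: A={annual_a:.1%}  B={annual_b:.1%}")
```

The raw totals suggest city A grows twice as fast as city B, while the annualized rates show they grow at about the same pace.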

Comparing Data

Data comparison is an essential part of any statistical analysis, but it can also be fraught with potential pitfalls. When comparing data, one should be aware of certain data pitfalls that can lead to incorrect conclusions or a distorted understanding of the underlying patterns or relationships involved. Some common data pitfalls when comparing data include:

- Measuring central tendency: Measures of central tendency (e.g., mean, median, and mode) can help identify patterns or trends in data; however, they can also mislead if the distribution is highly skewed or non-normal. In a heavily skewed distribution, a few outliers can pull the mean far from the typical value, which makes it difficult to compare two datasets on the mean alone.

- Using representative data samples: Comparing two datasets requires having a representative sample from each dataset in order to draw meaningful conclusions about the differences between them. If the samples used for comparison are not representative of the population from which they were drawn, then any differences observed could be due to sampling bias rather than actual differences between the populations being compared.

- Choosing an incorrect method of comparison: Comparing datasets requires choosing an appropriate method, such as a correlation coefficient or regression analysis. An inappropriate method can lead to inaccurate conclusions about the relationship between two datasets and invalidate any findings based on those comparisons.

- Overlooking factors that affect comparability: It is important to consider factors other than just numerical value when comparing two datasets as there may be qualitative factors at play that are not captured by numerical values alone. For example, demographic factors such as age or gender could have influenced a particular result and thus should be taken into consideration when making comparisons between datasets.
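The central-tendency pitfall from the list above is easy to reproduce with Python's built-in `statistics` module. The two salary lists below are hypothetical and differ by a single outlier.

```python
from statistics import mean, median

# Hypothetical salaries (in thousands); team_b contains one extreme outlier.
team_a = [40, 42, 45, 47, 50]
team_b = [40, 42, 45, 47, 500]

# The means suggest the teams are very different...
print(mean(team_a), mean(team_b))      # 44.8 134.8

# ...while the medians show the typical member is paid the same.
print(median(team_a), median(team_b))  # 45 45
```

Reporting the median alongside the mean, or inspecting the distribution directly, makes this kind of distortion visible before the two groups are compared.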

Analyzing Data

Data pitfalls related to analyzing data can be extremely costly, both in terms of time and resources. The following are a few of them:

- The most common of these pitfalls is overfitting models to historical data, which means that a model has learned the quirks and noise of its training data rather than patterns that hold for the population at large. Such a model looks accurate on past data but makes inaccurate predictions on new data.

- Another common mistake is failing to recognize important signals in the data, such as seasonality or cyclical trends. Not taking these into account can cause errors when predicting future outcomes. Extrapolating or interpolating data incorrectly can also be problematic and lead to misinformed decisions, for example when a trend is projected beyond the observed range without verifying that the assumptions behind it are actually valid.

- Another common pitfall is relying on metrics that don't really measure what they purport to measure, or that aren't even relevant, which can lead to inaccurate findings about a dataset.
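The seasonality point above can be illustrated with a simple comparison. In the sketch below, the monthly sales figures are hypothetical and include a December peak; the `pct_change` helper is likewise just an illustration.

```python
# Hypothetical monthly sales keyed by (year, month), with a December peak.
sales = {
    (2022, 12): 180, (2023, 1): 90,
    (2023, 12): 198, (2024, 1): 99,
}


def pct_change(old, new):
    """Percentage change from old to new."""
    return (new - old) / old * 100


# Month over month: January looks like a collapse (about -50%).
month_over_month = pct_change(sales[(2023, 12)], sales[(2024, 1)])

# Year over year: the same January is up about 10% on the previous January.
year_over_year = pct_change(sales[(2023, 1)], sales[(2024, 1)])

print(round(month_over_month, 1), round(year_over_year, 1))
```

Comparing like with like, here January against January, removes the seasonal swing from the comparison so that the underlying trend becomes visible.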

Visualizing Data

Data visualization is an important aspect of data analysis, as it allows us to quickly and easily comprehend the information presented. Despite its many advantages, there are several pitfalls associated with visualizing data that can lead to inaccuracies or misinterpretation. One such pitfall is selecting an inappropriate chart type for the task at hand. The wrong chart type can make it difficult or even impossible to represent the data accurately, leading to misleading results. For example, using a bar chart to display continuous data, such as a measurement tracked over time, can obscure trends or insights that would be easy to see in a line graph.

Another potential pitfall when creating visuals is failing to consider how audience members would interpret them. Different people have different levels of understanding regarding charts and graphs, so it’s important for creators of visuals to think about how their audience would parse the information being presented before publishing anything publicly or sharing it with colleagues or clients. Without considering who will be viewing your work and tailoring your visuals accordingly, viewers could misinterpret key findings or draw inaccurate conclusions from what you’ve presented them with.

Conclusion

In today’s business world, data is everything. It can make or break a company. Having accurate information is critical to making sound decisions, but there are many pitfalls that can occur when working with data. This blog post has outlined some of the most common issues that arise and offered ways to avoid them. Next time you are working with data, remember not to treat it as the absolute truth, and to process it carefully, calculate cautiously, compare conservatively, and visualize thoughtfully. Doing so will help you avoid inaccurate conclusions that could lead your business down the wrong path. Do you have any other tips for ensuring accuracy when working with data? Let me know in the comments below or reach out to me directly if you would like to know more.
