Category Archives: Machine Learning

Covid-19 Machine Learning Use Cases

Covid-19 is caused by a type of coronavirus (SARS-CoV-2), which is related to the virus behind severe acute respiratory syndrome (SARS). The virus can be contracted through contact with saliva or mucus from an infected person. Symptoms include fever, cough, sore throat, headache, muscle aches, and fatigue. Several problems related to the Covid-19 pandemic can be addressed using machine learning / data science techniques. In this blog post, we will look into some of these Covid-19 use cases that can be solved using machine learning classification and clustering techniques. What Covid-19 data sets are publicly available? One of the datasets available for studying Covid-19 is GISAID data (https://www.gisaid.org/) that represents million …

Federated Analytics & Learning Explained with Examples

Federated learning has been proposed as an alternative to centralized machine learning because its client-server structure provides better privacy protection and scalability in real-world applications. It is growing rapidly with the wave of distributed machine learning and ever-increasing privacy concerns. With the increased computing and communication capabilities of edge and IoT devices, applying federated learning on heterogeneous devices to train machine learning models is becoming a trend. The federated analytics approach enables extracting insights from data residing on different systems without requiring the data to be brought to a central location. By leveraging these different data sources, federated analytics can provide powerful insights in relation to different areas such …
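The idea of extracting an insight without moving the raw data can be illustrated with a very small sketch. It assumes each site shares only a count and a sum, and a coordinator combines them into a global mean. The names `LocalSummary`, `summarize_locally`, and `federated_mean` are hypothetical and not taken from the post or from any particular library.

```python
from dataclasses import dataclass


@dataclass
class LocalSummary:
    """Aggregate statistics a site agrees to share (hypothetical schema)."""
    count: int
    total: float


def summarize_locally(values: list[float]) -> LocalSummary:
    # Runs on each site; the raw values never leave it.
    return LocalSummary(count=len(values), total=sum(values))


def federated_mean(summaries: list[LocalSummary]) -> float:
    # Runs on the coordinator, which only sees counts and sums.
    n = sum(s.count for s in summaries)
    if n == 0:
        raise ValueError("no data reported by any site")
    return sum(s.total for s in summaries) / n


# Two sites with made-up measurements.
site_a = [2.0, 4.0, 6.0]
site_b = [10.0]
summaries = [summarize_locally(site_a), summarize_locally(site_b)]
global_mean = federated_mean(summaries)
```

The result matches the mean over the pooled data, even though the coordinator never saw individual records. Sharing plain sums and counts is only the simplest form of this idea; real deployments typically add further protections such as secure aggregation or differential privacy.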

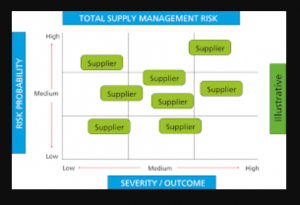

Supplier risk management & machine learning techniques

Supplier risk management (SRM) is a serious issue for procurement professionals. Suppliers can be unreliable, have poor quality products, or fail to meet specifications. In this blog post, we will discuss AI / machine learning algorithms and techniques that you can use to manage supplier risk and make your procurement process more efficient. What is supplier risk management? Supplier risk management (SRM), also known as supplier risk optimization (SRO), refers to policies and technology that enable organizations to manage risks related to suppliers. This can be done by analyzing data about past purchases from a supplier and predicting future risks related to purchases from that supplier. It’s crucial for procurement …

Key Deep Learning Techniques for Disease Diagnosis

The disease diagnosis process has been the same for decades: a physician analyzes symptoms, performs lab tests, and refers to medical diagnostic guidelines. However, recent advances in AI / machine learning / deep learning have made it possible for computers to diagnose or detect some diseases with human-level accuracy. This blog post will introduce some machine learning / deep learning techniques that data scientists can use for training models related to disease diagnosis. What are the different types of diseases that can be diagnosed using AI-based techniques? The following is a list of different types of diseases that can be diagnosed using machine learning or deep learning-based techniques: Cancer prognosis and …

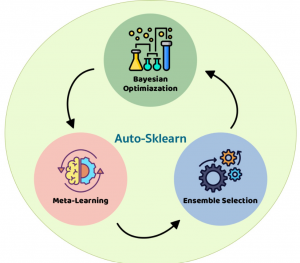

14 Python Automl Frameworks Data Scientists Can Use

In this post, you will learn about Automated Machine Learning (AutoML) frameworks for Python that you can use to train machine learning models. For data scientists, especially beginners, who are unfamiliar with AutoML: it is tooling designed to make the process of building machine learning models automated, user-friendly, and less time-consuming. The goal of AutoML is not just to make things easier for machine learning (ML) developers but also to democratize access to model development. What is AutoML? AutoML refers to automating some or all steps of building machine learning models, including selection and configuration of training data, tuning the performance metric(s), selecting/constructing features, training multiple models, evaluating …

Data Analytics – Different Career Options / Opportunities

Data analytics career paths span a wide range of options, from data scientist to data engineer. Data scientists are often interested in what they can learn and predict from the data, while data engineers are more focused on building the pipelines and infrastructure that make the data available for analysis. Whether you’re looking for a career as a data scientist, data analyst, ML engineer, or AI researcher, there’s something for everyone! In this blog post, we will look at the different types of jobs and careers available to those interested in data analytics and data science. What are some of the career paths in data analytics? Here are different career paths for those interested in a data analytics career: Data Scientists: …

Top 50 Interview Questions for Beginner Data Scientists

What interview questions should a beginner data scientist prepare for? This is an important question for many interviewees. If you are going for a data scientist interview and don’t know what questions you will be asked, this blog post has some of the common interview questions that will help you excel in your interview. These questions are well suited to beginners because they cover basic topics in data science and machine learning and how they work. We hope this list helps! What is the difference between AI, machine learning, and deep learning? Do you know how machine learning works? How is machine learning different from statistical modeling techniques like linear …

How to Create & Detect Deepfakes Using Deep Learning

Deepfakes are becoming a more common occurrence in today’s world. What is a deepfake and how can you create one using deep learning? This blog post will help data scientists learn techniques for creating and detecting deepfakes, so they can stay ahead of this technology. A deepfake is a video or audio clip that alters reality by changing the way something appears. For example, someone could place your face onto someone else’s body in a video to make it seem like you were there when you really weren’t. There are many ways to detect whether a photo has been manipulated with software such as Photoshop or GIMP. What is a deepfake? …

50+ Machine learning & Deep learning Youtube Courses

In this post, you get access to a curated list of 50+ YouTube courses on machine learning, deep learning, NLP, optimization, computer vision, statistical learning, etc. You may want to bookmark this page for quick reference and access to these courses. This page will be updated from time to time. Enjoy learning!

| Course title | Course type | URL |
|---|---|---|
| MIT 6.S192: Deep Learning for Art, Aesthetics, and Creativity | Deep learning | https://www.youtube.com/playlist?list=PLCpMvp7ftsnIbNwRnQJbDNRqO6qiN3EyH |
| AutoML – Automated Machine Learning | AutoML | https://ki-campus.org/courses/automl-luh2021 |
| Probabilistic Machine Learning | Machine learning | https://www.youtube.com/playlist?list=PL05umP7R6ij1tHaOFY96m5uX3J21a6yNd |
| Geometric Deep Learning | Geometric deep learning | https://www.youtube.com/playlist?list=PLn2-dEmQeTfQ8YVuHBOvAhUlnIPYxkeu3 |
| CS224W: Machine Learning with Graphs | Machine learning | https://www.youtube.com/playlist?list=PLoROMvodv4rPLKxIpqhjhPgdQy7imNkDn |
| MIT 6.S897 Machine Learning for Healthcare | Machine learning | https://www.youtube.com/playlist?list=PLUl4u3cNGP60B0PQXVQyGNdCyCTDU1Q5j |
| Deep Learning and Combinatorial Optimization | Deep … | |

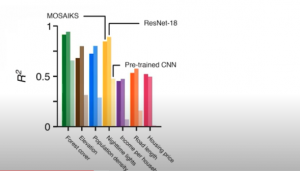

MOSAIKS for creating Climate Change Models

In this post, you will learn about MOSAIKS (Multi-Task Observation using Satellite Imagery & Kitchen Sinks), a framework that can be used to create machine learning linear regression models for climate change. Here is a list of a few prediction use cases that have already been tested with MOSAIKS and found to have high model performance: forest cover, elevation, population density, nighttime lights, income, road length, housing price, crop yields, and poverty mapping. What is MOSAIKS? MOSAIKS provides a set of features created from a satellite imagery dataset. We are talking about 90TB of data gathered per day from 700+ satellites. These features can be combined with machine learning algorithms to address global …

Machine Learning for predicting Ice Shelves Vulnerability

In this post, you will learn about the use of machine learning for predicting ice shelf vulnerability. Before getting into the details, let’s understand what ice shelf vulnerability is and how it relates to global warming / climate change. What are ice shelves? Ice shelves are permanent floating sheets of ice that connect to a landmass. Most of the world’s ice shelves hug the coast of Antarctica. Ice from enormous ice sheets slowly oozes into the sea through glaciers and ice streams. If the ocean is cold enough, that newly arrived ice doesn’t melt right away. Instead it may float on the surface and grow larger as glacial ice behind it continues to flow into the …

Free Online Books – Machine Learning with Python

This post lists free online books for machine learning with Python. These books cover topics related to machine learning, deep learning, and NLP. This post will be updated from time to time as I discover more books. Here are the titles of these books: Python Data Science Handbook; Building Machine Learning Systems with Python; Deep Learning with Python; Natural Language Processing with Python; Think Bayes; Scikit-learn tutorial – statistical learning for scientific data processing. Python Data Science Handbook: covers topics such as the following: introduction to NumPy; data manipulation with Pandas; visualization with Matplotlib; machine learning topics (Linear regression, SVM, random forest, principal component analysis, K-means clustering, Gaussian …

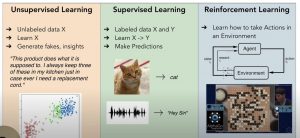

Different types of Machine Learning Problems

This post describes the most popular types of machine learning problems using several images. The following are the different types of machine learning problems: supervised learning, unsupervised learning, reinforcement learning, transfer learning, imitation learning, and meta-learning. In this post, the image shows supervised, unsupervised, and reinforcement learning. You may want to check the explanation in this YouTube lecture video. Unsupervised Learning Problems: In unsupervised learning problems, the learning algorithm learns about the structure of data from the given data set and generates fakes (synthetic samples) or insights. In the above diagram, you can see that what is given is the unlabeled dataset X. The unsupervised learning algorithm learns the structure of data …

Top 10+ Youtube AI / Machine Learning Courses

In this post, you get access to top free YouTube AI / machine learning courses. The courses are suitable for data scientists at all levels and cover the following areas: machine learning, deep learning, natural language processing (NLP), and reinforcement learning. Here are the details of the free machine learning / deep learning YouTube courses.

| S.No | Title | Description | Type |
|---|---|---|---|
| 1 | CS229: Machine Learning (Stanford) | Machine learning lectures by Andrew Ng; highly recommended if you are a beginner | Machine learning |
| 2 | Applied machine learning (Cornell Tech CS 5787) | Covers all of the most important ML algorithms and how to apply them in practice. Includes 3 full lectures … | |

Scikit-learn vs Tensorflow – When to use What?

In this post, you will learn about when to use Scikit-learn vs Tensorflow. For data scientists and machine learning enthusiasts, it is important to understand the difference so that they can use these libraries appropriately while working on different business use cases. When to use Scikit-learn? Scikit-learn is a great entry point for beginner data scientists. It provides efficient implementations of many machine learning algorithms. In addition, it is simple and easy to use. You can get started with Scikit-learn easily by using a Jupyter notebook. Scikit-learn can be used to solve different kinds of machine learning problems including some of the following: Classification (SVM, nearest neighbors, random …

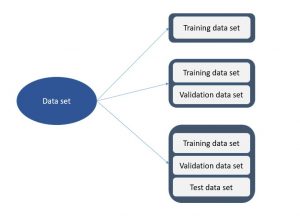

Machine Learning – Training, Validation & Test Data Set

In this post, you will learn about the concepts of training, validation, and test data sets used for training machine learning models. The post is most suitable for data science beginners or those who would like a clear understanding of the training, validation, and test data set concepts. The following topics will be covered: data split (training, validation, and test data sets), and different model performance based on different data splits. Data Splits – Training, Validation & Test Data Sets: You can split data into the following sets, and each data split configuration will yield machine learning models with different performance: Training data set: When you …
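As a rough illustration of a three-way split, here is a minimal sketch using only the Python standard library. The 60/20/20 proportions, the fixed seed, and the helper name `split_dataset` are assumptions chosen for the example, not values taken from the post.

```python
import random


def split_dataset(rows, train_frac=0.6, val_frac=0.2, seed=42):
    """Shuffle rows and cut them into train, validation, and test lists.

    The fractions are illustrative defaults; the test set receives
    whatever remains after the train and validation cuts.
    """
    if train_frac <= 0 or val_frac < 0 or train_frac + val_frac >= 1:
        raise ValueError("fractions must leave room for a test set")
    shuffled = list(rows)                  # copy so the caller's data is untouched
    random.Random(seed).shuffle(shuffled)  # seeded for reproducible splits
    n_train = round(len(shuffled) * train_frac)
    n_val = round(len(shuffled) * val_frac)
    train = shuffled[:n_train]
    val = shuffled[n_train:n_train + n_val]
    test = shuffled[n_train + n_val:]
    return train, val, test


rows = list(range(100))  # stand-in for 100 labeled examples
train, val, test = split_dataset(rows)
print(len(train), len(val), len(test))
```

A model would be fitted on `train`, tuned or compared using `val`, and evaluated once on `test`. Changing the fractions or the seed changes which examples land in each set, which is one reason different split configurations lead to different measured performance.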

I found it very helpful. However, the differences are not very clear to me.