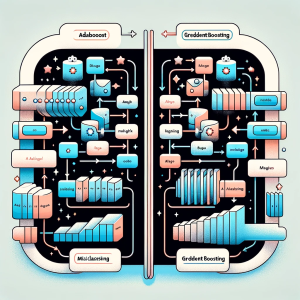

This post compares two widely used ensemble learning techniques: Gradient Boosting and AdaBoost. Both build a strong model out of many weak ones and often improve prediction accuracy, but they correct errors in different ways.

Gradient Boosting builds models sequentially, with each new model targeting the errors of the ensemble built so far. More precisely, each new model is fit to the negative gradient of a chosen loss function (for squared-error loss, this is simply the residuals), so training amounts to gradient descent on that loss. It works well on diverse datasets, particularly those with non-linear patterns. AdaBoost (Adaptive Boosting) also combines many simple models into a robust one, but its defining feature is adjusting the training data’s weights: incorrectly classified cases gain weight so that subsequent models prioritize them.
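
As a rough sketch, the two update rules can be written in standard textbook notation. The symbols are assumptions introduced here, not taken from the post: $F_m$ is the ensemble after round $m$, $h_m$ the weak learner added in round $m$, $L$ the loss, $\nu$ the learning rate, and $w_i$ the weight of training example $i$. For Gradient Boosting, $h_m$ is fit to the pseudo-residuals $r_{im}$:

$$
r_{im} = -\left[\frac{\partial L(y_i, F(x_i))}{\partial F(x_i)}\right]_{F = F_{m-1}},
\qquad
F_m(x) = F_{m-1}(x) + \nu \, h_m(x)
$$

For discrete AdaBoost with labels in $\{-1, +1\}$, one common formulation computes the weighted error $\epsilon_m$, the learner weight $\alpha_m$, and the new example weights as:

$$
\epsilon_m = \frac{\sum_i w_i \, \mathbb{1}[y_i \neq h_m(x_i)]}{\sum_i w_i},
\qquad
\alpha_m = \ln\frac{1 - \epsilon_m}{\epsilon_m},
\qquad
w_i \leftarrow w_i \exp\!\big(\alpha_m \, \mathbb{1}[y_i \neq h_m(x_i)]\big)
$$

The weights are then renormalized to sum to one. Other presentations use $\alpha_m = \tfrac{1}{2}\ln\frac{1-\epsilon_m}{\epsilon_m}$ with a slightly different exponent, which gives the same weights after normalization.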

The key difference between AdaBoost and Gradient Boosting is how they correct errors: Gradient Boosting fits each new model to a continuous error signal (the gradient of the loss), while AdaBoost raises the weights of misclassified training examples so that the next model focuses on them.
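
To make the contrast concrete, here is a minimal pure-Python sketch of one round of each error-correction step. The function names `adaboost_reweight` and `squared_loss_residuals` are hypothetical helpers written for this illustration, not part of any library, and the sketch assumes labels in {-1, +1} and a weighted error strictly between 0 and 1.

```python
import math


def adaboost_reweight(y_true, y_pred, weights):
    """One round of discrete AdaBoost reweighting (labels in {-1, +1}).

    Assumes the weighted error is strictly between 0 and 1.
    """
    pairs = list(zip(weights, y_true, y_pred))
    # Weighted error rate of the current weak learner.
    err = sum(w for w, t, p in pairs if t != p) / sum(weights)
    # Learner weight: larger when the weak learner is more accurate.
    alpha = math.log((1 - err) / err)
    # Misclassified points are scaled by exp(alpha); the rest are unchanged.
    new_w = [w * math.exp(alpha) if t != p else w for w, t, p in pairs]
    total = sum(new_w)
    # Renormalize so the weights sum to one again.
    return alpha, [w / total for w in new_w]


def squared_loss_residuals(y_true, current_pred):
    """Negative gradient of 0.5 * (y - F)^2 with respect to F: the residual."""
    return [t - f for t, f in zip(y_true, current_pred)]


# AdaBoost: the one misclassified example (index 1) gains weight.
alpha, new_weights = adaboost_reweight(
    y_true=[1, 1, -1, -1],
    y_pred=[1, -1, -1, -1],
    weights=[0.25, 0.25, 0.25, 0.25],
)
print(alpha, new_weights)

# Gradient Boosting: the next learner would be fit to these residuals.
residuals = squared_loss_residuals([3.0, 5.0, 8.0], [4.0, 4.0, 4.0])
print(residuals)
```

In the AdaBoost call, the single misclassified example ends up carrying half of the total weight after renormalization, which is what forces the next weak learner to pay attention to it. In the Gradient Boosting call, nothing is reweighted; the next learner simply gets a new regression target.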

Differences between Gradient Boosting & AdaBoost Algorithms

The following table summarizes the key differences between the AdaBoost and Gradient Boosting algorithms:

| Aspect | Gradient Boosting Algorithm | AdaBoost Algorithm |
|---|---|---|
| Definition | An ensemble machine learning technique that builds models sequentially, fitting each new model to the gradient of the loss to correct prior errors. | An ensemble method that combines weak learners into a strong one by reweighting training data. |
| When to Use | Best for data with complex patterns and non-linear relationships. Requires more computational resources. | Suitable for classification problems, especially binary ones. Less complex. |
| Python Implementation | Commonly implemented using scikit-learn (GradientBoostingClassifier or GradientBoostingRegressor). | Also implemented in scikit-learn (AdaBoostClassifier or AdaBoostRegressor). |
| R Implementation | Available through packages like gbm (Generalized Boosted Models) or xgboost (Extreme Gradient Boosting). | Can be implemented using the ada package, or the boosting function in the adabag package. |
| Advantages | Highly effective in predictive accuracy; works well on tabular data with mixed feature types. | Simple to implement, often less prone to overfitting on clean data, and effective with little parameter tuning. |
| Disadvantages | More prone to overfitting, requires careful tuning of parameters, computationally intensive. | Less effective with complex, non-linear data. Sensitive to noisy data and outliers. |
| Loss Function Adaptability | Can optimize any differentiable loss function, making it flexible for different types of problems. | Typically uses the exponential loss function, which may limit its adaptability compared to Gradient Boosting. |
| Handling Missing Values | Some implementations (e.g., XGBoost, LightGBM, scikit-learn's HistGradientBoostingClassifier) handle missing values natively; others require imputation. | Typically requires complete data or imputation. |
| Speed and Scalability | Typically slower to train: each round fits a deeper tree, and rounds must run one after another. | Typically faster per round, as weak learners are often simple; rounds are still sequential. |
| Model Complexity | Often results in more complex models, since each learner is usually a tree several levels deep. | Generates simpler models, as each learner is typically a simple model like a decision stump. |
| Sensitivity to Outliers | Can be sensitive to outliers, as each model builds off the errors of the previous one; robust losses such as Huber can reduce this. | Also sensitive to outliers, as misclassified points get more weight, but might be more robust with proper tuning. |
| Feature Importance Interpretation | Provides insights into feature importance, beneficial for understanding model behavior. | Also reports feature importances when the weak learners are trees, but decision stumps use one feature each, so the ranking is coarser. |
| Use in Regression Problems | Well-suited for both classification and regression problems. | Primarily used for classification; regression variants such as AdaBoost.R2 (used by AdaBoostRegressor) exist but are less common. |
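
As an illustration of the scikit-learn classes named in the table, the sketch below trains both classifiers on a synthetic dataset and collects their test accuracy and feature importances. The dataset, the split, and the hyperparameter values are assumptions chosen for illustration, not tuned or recommended settings, and the resulting scores say nothing general about which algorithm is better.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import AdaBoostClassifier, GradientBoostingClassifier
from sklearn.model_selection import train_test_split

# Synthetic binary classification data (illustrative only).
X, y = make_classification(
    n_samples=500, n_features=10, n_informative=5, random_state=0
)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, random_state=0
)

models = {
    # Depth-3 trees fit sequentially to the gradient of the loss.
    "gradient_boosting": GradientBoostingClassifier(
        n_estimators=100, learning_rate=0.1, max_depth=3, random_state=0
    ),
    # Default weak learner is a decision stump (a depth-1 tree).
    "adaboost": AdaBoostClassifier(n_estimators=100, random_state=0),
}

scores = {}
importances = {}
for name, model in models.items():
    model.fit(X_train, y_train)
    scores[name] = model.score(X_test, y_test)       # test accuracy
    importances[name] = model.feature_importances_   # one value per feature
    print(name, round(scores[name], 3))
```

Both estimators share the same `fit` / `score` interface, so swapping one for the other in an existing pipeline is usually a one-line change. Comparing them on your own data with cross-validation is a more reliable guide than any general rule in the table.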

For more detail on each algorithm, including Python examples, check out my blog posts on AdaBoost and Gradient Boosting.
