Have you ever wanted to make an informed decision, but all you have is a small sample that may not follow a normal distribution? In the realm of statistics, we have various non-parametric tools that enable us to extract valuable insights from such datasets. One of these handy tools is the Sign test, a beautifully simple method for hypothesis testing.

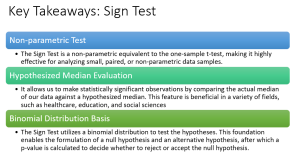

The Sign test is a non-parametric test, often seen as a cousin of the one-sample t-test, that allows us to infer information about a whole population based on a small sample, whether a single sample or paired measurements. It is particularly useful when dealing with dichotomous data, that is, data that can have only two possible outcomes. In this blog post, we’ll demystify the Sign test, explain how to formulate hypotheses, walk through a real-life example, and demonstrate it using Python code.

What is the Sign Test?

The Sign test is a non-parametric alternative to the one-sample t-test that doesn’t need normally distributed data. It’s used to check whether the median of the population from which a sample was drawn equals a hypothesized value. Let’s understand this with an example.

Suppose a teacher has implemented a new teaching methodology in the classroom and, after an examination, has a set of test scores. He believes this new method has improved the students’ scores such that the median test score should now be 85 out of 100. The set of scores is as follows:

scores = [82, 88, 91, 78, 87, 85, 90, 88, 86, 84, 89, 91, 87, 80, 88]

In the above scenario, the null hypothesis (H0) would be that the median score is 85, while the alternative hypothesis (Ha) would be that the median score is not 85.

If you conduct a Sign test on this data, you would first calculate the differences between the observed scores and the hypothesized median, and then count how many differences are positive and how many are negative.

Positive differences signify scores above the hypothesized median, and negative differences represent scores below the median.

Here, the scores with positive differences (scores higher than 85) are: [88, 91, 87, 90, 88, 86, 89, 91, 87, 88]. That gives us 10 positive differences.

The scores with negative differences (scores lower than 85) are: [82, 78, 84, 80]. That gives us 4 negative differences.

One score is exactly 85. Its difference is zero, so it carries no sign and is left out of the test.

Now, we use the sign test to check if our middle value (85) is a good guess for these scores. To do that, we look at which count is smaller: the positive differences or the negative differences. In this case, it’s the negative differences with a count of 4.

We use this count and the total count of non-zero differences (10 + 4 = 14) in a binomial formula to calculate the p-value. This p-value tells us how likely we would be to see a split at least this lopsided if our guessed median were correct. If the p-value is small (usually less than 0.05), we would say that our guess for the median is not a good one. If the p-value is large, we’d say the data are consistent with our guessed median.

If the null hypothesis is true, we expect roughly half the non-zero differences to be positive and half to be negative, so the number of positive differences follows a binomial distribution with p = 0.5. If the observed data deviate significantly from this expectation (according to the calculated p-value), we would reject the null hypothesis, indicating that the median score under the new teaching method is unlikely to be 85.
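To make the arithmetic concrete, here is a minimal sketch of the calculation on the scores above, using only Python’s standard library. The function name `sign_test` and its return format are our own choices for illustration and are not part of any library.

```python
from math import comb


def sign_test(values, hypothesized_median):
    """Two-sided exact sign test against a hypothesized median.

    Returns (n_pos, n_neg, p_value). Values equal to the hypothesized
    median (zero differences) are dropped before testing.
    """
    n_pos = sum(v > hypothesized_median for v in values)
    n_neg = sum(v < hypothesized_median for v in values)
    n = n_pos + n_neg        # number of non-zero differences
    k = min(n_pos, n_neg)    # the smaller count is the test statistic
    # P(X <= k) for X ~ Binomial(n, 0.5)
    tail = sum(comb(n, i) for i in range(k + 1)) / 2 ** n
    # Double the tail for a two-sided test and cap the result at 1
    p_value = min(1.0, 2 * tail)
    return n_pos, n_neg, p_value


scores = [82, 88, 91, 78, 87, 85, 90, 88, 86, 84, 89, 91, 87, 80, 88]
n_pos, n_neg, p_value = sign_test(scores, 85)
print(f"positive: {n_pos}, negative: {n_neg}, p-value: {p_value:.4f}")
```

For these scores the two-sided p-value comes out at roughly 0.18, well above 0.05, so we would fail to reject the null hypothesis that the median is 85.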

Sign Test Real-life Example

Suppose an agricultural researcher has developed a new organic fertilizer and wants to test whether the use of this fertilizer increases the height of a particular type of plant compared to not using it. The researcher selects 15 plants and, for each plant, measures the height growth over one month with the new fertilizer and the height growth over one month with no fertilizer. Here, we have a paired sample since the growths are recorded for the same plants under two different conditions.

The null hypothesis is that the median difference in plant growth with and without the fertilizer is zero, and the alternative hypothesis is that the median difference is not zero.

We subtract the growth without the fertilizer from the growth with the fertilizer for each plant, then note the sign of each difference (whether it’s positive or negative). If the new fertilizer has no effect, we would expect approximately an equal number of positive and negative differences.

We then count the number of positive and negative differences and use these counts to calculate the test statistic. If the sign test is significant (p-value < 0.05, for instance), you would reject the null hypothesis and conclude that the new fertilizer does affect plant growth. If not, you would fail to reject the null hypothesis, and it would remain plausible that the fertilizer does not have an effect on plant growth.
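The counting step can be written compactly. As a sketch in our own notation (these symbols do not appear elsewhere in this post), let $n_+$ and $n_-$ be the numbers of positive and negative differences, and let $n = n_+ + n_-$ be the number of non-zero differences. Under the null hypothesis each non-zero difference is positive with probability 0.5, so $n_+ \sim \mathrm{Binomial}(n, 0.5)$, and the exact two-sided p-value is:

$$
p = \min\left(1,\; 2 \sum_{i=0}^{k} \binom{n}{i} \left(\tfrac{1}{2}\right)^{n}\right), \qquad k = \min(n_+, n_-)
$$

The factor of 2 accounts for both tails, and the cap at 1 handles the case where the two counts are nearly equal.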

Sign Test Python Code Example

Let’s assume we have collected paired data from 20 individuals, represented as before and after weight measurements for a diet program.

Our null hypothesis, H0, is that the median of the differences between before and after weights is zero. The alternative hypothesis, H1, is that the median difference is not zero, implying that the diet program has an effect on weight.

Here is the hypothetical data:

| Individual | Before (lb) | After (lb) | Difference, After - Before (lb) | Sign |
|---|---|---|---|---|
| 1 | 200 | 195 | -5 | - |
| 2 | 220 | 210 | -10 | - |
| 3 | 210 | 215 | 5 | + |
| 4 | 180 | 175 | -5 | - |
| 5 | 170 | 165 | -5 | - |
| 6 | 220 | 225 | 5 | + |
| 7 | 210 | 210 | 0 | 0 |
| 8 | 190 | 195 | 5 | + |
| 9 | 200 | 200 | 0 | 0 |
| 10 | 180 | 180 | 0 | 0 |
| 11 | 190 | 185 | -5 | - |
| 12 | 220 | 215 | -5 | - |
| 13 | 200 | 205 | 5 | + |
| 14 | 210 | 210 | 0 | 0 |
| 15 | 220 | 225 | 5 | + |
| 16 | 200 | 195 | -5 | - |
| 17 | 180 | 175 | -5 | - |
| 18 | 190 | 195 | 5 | + |
| 19 | 210 | 210 | 0 | 0 |
| 20 | 200 | 195 | -5 | - |

From this, we see that there are 6 positive signs (+), 9 negative signs (-), and 5 zeros. Zeros are generally discarded in a sign test, so our total N for the test is 15.

For a two-tailed sign test, our null hypothesis posits that positive and negative signs are equally likely, i.e., the median difference is zero.

The test statistic follows a binomial distribution with parameters N = 15 and p = 0.5 (under the null hypothesis of no difference). We want to know the probability of observing 6 or fewer positive differences assuming the null hypothesis, i.e., P(X <= 6) where X ~ B(15, 0.5). Because the test is two-tailed, this tail probability is doubled to obtain the p-value.
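As a hand check, here is the arithmetic using the counts read row by row from the table (6 positive, 9 negative, 5 zeros, so N = 15). This is an illustrative calculation only.

$$
P(X \le 6) = \frac{1}{2^{15}} \sum_{i=0}^{6} \binom{15}{i} = \frac{1 + 15 + 105 + 455 + 1365 + 3003 + 5005}{32768} = \frac{9949}{32768} \approx 0.3036
$$

$$
p = 2 \cdot P(X \le 6) \approx 0.6072
$$

A p-value this far above 0.05 means a 6-to-9 split is entirely unremarkable when positive and negative signs are equally likely.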

The following Python code can be used to calculate the p-value and decide whether or not to reject the null hypothesis.

    import numpy as np
    from scipy import stats

    # Define before and after weights (lb) for the 20 individuals
    before_weights = np.array([200, 220, 210, 180, 170, 220, 210, 190, 200, 180, 190, 220, 200, 210, 220, 200, 180, 190, 210, 200])
    after_weights = np.array([195, 210, 215, 175, 165, 225, 210, 195, 200, 180, 185, 215, 205, 210, 225, 195, 175, 195, 210, 195])

    # Calculate differences (negative = weight loss)
    differences = after_weights - before_weights

    # Remove zeros (no change); they carry no sign information
    differences = differences[differences != 0]

    # Count the positive (weight gain) and negative (weight loss) differences
    n_pos = int(np.sum(differences > 0))
    n_neg = int(np.sum(differences < 0))

    # Use the smaller of n_pos and n_neg as the test statistic (for a two-tailed test)
    k = min(n_pos, n_neg)

    # Calculate the two-tailed p-value using the exact binomial test.
    # stats.binomtest replaces stats.binom_test, which was removed in SciPy 1.12.
    result = stats.binomtest(k, n=n_pos + n_neg, p=0.5, alternative='two-sided')
    p_value = result.pvalue

    print(f'p-value: {p_value}')

    # Interpret the p-value
    if p_value < 0.05:
        print("We reject the null hypothesis: the diet program appears to have a significant effect on weight.")
    else:
        print("We fail to reject the null hypothesis: the diet program does not appear to have a significant effect on weight.")

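The above code works by first calculating the weight difference for each individual, then conducting the sign test on the non-zero differences. For this data (6 positive and 9 negative differences), the two-tailed p-value is about 0.61, so we fail to reject the null hypothesis.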

Conclusion

The Sign test provides a simple and flexible tool for researchers and data analysts dealing with small or paired samples that do not meet the assumptions of parametric tests. Its use as a non-parametric alternative to the one-sample t-test means that we can still draw valid statistical conclusions about our data even when it doesn’t follow a specific distribution. The trade-off is that it uses only the sign of each difference, not its size, so it can need more data than a t-test to detect the same effect. From educators tracking student performance to healthcare professionals monitoring patient recovery, the Sign test allows us to evaluate the median of our data against a hypothesized median, making it a useful part of our statistical toolkit. So, whether you’re a seasoned data scientist or a curious beginner in the field of statistics, understanding and applying the Sign test can open up new possibilities in your data analysis journey.

