This article outlines the key steps required to train a neural network. Please feel free to comment or suggest additions if I missed any important points.

Key Steps for Training a Neural Network

The following are the 7 key steps for training a neural network.

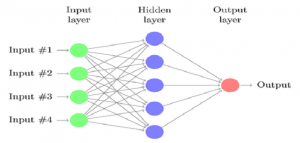

- Pick a neural network architecture. This means deciding on the connectivity pattern of the network, including the following aspects:

  - Number of input nodes: This equals the number of features in the input data.

  - Number of hidden layers: A reasonable default is a single hidden layer, which is the most common starting point.

  - Number of nodes in each hidden layer: When using multiple hidden layers, a common default is to use the same number of nodes in each hidden layer. In practice, the number of hidden units is usually comparable to the number of input nodes: either the same number, or perhaps two or three times as many.

  - Number of output nodes: This equals the number of output classes you want the neural network to distinguish.

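- Randomly initialize the weights: The weights are set to small random values very close to zero. They must not all start at the same value, because identical weights would make every hidden unit compute the same function and receive the same update.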

- Implement the forward propagation algorithm to compute the hypothesis (the network's output) for any input vector, passing the activations through each hidden layer in turn.

- Implement the cost function that will be minimized to optimize the parameter values. Recall that the cost function measures how well the neural network fits the training data.

- Implement the backpropagation algorithm to compute the error term associated with each node. These error terms give the partial derivatives of the cost function with respect to the weights.

- Use gradient checking to compare the gradient computed by backpropagation (the partial derivatives of the cost function) with a numerical estimate of the same gradient. Gradient checking validates that the backpropagation implementation is correct.

- Use gradient descent or a more advanced optimization technique, together with backpropagation, to minimize the cost function with respect to the parameters (weights).
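The seven steps above can be sketched end to end in plain Python. This is only a minimal illustration under stated assumptions, not a reference implementation: the XOR data set, the 2-4-1 layer sizes (a single sigmoid output for two classes), the sigmoid activation, the unregularized cross-entropy cost, the initialization range `eps=0.12`, the learning rate `alpha`, the iteration count, and every function name are choices made for this example.

```python
import math
import random

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def init_weights(n_out, n_in, rng, eps=0.12):
    # Step 2: small random weights in [-eps, eps] (assumed range); column 0 is the bias weight.
    return [[rng.uniform(-eps, eps) for _ in range(n_in + 1)] for _ in range(n_out)]

def layer(W, a):
    # One layer of forward propagation: prepend the bias unit, then apply sigmoid.
    a = [1.0] + a
    return [sigmoid(sum(w * x for w, x in zip(row, a))) for row in W]

def forward(W1, W2, x):
    # Step 3: returns the hidden activations and the network output (hypothesis).
    h = layer(W1, x)
    return h, layer(W2, h)

def cost(W1, W2, X, Y):
    # Step 4: mean cross-entropy over the training set (no regularization).
    total = 0.0
    for x, y in zip(X, Y):
        _, out = forward(W1, W2, x)
        total -= sum(t * math.log(o) + (1 - t) * math.log(1 - o) for t, o in zip(y, out))
    return total / len(X)

def backprop(W1, W2, X, Y):
    # Step 5: turn per-node error terms into gradients of the cost.
    G1 = [[0.0] * len(row) for row in W1]
    G2 = [[0.0] * len(row) for row in W2]
    m = len(X)
    for x, y in zip(X, Y):
        h, out = forward(W1, W2, x)
        d2 = [o - t for o, t in zip(out, y)]  # output-layer error
        d1 = [sum(W2[k][j + 1] * d2[k] for k in range(len(d2))) * h[j] * (1 - h[j])
              for j in range(len(h))]  # hidden-layer error (bias column skipped)
        for k, d in enumerate(d2):
            for j, a in enumerate([1.0] + h):
                G2[k][j] += d * a / m
        for j, d in enumerate(d1):
            for i, a in enumerate([1.0] + x):
                G1[j][i] += d * a / m
    return G1, G2

def numerical_grad(W1, W2, X, Y, W, eps=1e-4):
    # Step 6: central-difference estimate. W must be W1 or W2; each entry
    # is perturbed in place and then restored.
    G = [[0.0] * len(row) for row in W]
    for i, row in enumerate(W):
        for j in range(len(row)):
            old = row[j]
            row[j] = old + eps
            plus = cost(W1, W2, X, Y)
            row[j] = old - eps
            minus = cost(W1, W2, X, Y)
            row[j] = old
            G[i][j] = (plus - minus) / (2 * eps)
    return G

def max_diff(A, B):
    return max(abs(a - b) for ra, rb in zip(A, B) for a, b in zip(ra, rb))

# Step 1: 2 input nodes (one per feature), one hidden layer of 4 nodes, 1 output node.
X = [[0.0, 0.0], [0.0, 1.0], [1.0, 0.0], [1.0, 1.0]]
Y = [[0.0], [1.0], [1.0], [0.0]]
rng = random.Random(0)
W1 = init_weights(4, 2, rng)
W2 = init_weights(1, 4, rng)

# Step 6: the backprop gradient and the numerical estimate should agree closely.
G1, G2 = backprop(W1, W2, X, Y)
grad_gap = max(max_diff(G1, numerical_grad(W1, W2, X, Y, W1)),
               max_diff(G2, numerical_grad(W1, W2, X, Y, W2)))

# Step 7: plain batch gradient descent on the cost.
initial_cost = cost(W1, W2, X, Y)
alpha = 0.5
for _ in range(5000):
    G1, G2 = backprop(W1, W2, X, Y)
    W1 = [[w - alpha * g for w, g in zip(rw, rg)] for rw, rg in zip(W1, G1)]
    W2 = [[w - alpha * g for w, g in zip(rw, rg)] for rw, rg in zip(W2, G2)]
final_cost = cost(W1, W2, X, Y)

print("max gradient gap:", grad_gap)
print("cost before / after training:", initial_cost, final_cost)
```

A gradient gap that is many orders of magnitude smaller than the gradients themselves suggests the backpropagation code is correct. The numerical estimate needs two cost evaluations per weight, so it is normally used only as a one-off check and not inside the training loop.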

