This post presents a framework for deploying generative AI applications and breaks down the essential components that architects must consider. To learn more about this topic, see the book Generative AI on AWS.

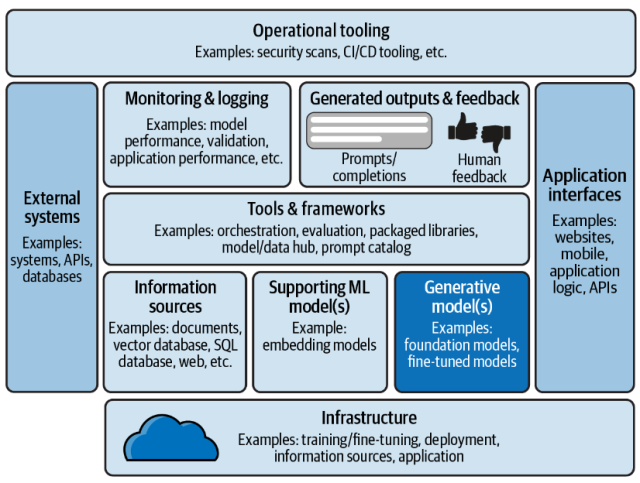

This solution/technology architecture represents a blueprint for deploying generative AI applications.

Its components are explained below:

- Generative Models: The Creative Engine – The choice of generative model, whether a foundation model or a fine-tuned variant, is central to the application’s ability to create relevant, high-quality outputs. You must balance the need for advanced capabilities against computational efficiency and cost. Cloud services such as Amazon Bedrock, Google Vertex AI, and Azure OpenAI Service can be used for working with generative models.

- Supporting ML Models: The Cognitive Core – Supporting machine learning models, such as embedding models, play a critical role in understanding and processing the input data. These models need to be selected and trained with care so that they align with the application’s objectives. They form the cognitive core of the application, enabling it to understand the nuances of the data it processes. Cloud services such as Amazon Bedrock, Amazon SageMaker JumpStart, and Azure Machine Learning can be used for working with ML models.

- Generated Outputs & Feedback: Completing the Loop – Generative AI applications are unique in their ability to generate new content. Whether it’s text, images, or code, the outputs generated by these generative models must be carefully monitored. You need to create mechanisms for capturing human feedback, which is essential for the iterative improvement of generative AI models. By incorporating user feedback directly into the model training cycle, the application can continuously learn and adapt, thus improving the relevance and quality of its outputs.

- Information Sources: Fuel for Generative AI Applications – A generative AI application’s output is only as good as the information it draws on. This makes the choice of information sources, from documents and databases to live web content, crucial. You must ensure that the data pipelines are robust and the sources are reliable, diverse, and ethically sourced to avoid biases and inaccuracies in the generated content.

- Tools & Frameworks: The Building Blocks of AI – Choosing the right tools and frameworks is key to creating quality generative AI applications. This means selecting the right orchestration tools, evaluation frameworks, and data management systems. These tools must be scalable, reliable, and compatible with the overall architecture of the application. Furthermore, having a model or data hub and a prompt catalog can streamline the development process. Cloud services such as Azure Machine Learning, Vertex AI, and Amazon SageMaker JumpStart can help simplify the machine learning workflow, including model training, tuning, and deployment, for generative AI applications.

- Application Interfaces: The User Experience Frontier – The interfaces through which users interact with generative AI applications—be it websites, mobile apps, or APIs—must be designed with user experience at the forefront. You should ensure that these interfaces are intuitive and provide seamless access to the application’s capabilities. The design should also be flexible to accommodate the diverse ways in which users might want to interact with the AI’s outputs.

- Operational Tooling: Ensuring Reliability and Security – The focus areas include security scans, continuous integration (CI), and continuous deployment (CD) tooling to safeguard the application against vulnerabilities and ensure that updates are rolled out smoothly. This means designing a system where operational tools are integrated seamlessly into the workflow, allowing for automated testing and deployment processes that maintain the integrity of the application.

- Monitoring & Logging: The Pulse of Your Application – Monitoring & logging provide invaluable insights into model performance, validation, and overall application health. By designing an architecture that prioritizes comprehensive monitoring and logging, you can ensure that any issues are quickly identified and addressed. This proactive approach to application maintenance not only prevents downtime but also informs iterative improvements to the system. Services such as Amazon CloudWatch and Azure Monitor can be used.

- External Systems: Expanding Capabilities through Integration – Generative AI applications often need to interact with external systems such as third-party APIs and databases to enhance their capabilities. This means designing an architecture that is not only compatible with these external systems but also secure and scalable. The ability to integrate with a wide array of systems can significantly expand the application’s functionality and reach.

- Infrastructure: The Foundation of AI Applications – Lastly, the infrastructure underpinning generative AI applications must be robust and scalable. It includes the hardware and software that facilitate the training, fine-tuning, deployment, and operation of the application. You must ensure that the infrastructure can handle the demands of complex AI models and large-scale data processing without compromising performance.
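
To make the relationships between some of these components more concrete, here is a minimal sketch in Python. It is not taken from the book or from any cloud SDK. Every name in it (`embed`, `cosine`, `generate`, `GenAIApp`) is a hypothetical stand-in. The toy bag-of-words `embed` function plays the role of a supporting embedding model, and `generate` is a placeholder for where a hosted generative model would be called. The sketch only illustrates how information sources, a supporting model, a generative model, logging, and feedback capture might fit together.

```python
import logging
import math
from collections import Counter
from dataclasses import dataclass, field
from typing import List, Tuple

logging.basicConfig(level=logging.INFO)
logger = logging.getLogger("genai_app")  # monitoring & logging component


def embed(text: str) -> Counter:
    """Toy stand-in for a supporting embedding model: bag-of-words counts."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def generate(prompt: str) -> str:
    """Placeholder for the generative model; a real app would call a hosted model here."""
    return f"[model output for: {prompt}]"


@dataclass
class GenAIApp:
    documents: List[str]  # information sources
    feedback: List[Tuple[str, str, int]] = field(default_factory=list)

    def retrieve(self, query: str) -> str:
        """Pick the document most similar to the query."""
        q = embed(query)
        return max(self.documents, key=lambda d: cosine(q, embed(d)))

    def answer(self, query: str) -> str:
        """Build a prompt from retrieved context, generate, and log the call."""
        context = self.retrieve(query)
        output = generate(f"Context: {context}\nQuestion: {query}")
        logger.info("query=%r context=%r output_chars=%d", query, context, len(output))
        return output

    def record_feedback(self, query: str, output: str, rating: int) -> None:
        """Store human feedback for later evaluation or fine-tuning."""
        self.feedback.append((query, output, rating))


app = GenAIApp(documents=[
    "Refunds are processed within five business days.",
    "Support is available by email on weekdays.",
])
reply = app.answer("How long do refunds take?")
app.record_feedback("How long do refunds take?", reply, rating=1)
```

In a real deployment, each stand-in would be replaced by a managed service or library chosen for that component, and the stored feedback would feed an evaluation or fine-tuning pipeline rather than an in-memory list.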

