In this post, you will learn about some of the new and updated machine learning services launched at the AWS re:Invent conference in November 2018. My personal favorite is Amazon Textract.

- Amazon Personalize

- Amazon Forecast

- Amazon Textract

- AWS DeepRacer

- Amazon Elastic Inference

- AWS Inferentia

- Amazon SageMaker (updated)

Amazon Personalize for Personalized Recommendations

Amazon Personalize is a managed machine learning service whose primary goal is to democratize recommendation systems, so that companies small and large can quickly get a recommendation system up and running and deliver a great user experience. See the Amazon Personalize Developer Guide for details. The following are some of the highlights:

- Helps personalize the experience of each user in the following ways:

- Personalized product and content recommendations: helps users discover products and content (articles, videos, audio, etc.) based on the engagement habits of similar users. This improves the overall user experience by driving higher engagement.

- Personalized promotional offers: shows the most appropriate offers based on the history of past promotions that resulted in conversions.

- Quick and easy to build and deploy: personalized models can be built and deployed with little effort.

- API-based integration: the personalized model can be integrated across channels and devices, including websites and mobile apps, using simple API calls.

Under the Hood

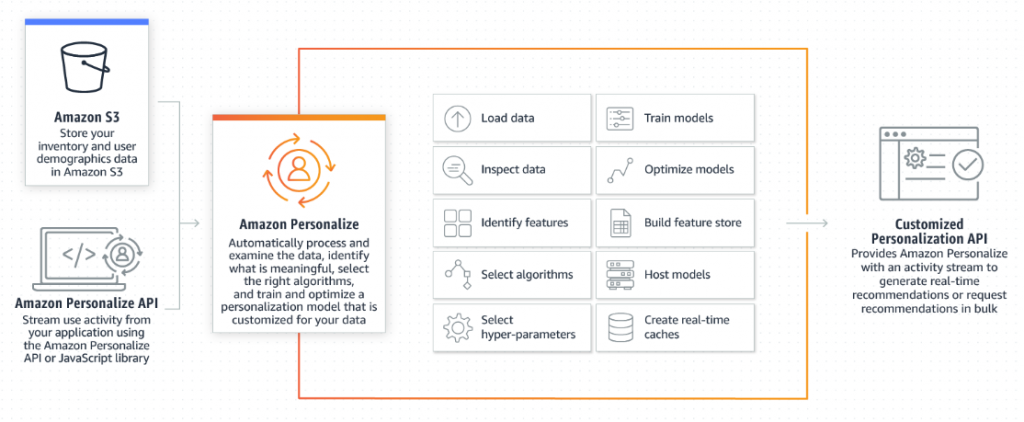

It includes AutoML capabilities that take care of loading the data, selecting the most appropriate machine learning algorithms, train the model and provide the accuracy metrics. Here is the technology architecture diagram representing the service:

Amazon Personalize Technology Architecture (Image Courtesy: Amazon)

As with any machine learning workflow, Amazon Personalize follows these steps:

- Gather and import training data to the dataset group.

- Build the model (solution) using the most appropriate ML algorithm. An algorithm is a mathematical expression that, when trained, becomes the model. The algorithm contains parameters whose unknown values are determined by training on input data. Hyperparameters are external to the algorithm and specify details of the training process, such as the number of training passes to run over the complete dataset or the number of hidden layers to use.

- Evaluate the trained model using metrics, and update it as needed.

- Deploy the solution (model).

- Get recommendations for users using Amazon Personalize Runtime.

- Continue to update the model based on customer activity, for example with the Amazon Personalize Events component.
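The "get recommendations" step above can be sketched with boto3. This is a minimal sketch rather than an official sample: `top_item_ids` is a hypothetical helper, the campaign ARN and user ID in the usage comment are placeholders, and it assumes a solution has already been deployed as a campaign. The client is passed in as an argument so the function can be tried with a stub before touching AWS.

```python
def top_item_ids(runtime_client, campaign_arn, user_id, num_results=5):
    """Return the recommended item IDs for one user from a deployed campaign."""
    response = runtime_client.get_recommendations(
        campaignArn=campaign_arn,
        userId=user_id,
        numResults=num_results,
    )
    # Each entry of "itemList" is a dict that carries an "itemId" key.
    return [item["itemId"] for item in response.get("itemList", [])]


# Usage (needs AWS credentials; the ARN and user ID are placeholders):
#   import boto3
#   client = boto3.client("personalize-runtime")
#   print(top_item_ids(client, "<your-campaign-arn>", "user-42"))
```

Keeping the AWS call behind a small function like this also makes it easy to swap campaigns when a retrained solution is deployed.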

Who would find the Amazon Personalize service very useful?

- Content-focused websites such as video and audio portals

- News websites, which can show each user the news they are likely to want based on their past reading behavior

- eCommerce portals

Amazon Forecast for Time-series Forecasting

Amazon Forecast is a managed machine learning time-series forecasting service for delivering highly accurate forecasts.

The following are some of the algorithms supported for creating time-series forecasting models:

- ARIMA (AutoRegressive Integrated Moving Average): Makes use of autocorrelations in the data.

- Exponential smoothing (ETS): Makes use of the description of the trend and seasonality in the data. Exponential smoothing techniques forecast using weighted averages of past observations, with the weights decaying exponentially as the observations get older.

- DeepAR+: A supervised learning algorithm for forecasting scalar (one-dimensional) time series with auto-regressive recurrent neural networks (RNN). It could be used, for example, to make predictions that help reduce excess inventory in supply chains. It is recommended when the dataset contains hundreds of related time series, unlike ARIMA and ETS models, which are fit to each time series individually. A good read on arXiv: DeepAR: Probabilistic Forecasting with Autoregressive Recurrent Networks.

- Mixture Density Networks: A probabilistic deep neural network algorithm used for time-series forecasting.

- Multi-Quantile Recurrent Neural Network (MQRNN): Described in an interesting paper, A Multi-Horizon Quantile Recurrent Forecaster.

- Non-parametric time series (NPTS): Similar to classical forecasting methods, such as exponential smoothing (ETS) and autoregressive integrated moving average (ARIMA), NPTS generates predictions for each time series individually. It predicts the future value distribution of a given time series by sampling from past observations.

- Prophet: Based on Facebook's open-source forecasting tool, Prophet can be used for business forecasting tasks having the following characteristics:

- Hourly, daily, or weekly observations with at least a few months (preferably a year) of history

- Strong multiple “human-scale” seasonalities: day of week and time of year

- Important holidays that occur at irregular intervals that are known in advance (e.g. the Super Bowl)

- A reasonable number of missing observations or large outliers

- Historical trend changes, for instance due to product launches or logging changes

- Trends that are non-linear growth curves, where a trend hits a natural limit or saturates

- Spline Quantile Forecaster: A recurrent neural network (RNN)-based supervised learning algorithm for probabilistic forecasting of scalar (one-dimensional) time series.
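To make the "weights decaying exponentially" idea behind exponential smoothing concrete, here is a minimal sketch of simple exponential smoothing in plain Python. It is an illustration only, not what Amazon Forecast runs internally: it ignores trend and seasonality, and the function names and the smoothing factor `alpha` are my own choices.

```python
def exponential_smoothing(series, alpha):
    """Simple exponential smoothing: return the one-step-ahead forecast."""
    if not series:
        raise ValueError("series must not be empty")
    if not 0 < alpha <= 1:
        raise ValueError("alpha must be in (0, 1]")
    level = series[0]  # start from the first observation
    for value in series[1:]:
        # New level = weighted average of the newest value and the old level.
        level = alpha * value + (1 - alpha) * level
    return level


def observation_weights(n, alpha):
    """Weights placed on the n most recent observations, newest first."""
    return [alpha * (1 - alpha) ** k for k in range(n)]


print(exponential_smoothing([10, 12, 14], alpha=0.5))  # 12.5
print(observation_weights(3, alpha=0.5))               # [0.5, 0.25, 0.125]
```

A larger `alpha` reacts faster to recent changes, while a smaller one averages over a longer history.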

Under the Hood

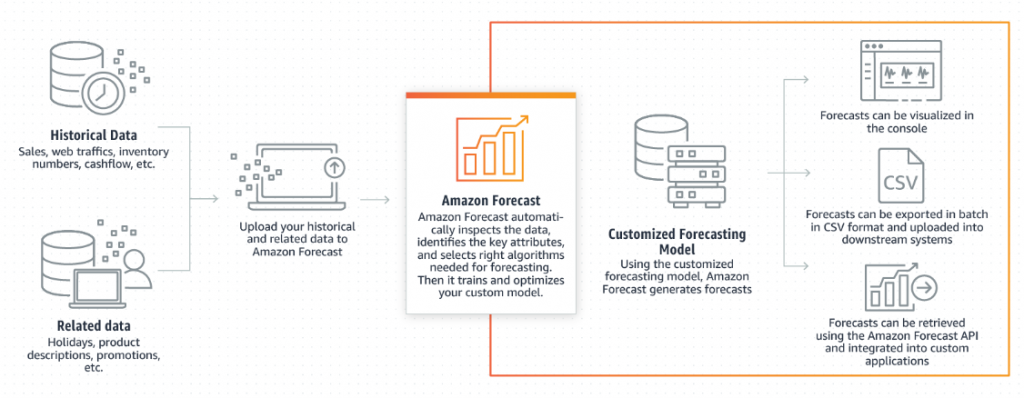

Amazon Forecast includes AutoML capabilities thereby doing some of the following once the data gets loaded in S3 – automatically load and inspect the data, select the right algorithms, train a model, provide accuracy metrics, and generate forecasts. The following represents the technology architecture of Amazon Forecast service. Pay attention to the different output supported in form of visualization in the AWS dashboard, API and CSV.

Figure 2. Amazon Forecast Technology Architecture (Image Courtesy: Amazon)
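For the API output, a forecast can be read back with the boto3 `forecastquery` client. The sketch below is an assumption-laden illustration: `quantile_series` is a hypothetical helper, the forecast ARN and item ID are placeholders, and it assumes the forecast was generated with the quantile being requested (for example `p50`).

```python
def quantile_series(forecast_client, forecast_arn, item_id, quantile="p50"):
    """Return [(timestamp, value), ...] for one item and one quantile."""
    response = forecast_client.query_forecast(
        ForecastArn=forecast_arn,
        Filters={"item_id": item_id},
    )
    # Predictions are keyed by quantile name; a missing key yields an empty list.
    predictions = response["Forecast"]["Predictions"]
    return [(p["Timestamp"], p["Value"]) for p in predictions.get(quantile, [])]


# Usage (needs AWS credentials; the ARN and item ID are placeholders):
#   import boto3
#   client = boto3.client("forecastquery")
#   for timestamp, value in quantile_series(client, "<your-forecast-arn>", "sku-1"):
#       print(timestamp, value)
```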

Amazon Textract for Document Scanning (OCR)

Amazon Textract is a fully managed service that automatically extracts text and data from scanned documents. It goes beyond simple optical character recognition (OCR) to also identify the contents of fields in forms and information stored in tables. The following are some of the reasons why Textract looks like a very attractive and useful service:

- Trained on millions of documents from almost every industry, including invoices, receipts, contracts, tax documents, sales orders, enrollment forms, benefits applications, insurance claims, and policy documents.

- Cost-effective: one could scan 1,000 documents for $1.50. The best part is that you only pay for what you use. There are no upfront commitments or long-term contracts.

The following are some of the features of Textract service:

- Automatically detect printed text and numbers in a scan or rendering of a document using OCR

- Automatically detect key-value pairs in document images, so that the inherent context of the document is retained without any manual intervention.

- Preserves the composition of data stored in tables during extraction. This is very useful for documents such as invoices, receipts, medical records, and financial records.

- Extracted data is returned with bounding box coordinates for finer analysis.

- A confidence score is returned with the extracted data, so users can decide whether they want to use it.
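The bounding boxes and confidence scores listed above show up as fields on each block of a Textract response. Here is a minimal sketch of filtering detected lines by confidence. `confident_lines` is a hypothetical helper, the threshold of 90 is an arbitrary choice, and the file name in the usage comment is a placeholder.

```python
def confident_lines(response, min_confidence=90.0):
    """Return (text, confidence, bounding_box) for LINE blocks above a threshold."""
    results = []
    for block in response.get("Blocks", []):
        if block.get("BlockType") != "LINE":
            continue  # skip PAGE and WORD blocks
        confidence = block.get("Confidence", 0.0)
        if confidence < min_confidence:
            continue  # too uncertain to use without review
        # BoundingBox holds Left, Top, Width, Height as ratios of the page size.
        box = block.get("Geometry", {}).get("BoundingBox", {})
        results.append((block.get("Text", ""), confidence, box))
    return results


# Usage (needs AWS credentials; "invoice.png" is a placeholder file):
#   import boto3
#   textract = boto3.client("textract")
#   with open("invoice.png", "rb") as f:
#       response = textract.detect_document_text(Document={"Bytes": f.read()})
#   for text, confidence, box in confident_lines(response):
#       print(f"{confidence:.1f}  {text}  {box}")
```

Lines that fall below the threshold are natural candidates for manual review rather than silent rejection.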

Who would find the Amazon Textract service very useful?

The following are some of the business areas where this service will be very useful:

- Accounts Receivable/Accounts Payable: In accounts receivable, checks, invoices, and the related remittance pages sent by buyers need to be scanned. OCR services such as ABBYY and Nuance are used to scan such documents and extract their text. Amazon Textract should prove very useful in the AR domain.

- Banking: Banks need to scan large numbers of checks at regular intervals. This service will also be very useful in such cases.

AWS DeepRacer

For data scientists planning to get into reinforcement learning (RL), AWS DeepRacer provides a way to get hands-on with RL, experiment, and learn through autonomous driving. One could get started with the virtual car and tracks in the cloud-based 3D racing simulator. For a real-world experience, one can deploy the trained models onto an AWS DeepRacer car.

One could build models in Amazon SageMaker and train, test, and iterate quickly and easily on the track in the AWS DeepRacer 3D racing simulator.
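In practice, getting hands-on with RL in DeepRacer largely means writing a reward function in Python that scores each step of the car. The following is a minimal sketch that rewards staying near the center line. The `params` keys used here (`all_wheels_on_track`, `track_width`, `distance_from_center`) are the ones I understand the service to pass in, and the band thresholds and reward values are arbitrary choices for illustration.

```python
def reward_function(params):
    """Reward staying close to the center line; near-zero reward off the track."""
    if not params["all_wheels_on_track"]:
        return 1e-3

    track_width = params["track_width"]
    distance_from_center = params["distance_from_center"]

    # Three bands around the center line, as fractions of the track width.
    if distance_from_center <= 0.1 * track_width:
        return 1.0
    if distance_from_center <= 0.25 * track_width:
        return 0.5
    if distance_from_center <= 0.5 * track_width:
        return 0.1
    return 1e-3
```

Changing the bands or adding terms (for speed, say) changes what behavior the agent learns, which is exactly the kind of experimentation the simulator is meant for.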

Amazon Elastic Inference

Amazon Elastic Inference lets you attach GPU-powered inference acceleration to any Amazon EC2 or Amazon SageMaker instance for faster inference at a lower cost. Making predictions (inference) with a trained machine learning model can drive as much as 80-90% of the compute costs of an application. Using this service, one can reduce inference costs by up to 75%.
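Putting the two figures together gives a rough upper bound on the overall saving. The sketch below is back-of-the-envelope arithmetic using only the percentages quoted above; the function name is my own, and it assumes the non-inference part of the bill stays unchanged.

```python
def total_cost_reduction(inference_share, inference_saving):
    """Fraction of total compute cost saved when only inference gets cheaper."""
    return inference_share * inference_saving


for share in (0.80, 0.90):
    saving = total_cost_reduction(share, 0.75)
    print(f"inference share {share:.0%}: total compute cost falls by up to {saving:.1%}")
```

So with inference at 80-90% of compute costs and a 75% reduction in inference cost, the total bill could fall by up to roughly 60% to 67.5%.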

Currently, the following frameworks are supported:

- TensorFlow

- Apache MXNet

- Open Neural Network Exchange (ONNX) models

AWS Inferentia

AWS Inferentia is a machine learning inference chip designed to deliver high performance at low cost. It supports the TensorFlow, Apache MXNet, and PyTorch deep learning frameworks, as well as models that use the ONNX format. AWS Inferentia (dedicated chip) helps when prediction workloads require an entire GPU or have extremely low latency requirements. It provides high throughput, low latency inference performance at an extremely low cost. Each chip provides hundreds of TOPS (tera operations per second) of inference throughput to allow complex models to make fast predictions.

Amazon SageMaker (Updated)

Amazon SageMaker has been further enhanced to support the following:

- Reinforcement learning support

- Easier to build ML models

- Amazon SageMaker Neo: This service aims to let developers train machine learning models once and run them anywhere in the cloud and at the edge. Amazon SageMaker Neo optimizes models to run up to twice as fast, with less than a tenth of the memory footprint, with no loss in accuracy.

