Last updated: 19th April, 2024

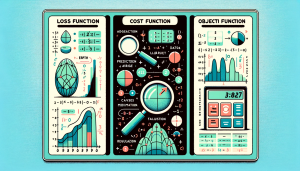

Among the terms used in training machine learning models, loss function, cost function, and objective function often cause a fair amount of confusion, especially for aspiring data scientists and practitioners in the early stages of their careers. The confusion is understandable: these terms are closely related and often used interchangeably, yet they are different and serve distinct purposes in machine learning algorithms.

Understanding the differences and specific roles of loss function, cost function, and objective function is more than a mere exercise in academic rigor. By grasping these concepts, data scientists can make informed decisions about model architecture, optimization strategies, and ultimately, the effectiveness of their predictive models. In this blog, we’ll explore each term with real-world examples and mathematical explanations, highlighting their unique roles and interdependencies.

Loss Function

A loss function evaluates the performance of a model on a single data point by comparing the model’s prediction with the actual (ground truth) value, thereby computing a penalty for that individual prediction. Let’s take an example of a machine learning model predicting the temperature for a single day. If the actual temperature is 20°C and the model predicts 22°C, the loss function calculates the error for this specific prediction.

Mathematical Representation:

- Squared error for a single data point (the per-sample term that Mean Squared Error, MSE, averages), where $L$ represents the loss function:

- Formula: $L(y, \hat{y}) = (y - \hat{y})^2$

- Here, $y$ is the actual value and $\hat{y}$ is the predicted value.

- For our temperature example: $y = 20$, $\hat{y} = 22$, so $L = (20 - 22)^2 = 4$.
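The single-point calculation above can be sketched in a few lines of Python. This is a minimal illustration, not a library API; the function name `squared_error` is chosen here for the example.

```python
def squared_error(y_true: float, y_pred: float) -> float:
    """Squared-error loss for one prediction: (y - y_hat)^2."""
    return (y_true - y_pred) ** 2


# Temperature example from the text: actual 20°C, predicted 22°C.
loss = squared_error(20.0, 22.0)
print(loss)  # 4.0
```

Because the difference is squared, the loss is the same whether the model overshoots or undershoots by 2°C.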

Cost Function

A cost function evaluates the overall performance of the model across the entire dataset (or a batch from it). It aggregates the loss penalties of all individual data points, and may include additional constraints or penalties (like L1 or L2 regularization). Let’s continue with the same temperature-prediction example. Where the loss function scores the prediction for a single day, the cost function assesses the model’s performance over the entire month’s data.

Mathematical Representation:

- MSE over the entire dataset:

- Formula: $J = \frac{1}{N} \sum_{i=1}^{N} (y_i - \hat{y}_i)^2$

- $N$ is the number of days in the dataset.

- $J$ represents the cost function, calculated as the MSE over the dataset.

- $y_i$ is the actual value of the i-th data point, and $\hat{y}_i$ is the corresponding predicted value.

- The formula calculates the mean of the squared differences between the actual and predicted values for all data points in the dataset.

- Incorporating Regularization:

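- L1 (Lasso) adds the sum of the absolute values of the coefficients to the cost, scaled by a regularization parameter.

- L2 (Ridge) adds the sum of the squares of the coefficients to the cost, scaled by a regularization parameter.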
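To make the aggregation concrete, here is a small sketch of the MSE cost with optional L1 and L2 penalty terms. The function names, the sample temperatures, the coefficients, and the regularization strength `lam` are all made up for illustration; they do not come from a real dataset or a particular library.

```python
from typing import Sequence


def mse_cost(y_true: Sequence[float], y_pred: Sequence[float]) -> float:
    """Mean of the per-sample squared errors: J = (1/N) * sum((y_i - y_hat_i)^2)."""
    if len(y_true) != len(y_pred) or len(y_true) == 0:
        raise ValueError("inputs must be non-empty and of equal length")
    return sum((y - p) ** 2 for y, p in zip(y_true, y_pred)) / len(y_true)


def l1_penalty(coefficients: Sequence[float]) -> float:
    """Lasso-style term: sum of absolute values of the coefficients."""
    return sum(abs(w) for w in coefficients)


def l2_penalty(coefficients: Sequence[float]) -> float:
    """Ridge-style term: sum of squares of the coefficients."""
    return sum(w * w for w in coefficients)


# Hypothetical data: four days of actual vs. predicted temperatures (°C).
actual = [20.0, 21.0, 19.0, 23.0]
predicted = [22.0, 20.0, 19.0, 21.0]
cost = mse_cost(actual, predicted)  # (4 + 1 + 0 + 4) / 4 = 2.25

# Hypothetical model coefficients and regularization strength.
coefficients = [0.5, -1.5, 0.0]
lam = 0.5

cost_l1 = cost + lam * l1_penalty(coefficients)  # 2.25 + 0.5 * 2.0 = 3.25
cost_l2 = cost + lam * l2_penalty(coefficients)  # 2.25 + 0.5 * 2.5 = 3.5
print(cost, cost_l1, cost_l2)
```

Note that each term inside the sum in `mse_cost` is exactly the single-point loss, which is the sense in which a cost function aggregates losses.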

Objective Function

An objective function is a broader term in optimization, encompassing cost functions and potentially other goals beyond the direct minimization of the error. It can include optimization goals like sparsity of the model coefficients or keeping their magnitudes small, as seen in L1 and L2 regularization respectively. Unlike loss and cost functions, which typically focus on minimization, an objective function can be designed for either maximization or minimization (for example, maximizing a log-likelihood).

In a machine learning problem where the goal is not only to minimize prediction error but also to ensure a sparse representation of the model (few non-zero coefficients), the objective function would include both these aspects.

Mathematical Representation:

- Combining Cost Function and Additional Goals:

- Formula: Objective Function = Cost Function + Regularization term + …

- For instance, combining MSE with L1 regularization for sparsity: $\text{Objective} = J + \lambda \sum |\text{coefficients}|$

- Here, λ is a regularization parameter that balances the two goals.
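As a rough sketch of the MSE-plus-L1 objective above, the following function computes the cost term and the penalty term and combines them with λ. The name `l1_objective`, the sample values, and the choices of `lam` are assumptions made for this example only.

```python
from typing import Sequence


def l1_objective(
    y_true: Sequence[float],
    y_pred: Sequence[float],
    coefficients: Sequence[float],
    lam: float,
) -> float:
    """Objective = J + lam * sum(|coefficients|), where J is the MSE cost."""
    n = len(y_true)
    cost = sum((y - p) ** 2 for y, p in zip(y_true, y_pred)) / n
    penalty = sum(abs(w) for w in coefficients)
    return cost + lam * penalty


# Hypothetical values: two days of temperatures and three model coefficients.
actual = [20.0, 21.0]
predicted = [22.0, 20.0]
coefficients = [0.5, -1.5, 0.0]

# With lam = 0 the objective reduces to the plain cost function.
print(l1_objective(actual, predicted, coefficients, lam=0.0))  # 2.5

# A larger lam gives the coefficient penalty more weight relative to the error.
print(l1_objective(actual, predicted, coefficients, lam=0.5))  # 3.5
```

An objective meant to be maximized can be handled by the same minimization machinery by minimizing its negative.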

In summary, while a loss function calculates the penalty for a single prediction, a cost function aggregates these over a dataset and may include additional penalties. An objective function is a more encompassing term that includes cost functions and other optimization goals, allowing for both maximization and minimization objectives in the context of machine learning training.
