Last updated: 27th Jan, 2024

Training an AI / machine learning model as sophisticated as the one behind ChatGPT is a multi-step process that refines its ability to understand and generate human-like text. Let’s break down the ChatGPT training process into three primary steps. Note that OpenAI has not published a dedicated paper on how ChatGPT was trained; the description here follows OpenAI’s announcement page, Introducing ChatGPT.

Fine-tuning Base Model with Supervised Learning

The first phase starts with collecting demonstration data. Here, prompts are taken from a dataset, and human labelers provide the desired output behavior, which essentially sets the standard for the AI’s responses. For example, if the prompt is to “Explain reinforcement learning to a 6-year-old,” the labeler would craft an explanation that’s comprehensible at that level.

This demonstration data is then used to fine-tune a base model like GPT-3.5 through supervised learning. In this stage, the model learns to predict the next word in a sequence, given the previous words, and aims to mimic the demonstrated behavior.
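To make the next-word objective concrete, here is a minimal sketch of the loss that supervised fine-tuning typically minimizes: the average negative log-likelihood of the tokens the labeler wrote. The function name and the toy probabilities are illustrative assumptions, not OpenAI's actual training code.

```python
import math


def next_token_loss(token_probs, target_ids):
    """Average negative log-likelihood of the demonstrated tokens.

    token_probs[t] is the model's predicted distribution over the vocabulary
    at position t (given the previous tokens); target_ids[t] is the index of
    the token the human labeler actually wrote at that position.
    """
    nll = [-math.log(token_probs[t][target_ids[t]]) for t in range(len(target_ids))]
    return sum(nll) / len(nll)


# Toy example with a 3-token vocabulary and a 2-token demonstration.
probs = [
    [0.7, 0.2, 0.1],  # model's prediction for position 0
    [0.1, 0.1, 0.8],  # model's prediction for position 1
]
demonstration = [0, 2]  # the tokens the labeler wrote
loss = next_token_loss(probs, demonstration)
```

The loss shrinks as the model puts more probability on the demonstrated tokens, which is what "mimicking the demonstrated behavior" means in practice.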

Reward Modeling based on Human Labelling

The next step involves collecting comparison data. The model generates several sample outputs in response to a prompt, and human labelers then rank these from best to worst. These rankings capture the nuances of what makes one response more valuable or appropriate than another.

This ranked data is used to train a reward model, which learns to predict the quality of ChatGPT’s outputs from the human labelers’ rankings. Essentially, it is a guide that encodes the preferences and values reflected in human judgments.
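One common way to turn a single ranking into training examples is to expand it into pairwise comparisons, so that a ranking of K outputs yields K·(K−1)/2 (preferred, rejected) pairs. The sketch below assumes this pairwise scheme; the source does not spell out the exact format, and the function name is hypothetical.

```python
from itertools import combinations


def ranking_to_pairs(ranked_outputs):
    """Expand a best-to-worst ranking into (preferred, rejected) pairs.

    combinations() keeps the input order, so the first element of every
    pair is always the output the labeler ranked higher.
    """
    return list(combinations(ranked_outputs, 2))


pairs = ranking_to_pairs(["output A", "output B", "output C"])
# pairs holds ("output A", "output B"), ("output A", "output C"), ("output B", "output C")
```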

A reward model can be implemented as a classifier that predicts one of two classes, positive or negative. Such models are called binary classifiers and are often built on smaller language models like BERT. Many language-aware binary classifiers already exist, for example to classify sentiment or detect toxic language. Because training a custom reward model is relatively labor-intensive and costly, it is worth exploring existing binary classifiers before committing to this effort. For more details, see the book Generative AI on AWS. Here are the main steps to train a custom reward model:

- The first step in training a custom reward model is to collect data from humans on what is helpful, honest, and harmless. This is called collecting human feedback, and the people who provide it are called annotators or labelers. In a generative context, it’s common to ask annotators to rank several completions for a given prompt from 1 to 3 on each criterion: most helpful to least helpful, most honest to least honest, and most harmless to most harmful. Services such as Amazon SageMaker Ground Truth can be used for this purpose.

- The next step is to convert this data into a format used to train the reward model to predict either a positive reward (1) or a negative reward (0). In other words, you need to convert rankings 1 through 3 into 0s and 1s. This training data is used to train the reward model that will ultimately predict a reward for a generated completion during the RL fine-tuning process described in the next section.

- Finally, the reward model can be built as a BERT-based text classifier trained to predict the probability distribution across two classes, positive (1) and negative (0), for a given prompt-completion pair. The class with the highest probability is the predicted reward.
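The last two steps above can be sketched in a few lines. The text does not say exactly how rankings 1 through 3 map to 0s and 1s, so the rule used here (rank 1 is positive, everything else is negative) is an assumption chosen for simplicity. The two logits passed to `predict_reward` stand in for the output of a hypothetical two-class classifier; no real BERT model is loaded.

```python
import math


def rankings_to_labels(ranked_completions, positive_rank=1):
    """Convert (completion, rank) pairs into (completion, 0 or 1) pairs.

    Assumed rule: ranks at or above `positive_rank` (1 = best) become the
    positive class (1); all other ranks become the negative class (0).
    """
    return [(text, 1 if rank <= positive_rank else 0) for text, rank in ranked_completions]


def predict_reward(logits):
    """Turn [negative_logit, positive_logit] into a predicted class and probabilities."""
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    probs = [e / total for e in exps]
    predicted_class = probs.index(max(probs))  # class with the highest probability
    return predicted_class, probs


labels = rankings_to_labels([("completion A", 1), ("completion B", 2), ("completion C", 3)])
reward, probs = predict_reward([0.0, 2.0])  # hypothetical classifier logits
```

In RL fine-tuning setups, the probability (or logit) of the positive class is often used as the reward signal rather than the hard 0/1 class, because it gives a smoother signal to learn from.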

Reinforcement Learning for Improving Output

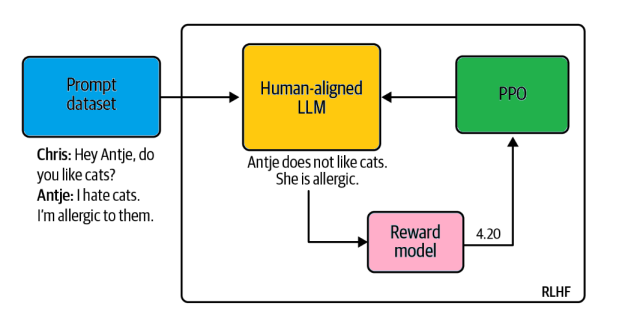

Finally, we come to the reinforcement learning phase, where the reward model is used to further train the ChatGPT model using the Proximal Policy Optimization (PPO) algorithm. Here’s how it works:

- A new prompt is sampled from the dataset.

- The ChatGPT model, initialized with the supervised policy, generates an output.

- The reward model evaluates this output and assigns a reward.

- This reward is used to update the ChatGPT model’s policy, encouraging it to generate better outputs in the future.
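The four steps above can be sketched as a toy loop. Everything here is a stand-in: the "policy" is just a probability distribution over three canned completions, the "reward model" is a lookup table, and the update is a plain policy-gradient (REINFORCE) step rather than PPO, used only to keep the example short.

```python
import math
import random

# Hypothetical canned completions standing in for text an LLM would generate.
COMPLETIONS = ["helpful answer", "vague answer", "rude answer"]

# Hypothetical stand-in for a trained reward model.
TOY_REWARDS = {"helpful answer": 1.0, "vague answer": 0.2, "rude answer": -1.0}


def softmax(logits):
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def toy_rl_loop(prompts, steps=1000, lr=0.1, seed=0):
    rng = random.Random(seed)
    logits = [0.0, 0.0, 0.0]  # policy parameters; the policy starts out uniform
    for _ in range(steps):
        prompt = rng.choice(prompts)  # 1. sample a prompt (the toy policy ignores it)
        probs = softmax(logits)
        idx = rng.choices(range(len(COMPLETIONS)), weights=probs)[0]  # 2. generate an output
        reward = TOY_REWARDS[COMPLETIONS[idx]]  # 3. score it with the reward model
        # 4. update the policy: the gradient of log pi(idx) w.r.t. the logits
        #    is one_hot(idx) - probs, scaled here by the reward.
        for j in range(len(logits)):
            grad = (1.0 if j == idx else 0.0) - probs[j]
            logits[j] += lr * reward * grad
    return softmax(logits)


final_probs = toy_rl_loop(["prompt 1", "prompt 2"])
```

After enough iterations the policy shifts most of its probability toward the completion the reward model scores highest, which is the behavior the loop is meant to encourage.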

In RLHF, PPO treats the LLM itself as the policy to optimize. As the name suggests, PPO optimizes this policy so that it generates completions that are more aligned with human values and preferences. With each iteration, PPO makes small, bounded updates to the LLM weights, hence the term Proximal Policy Optimization.
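The "small and bounded" part is usually achieved with PPO's clipped surrogate objective, sketched below for a single sampled action. The text does not say which PPO variant or clipping range ChatGPT's training used, so the clipped form and the `clip_eps=0.2` default are assumptions taken from common practice.

```python
import math


def ppo_clipped_objective(logp_new, logp_old, advantage, clip_eps=0.2):
    """PPO clipped surrogate objective for one sampled action.

    logp_new / logp_old: log-probability of the action under the updated
    policy and under the policy that generated the sample.
    advantage: how much better the action was than expected
    (in RLHF, derived from the reward model's score).
    """
    ratio = math.exp(logp_new - logp_old)  # how far the policy has moved
    clipped_ratio = max(1.0 - clip_eps, min(1.0 + clip_eps, ratio))
    # Taking the minimum removes any incentive to move the ratio
    # outside the range [1 - clip_eps, 1 + clip_eps].
    return min(ratio * advantage, clipped_ratio * advantage)


unchanged = ppo_clipped_objective(0.0, 0.0, advantage=2.0)  # ratio is 1, nothing to clip
big_jump = ppo_clipped_objective(1.0, 0.0, advantage=2.0)   # ratio is about 2.72, clipped to 1.2
```

Because the objective stops improving once the ratio leaves the clipping range, each update keeps the new policy close to the old one.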

This cycle continues with the ChatGPT model progressively refining its responses to be more aligned with human preferences.
