In this post, you will learn about artificial intelligence (AI) use cases in Telemedicine / Telehealth, along with some of the key implementation challenges related to AI / machine learning. If you work in data science or machine learning, you may want to go through these challenges, most of them AI-related, which have emerged in the Telemedicine domain as the need for reliable Telemedicine services has surged.

What is Telemedicine?

Telemedicine is the remote delivery of healthcare services using digital communication technologies such as phone, video calls, and internet-based applications. It covers a wide range of services, including consultation, diagnosis, treatment, monitoring, and follow-up care, and it is used for purposes such as managing chronic conditions, providing mental health services, and delivering primary care. Telemedicine has the potential to improve access to healthcare, especially in remote or underserved communities, while increasing efficiency and reducing healthcare costs. The use of digital health technologies in telemedicine is growing because they offer new ways to improve the quality and efficiency of healthcare delivery, and this growth is expected to continue in the years to come.

AI / Machine learning use cases for Telemedicine

The following represents some of the important AI / machine learning use cases for Telemedicine:

- Telemonitoring: AI can be used to monitor patients remotely, checking their vital signs and providing early detection of potential health problems. The vital signs could include blood pressure, pulse rate, respiratory rate, blood oxygen level, weight, and body temperature. Machine learning classification models can help identify which patients are at risk of certain conditions and need further monitoring. Some devices can send the data directly to the Telemedicine system for analysis. Otherwise, patients need to enter the data into the system manually at regular intervals.

- Diagnosis: AI can be used to assist in the diagnosis of patients by providing recommendations based on symptoms and medical history. AI can also be used to analyze medical images, such as X-rays and CT scans, as well as other diagnostic test results. Patients would upload the images to a secure server, and the AI system would provide recommendations to the physician. Cloud-based solutions can provide the scalability and security this requires.

- Treatment plans: AI can be used to develop personalized treatment plans for patients based on their individual needs and medical history. The system would take into account the patient’s preferences, such as type of treatment, location, etc. Machine learning algorithms can be used to identify which treatments are most effective for each patient.

- Patient engagement: AI can be used to improve patient engagement by providing reminders for appointments, medication adherence, and follow-up care. In addition, AI chatbots can provide answers to common questions and help to schedule appointments.

- Chronic disease management: AI can be used to support the management of chronic diseases such as diabetes, hypertension, and heart disease. This can be done through the use of Telemedicine applications which provide personalized care plans and reminders, track progress, and offer feedback to patients. Machine learning can be used to predict patient outcomes, such as the likelihood of developing complications, and to identify early warning signs.
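As a rough illustration of the telemonitoring use case above, the sketch below screens a set of vital-sign readings and flags patients who may need further monitoring. It is a rule-based stand-in for the machine learning classifier described in the list, not a trained model. The names `NORMAL_RANGES`, `VitalsReading`, `out_of_range_vitals` and `needs_follow_up` are hypothetical, and the ranges are illustrative placeholders, not clinical guidance.

```python
from dataclasses import dataclass

# Illustrative reference ranges only (assumption); NOT clinical guidance.
# A real system would use clinically validated thresholds or a trained model.
NORMAL_RANGES = {
    "systolic_bp": (90, 140),       # mmHg
    "pulse_rate": (50, 110),        # beats per minute
    "respiratory_rate": (10, 24),   # breaths per minute
    "spo2": (92, 100),              # blood oxygen saturation, percent
    "temperature_c": (35.5, 38.0),  # degrees Celsius
}


@dataclass
class VitalsReading:
    patient_id: str
    values: dict  # vital sign name -> measured value


def out_of_range_vitals(reading: VitalsReading) -> list:
    """Return the names of vital signs that fall outside their reference range."""
    flagged = []
    for name, (low, high) in NORMAL_RANGES.items():
        value = reading.values.get(name)
        # Vital signs that were not reported are skipped, not flagged.
        if value is not None and not (low <= value <= high):
            flagged.append(name)
    return flagged


def needs_follow_up(reading: VitalsReading, min_flags: int = 1) -> bool:
    """Flag a patient for further monitoring if enough vitals are out of range."""
    return len(out_of_range_vitals(reading)) >= min_flags


reading = VitalsReading("patient-001", {"systolic_bp": 165, "pulse_rate": 88, "spo2": 89})
print(out_of_range_vitals(reading))  # ['systolic_bp', 'spo2']
print(needs_follow_up(reading))      # True
```

In practice, a screening rule like this could serve as a baseline against which a trained classifier is compared.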

Telemedicine Challenges: AI, Data, Cloud-based implementations

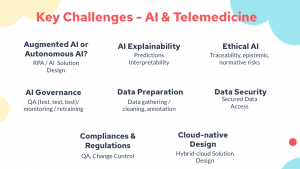

Here are the key challenges that need to be addressed to take full advantage of AI, robotic process automation (RPA) and cloud computing when delivering Telemedicine services:

Augmented AI or Autonomous AI?

A key decision in the AI solution design is whether the predictions served by machine learning models can be used to automate the workflow without doctors’ intervention (autonomous AI) or should only assist doctors in making the final decision (augmented AI). Let’s say a deep learning model is used to predict whether a person is suffering from a disease or not. The solution design must state whether the decision can be automated or whether doctors still take the final decision based on the prediction.
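One way to make this design choice explicit is to encode it as a routing rule. The following is a minimal sketch under stated assumptions: the `Route` enum, the `route_prediction` function, the `mode` values and the 0.95 threshold are all hypothetical and chosen only for illustration.

```python
from enum import Enum


class Route(Enum):
    AUTOMATED = "automated"
    PHYSICIAN_REVIEW = "physician_review"


def route_prediction(probability: float, mode: str = "augmented",
                     auto_threshold: float = 0.95) -> Route:
    """Decide whether a model prediction may drive the workflow on its own.

    probability: predicted probability of the positive class (0 to 1).
    mode: "augmented" always sends the case to a physician;
          "autonomous" automates only when the model is confident either way.
    auto_threshold: hypothetical confidence cut-off for automation.
    """
    if not 0.0 <= probability <= 1.0:
        raise ValueError("probability must be between 0 and 1")
    if mode == "augmented":
        return Route.PHYSICIAN_REVIEW
    if mode != "autonomous":
        raise ValueError("mode must be 'augmented' or 'autonomous'")
    # Confidence is the distance from an undecided 50/50 prediction,
    # expressed as the probability of the more likely class.
    confidence = max(probability, 1.0 - probability)
    if confidence >= auto_threshold:
        return Route.AUTOMATED
    return Route.PHYSICIAN_REVIEW


print(route_prediction(0.99, mode="autonomous"))  # Route.AUTOMATED
print(route_prediction(0.60, mode="autonomous"))  # Route.PHYSICIAN_REVIEW
print(route_prediction(0.99, mode="augmented"))   # Route.PHYSICIAN_REVIEW
```

Even in the autonomous mode, low-confidence predictions fall back to physician review, which keeps the two designs on a continuum instead of treating them as a strict either/or.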

AI Explainability

Doctors would like to know which attributes, and which of their values, a prediction is based on. The solution design therefore has to make a trade-off: use a complex algorithm whose predictions are more accurate but cannot easily be explained in terms of the input attributes, or use a simpler algorithm with lower model performance whose predictions can be explained.
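To show what an explainable prediction can look like, here is a small sketch of a logistic regression score broken down into per-attribute contributions. The feature names, `WEIGHTS` and `BIAS` are invented for illustration and do not come from any real model.

```python
import math

# Hypothetical weights of an already-trained logistic regression model.
WEIGHTS = {"age_decades": 0.30, "systolic_bp_scaled": 0.80, "smoker": 1.10}
BIAS = -2.0


def predict_with_explanation(features: dict):
    """Return (probability, contributions ranked by absolute size).

    In a linear model each attribute contributes weight * value to the
    log-odds, so the prediction can be decomposed attribute by attribute.
    """
    contributions = {name: WEIGHTS[name] * features[name] for name in WEIGHTS}
    logit = BIAS + sum(contributions.values())
    probability = 1.0 / (1.0 + math.exp(-logit))
    ranked = sorted(contributions.items(), key=lambda kv: abs(kv[1]), reverse=True)
    return probability, ranked


patient = {"age_decades": 6.0, "systolic_bp_scaled": 1.5, "smoker": 1}
probability, ranked = predict_with_explanation(patient)
print(round(probability, 2))
for name, contribution in ranked:
    print(f"{name}: {contribution:+.2f}")
```

A deep learning model offers no such direct decomposition, which is the trade-off described above.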

Apart from explainability at the level of individual predictions, AI explainability also includes selecting appropriate metrics that relate model performance to the solution outcomes.
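For a diagnostic model, for example, overall accuracy may say less about outcomes than sensitivity (how many sick patients are caught) and specificity (how many healthy patients are correctly cleared). The sketch below computes both from binary labels; the function names and the sample data are made up for illustration.

```python
def confusion_counts(y_true, y_pred):
    """Count true/false positives and negatives for binary labels (1 = disease)."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    return tp, tn, fp, fn


def sensitivity(y_true, y_pred):
    """Share of actual positives that the model detects."""
    tp, _, _, fn = confusion_counts(y_true, y_pred)
    return tp / (tp + fn) if (tp + fn) else 0.0


def specificity(y_true, y_pred):
    """Share of actual negatives that the model correctly clears."""
    _, tn, fp, _ = confusion_counts(y_true, y_pred)
    return tn / (tn + fp) if (tn + fp) else 0.0


y_true = [1, 1, 1, 0, 0, 0, 0, 1]
y_pred = [1, 0, 1, 0, 0, 1, 0, 1]
print(sensitivity(y_true, y_pred))  # 0.75
print(specificity(y_true, y_pred))  # 0.75
```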

Ethical AI

Ethical AI challenges to consider when designing a Telemedicine AI solution include the following:

- Traceability risks: When an incorrect prediction leads to a dispute, who will be held accountable? Will it be the AI application, the doctors, or the hospital?

- Normative risks: Incorrect predictions can cause downstream applications to behave differently than intended, leading to possible conflicts. These related risks need to be watched for.

- Epistemic risks: The model needs to be built so that its performance is good enough to avoid inconclusive outcomes in the first place.

AI Governance

Because the models must remain highly performant at all times, strong AI governance practices need to be put in place, including the following:

- Test, Test, Test: The model needs to be tested at regular intervals with different kinds of data, including adversarial datasets, to assess its performance. Data on which the model does not perform well should be included when retraining the model.

- Model monitoring: Model performance needs to be monitored at regular intervals (daily, weekly or monthly) depending on the inflow of data, changes in data distribution, etc.

- Model retraining: Depending on the model performance, the model may need to be retrained, in which case some of the following can happen:

  - One or more new features may get included

  - One or more new models may get included

  - Machine learning algorithm may change

  - Hyper-parameters may get tuned
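The monitoring and retraining steps above can be sketched as a simple check that compares accuracy in recent time windows against a baseline. The function names, the 0.05 tolerance and the weekly data are hypothetical. A production setup would typically also track changes in the input data distribution, which this sketch does not cover.

```python
def accuracy(y_true, y_pred):
    """Fraction of predictions that match the true labels."""
    if len(y_true) != len(y_pred) or not y_true:
        raise ValueError("inputs must be non-empty and of equal length")
    correct = sum(1 for t, p in zip(y_true, y_pred) if t == p)
    return correct / len(y_true)


def windows_needing_retraining(windows, baseline_accuracy, tolerance=0.05):
    """Return labels of windows whose accuracy fell below baseline - tolerance.

    windows: dict mapping a window label to a (y_true, y_pred) pair.
    """
    flagged = []
    for label, (y_true, y_pred) in windows.items():
        if accuracy(y_true, y_pred) < baseline_accuracy - tolerance:
            flagged.append(label)
    return flagged


weekly = {
    "week-1": ([1, 0, 1, 1], [1, 0, 1, 1]),  # all predictions correct
    "week-2": ([1, 0, 1, 0], [1, 0, 0, 1]),  # half of the predictions correct
}
print(windows_needing_retraining(weekly, baseline_accuracy=0.9))  # ['week-2']
```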

Data Preparation

Data preparation is key when building models to meet telemedicine requirements. It includes the following aspects:

- Data gathering

- Data cleansing

- Data annotation
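As an example of the data cleansing step, the sketch below separates usable records from those with missing or implausible values. The field names, the plausibility ranges and the `clean_records` function are assumptions made for illustration only.

```python
def clean_records(records, required_fields, plausible_ranges):
    """Split records into (cleaned, rejected).

    A record is rejected if a required field is missing or if a value lies
    outside its plausible range. Rejected entries keep the list of problem
    fields so they can be reviewed or corrected instead of silently dropped.
    """
    cleaned, rejected = [], []
    for record in records:
        missing = [f for f in required_fields if record.get(f) is None]
        implausible = [
            f for f, (low, high) in plausible_ranges.items()
            if record.get(f) is not None and not (low <= record[f] <= high)
        ]
        problems = missing + implausible
        if problems:
            rejected.append((record, problems))
        else:
            cleaned.append(record)
    return cleaned, rejected


records = [
    {"patient_id": "p1", "pulse_rate": 72, "temperature_c": 36.8},
    {"patient_id": "p2", "pulse_rate": None, "temperature_c": 37.1},  # missing value
    {"patient_id": "p3", "pulse_rate": 700, "temperature_c": 36.5},   # likely a typo
]
# Hypothetical plausibility limits, used to catch data-entry errors.
ranges = {"pulse_rate": (20, 250), "temperature_c": (30.0, 45.0)}

cleaned, rejected = clean_records(records, ["patient_id", "pulse_rate"], ranges)
print([r["patient_id"] for r in cleaned])                 # ['p1']
print([(r["patient_id"], why) for r, why in rejected])
```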

Data Security

Data security is one of the most important challenges when building models for healthcare requirements. Patient data is sensitive, and compliance requirements and regulations are in place to keep it safe. Some of the following data security controls would need to be put in place:

- Controlled access to data for internal stakeholders, including data scientists

- No access to data by external sources unless compliance requirements are met.

- Data security requirements for data at rest and in transit need to be met.
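One technique that can support controlled access for data scientists is to pseudonymize records before they leave the clinical system. This is an illustrative addition, not something the controls above prescribe, and it does not by itself satisfy any regulation. The sketch uses Python's standard `hmac` and `hashlib` modules. The field names, the `deidentify` helper and the hard-coded key are hypothetical, and a real key would come from a secrets manager.

```python
import hashlib
import hmac


def pseudonymize_id(patient_id: str, secret_key: bytes) -> str:
    """Replace a patient ID with a keyed hash that is stable for a given key."""
    return hmac.new(secret_key, patient_id.encode("utf-8"), hashlib.sha256).hexdigest()


def deidentify(record: dict, secret_key: bytes,
               direct_identifiers=("name", "phone", "address")) -> dict:
    """Drop direct identifiers and swap the patient ID for a pseudonym."""
    safe = {
        key: value for key, value in record.items()
        if key not in direct_identifiers and key != "patient_id"
    }
    safe["patient_ref"] = pseudonymize_id(record["patient_id"], secret_key)
    return safe


# Assumption: in practice the key is loaded from a secrets manager, never hard-coded.
key = b"example-key-for-illustration-only"
record = {"patient_id": "p1", "name": "Jane Doe", "phone": "555-0100", "spo2": 97}
safe_record = deidentify(record, key)
print(sorted(safe_record))  # ['patient_ref', 'spo2']
```

Because the hash is keyed, the same patient maps to the same reference across datasets, while anyone without the key cannot recompute the mapping from a known ID.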

Compliance & Regulations

One of the key compliance-related issues when dealing with machine learning models is change control. When new models are ready to be moved into production, compliance and regulatory requirements call for several aspects of the change to be documented and approved by a change / risk control board. Doing this for machine learning models is tricky, because they are a different beast from regular software development.
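A lightweight way to support such a process is to capture each model change as a structured record that blocks deployment until it is approved. The sketch below is only an assumption about what such a record might contain. The `ModelChangeRecord` class, its fields and the example values are hypothetical and not taken from any regulation.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class ModelChangeRecord:
    model_name: str
    previous_version: str
    new_version: str
    changes: List[str]             # e.g. new features, new algorithm, tuned hyper-parameters
    validation_metrics: dict       # metric name -> value on the agreed validation set
    approved_by: Optional[str] = None

    def approve(self, approver: str) -> None:
        """Record sign-off by the change / risk control board."""
        if not approver:
            raise ValueError("approver must be named")
        self.approved_by = approver

    def can_deploy(self) -> bool:
        """Deployment requires documented changes, metrics and an approval."""
        return bool(self.changes) and bool(self.validation_metrics) and self.approved_by is not None


change = ModelChangeRecord(
    model_name="risk-classifier",
    previous_version="1.3.0",
    new_version="1.4.0",
    changes=["added respiratory_rate feature", "re-tuned hyper-parameters"],
    validation_metrics={"sensitivity": 0.91, "specificity": 0.88},  # made-up values
)
print(change.can_deploy())  # False
change.approve("change-control-board")
print(change.can_deploy())  # True
```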

Cloud-native Design

Finally, to meet telemedicine requirements, applications need a cloud-native design that allows some parts of the application to be deployed in the cloud and other parts on-premise. The idea is that the solution design needs to support a hybrid-cloud architecture for both applications and data.
