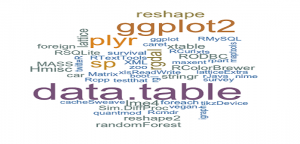

This article presents a list of about 60 commonly used R packages that help with some of the following objectives in data science and analytics projects:

- Predictive modeling

- Data handling/manipulation

- Visualization

- Integration

- Hadoop

- GUI

- Database

60 Most Commonly Used R Packages

The following 60 or so R packages take care of different aspects of building predictive models:

- Predictive Modeling: Represents packages that help in working with various predictive models (linear, multivariate and logistic regression models, SVMs, neural networks etc.).

- caret: Stands for Classification And REgression Training. Depends on a number of other packages, which it loads on demand, and provides a set of functions for some of the following tasks in classification and regression problems:

- Data processing (splitting)

- Feature selection

- Model tuning by evaluating tuning parameters through resampling

- Predictor variable importance estimation

- Model performance estimation from the training set

- lars: Implements the Least Angle Regression algorithm, which estimates the most appropriate predictor variables and their coefficients.

- gbm: Stands for Generalized Boosted Regression Models. Based on decision trees, the gbm package provides methods for solving regression and classification problems. It uses boosting, in which multiple weak models are combined in sequence to create a better model.

- zoo: Provides methods for working with regular and, especially, irregular time series.

- glmnet: Provides methods for fitting linear, logistic, multinomial and Poisson regression models and the Cox model. It is based on lasso and elastic-net regularization, which select the most appropriate coefficients by shrinking them, eliminating those of correlated and redundant predictors.

- lme4: Provides functions to fit and analyze linear mixed models, generalized linear mixed models, and nonlinear mixed models. A mixed model is a statistical model containing both fixed effects and random effects, hence the name mixed effects. Simply put, an ordinary linear regression model depends on a set of predictor variables (with fixed effects) and a single all-inclusive error term that absorbs every random effect. In a linear mixed model, this error term is expanded into one or more explicit random-effect terms plus a residual error.

- forecast: Provides methods for displaying and analysing univariate time series forecasts.

- quantmod: A rapid prototyping environment, where quant traders can quickly and cleanly explore and build trading models. In other words, it helps in trading, building and analysing quantitative financial trading strategies.

- randomForest: Provides methods for working with classification and regression problems, based on the random forests algorithm. The algorithm grows a large number of trees on bootstrapped samples of the data, considering a random subset of the variables at each split, runs each case through every tree in the forest, and decides the final outcome by averaging (regression) or majority vote (classification).

- e1071: Provides methods to work with regression and classification problems. Its functions cover algorithms such as the following:

- Support Vector Machines (SVM)

- naïve Bayes classifier

- Bagged clustering

- Short time Fourier transform

- gam: Stands for Generalized Additive Models. Provides functions for working with generalized additive models.

- nnet: Provides methods to work with Feed-forward Neural Networks and Multinomial Log-Linear Models.

- stats: The statistics package that ships with the base installation of R. It provides standard statistical functions and models such as lm and glm.
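To make the fixed versus random effects distinction from the lme4 entry concrete, here is a minimal sketch. It assumes lme4 is installed and uses the `sleepstudy` data set that ships with it; the choice of a random intercept per subject is an illustrative assumption, not a recommendation for this data.

```r
library(lme4)

# sleepstudy ships with lme4: reaction times for 18 subjects over 10 days.
# Fixed effect:  Days          (one slope shared by all subjects)
# Random effect: (1 | Subject) (each subject gets its own intercept)
fit <- lmer(Reaction ~ Days + (1 | Subject), data = sleepstudy)

print(fixef(fit))    # population-level intercept and slope
print(VarCorr(fit))  # spread of the subject intercepts and of the residual
```

In an ordinary `lm(Reaction ~ Days)` fit, the between-subject variation would be folded into the single error term; here it is estimated as a separate component.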

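The caret tasks listed earlier (splitting, resampling-based evaluation, variable importance, performance estimation) could be strung together roughly as follows. This is a sketch under assumptions: it uses the built-in `mtcars` data, an arbitrary 80/20 split, 5-fold cross-validation, and a plain linear model (`method = "lm"`), none of which come from the article.

```r
library(caret)

set.seed(42)  # make the random split and the resampling reproducible

# Data splitting: keep roughly 80% of the rows for training
train_idx <- createDataPartition(mtcars$mpg, p = 0.8, list = FALSE)
training  <- mtcars[train_idx, ]
testing   <- mtcars[-train_idx, ]

# Resampling scheme: 5-fold cross-validation on the training rows
ctrl <- trainControl(method = "cv", number = 5)

# Fit a linear regression through caret's train() interface
fit <- train(mpg ~ wt + hp + disp, data = training,
             method = "lm", trControl = ctrl)

print(fit$results)  # cross-validated performance estimates
print(varImp(fit))  # predictor variable importance

# Performance on the held-out rows
pred <- predict(fit, newdata = testing)
print(postResample(pred = pred, obs = testing$mpg))
```

Swapping `method = "lm"` for another supported model name is how caret pulls in the underlying modeling package on demand.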

- Data Handling/Manipulation: Represents packages for data handling and manipulation.

- dplyr: One of the best tools for data manipulation, dplyr provides methods to perform different data manipulation operations with data frames and databases

- reshape2: Provides two families of functions, melt and cast, to transform data between wide and long formats:

- melt: Converts wide-format data to long-format data

- cast (dcast for data frames, acast for arrays): Converts long-format data to wide-format data

- sqldf: Runs SQL SELECT statements against data frames. A great resource for RDBMS professionals wanting to work with R.

- lubridate: Provides methods for date and time manipulation.

- stringr: Provides methods for string manipulation, including operations for length, replace, extract, match, order etc.

- XML: Provides methods for both reading and creating XML (and HTML) documents (including DTDs), both local and accessible via HTTP or FTP

- data.table: Provides functions for faster aggregation of large data sets, faster addition/update/delete of columns of data, listing columns, reading data from files

- caTools: Provides utility functions for data processing including activities such as reading/writing binary files such as GIF/ENVI, base64 encoder/decoder etc.

- outliers: Provides methods/tests for detecting outliers.

- extremevalues: Provides methods for detecting outliers in a dataset. Also comes with a GUI tool that displays the plots.

- Hmisc: Provides several functions for data analysis, utility operations, string manipulation, computing sample size and power, variable clustering etc.

- RevoScaleR: Provides methods to work with large datasets. Includes operations for reading and manipulating large data sets, cleaning them, and preparing them for statistical analysis with R

- tidyr: Provides functions to tidy messy data. Following are three key functions:

- gather: Collapses multiple columns into key-value pairs (wide to long)

- separate: Splits one column into several

- spread: Spreads a key-value pair across multiple columns (long to wide)

- foreach: Provides looping constructs for executing R code repeatedly. Its main selling point is support for parallel execution of the repeated operations, either on multiple cores of the same system or on multiple nodes of a cluster.

- Sweave: Provides a framework for mixing text and R code for dynamic report generation, such that the reports are updated automatically if the data or analysis change. It ships with R as part of the utils package.

- rggobi: Provides a command-line interface to GGobi, an interactive and dynamic graphics package.
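The three tidyr functions named above can be seen working together in a small sketch. The `wide` data frame and its column names are hypothetical, invented only for illustration. Note that `gather()` and `spread()` remain available in current tidyr releases, although newer versions recommend `pivot_longer()` and `pivot_wider()` instead.

```r
library(tidyr)

# Hypothetical wide data: one row per patient, one column per visit
wide <- data.frame(
  patient = c("A", "B"),
  visit_1 = c(5.1, 6.3),
  visit_2 = c(4.8, 6.0),
  stringsAsFactors = FALSE
)

# gather(): wide -> long, one row per patient/visit combination
long <- gather(wide, key = "measure", value = "score", visit_1, visit_2)

# separate(): split "visit_1" into two columns, "kind" and "number"
long_split <- separate(long, measure, into = c("kind", "number"), sep = "_")

# spread(): long -> wide again, one column per visit number
wide_again <- spread(long_split, key = number, value = score)

print(long)
print(long_split)
print(wide_again)
```

The reshape2 functions `melt()` and `dcast()` cover the same wide-to-long and long-to-wide moves with a different interface.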

- Visualization: Represents packages used for visualization.

- ggplot2: One of the best tools for data visualization, ggplot2 could be used to create plots, layer-by-layer, using data from different data sources.

- knitr: An alternative to Sweave, knitr provides methods for dynamic report generation.

- igraph: A network analysis and visualization tool, igraph provides methods for working with regular and large graphs.

- manipulate: Provides interactive plotting functions for use within RStudio.

- RColorBrewer: Provides methods for creating color palettes for thematic maps.

- lattice: A high-level data visualization package with an emphasis on multivariate data. It is designed as an improvement on base R graphics.

- rCharts: Package to create, customize and publish JavaScript visualizations from R using a familiar lattice-style plotting interface.

- googleVis: Provides methods to interface with the Google Charts API and create interactive charts based on data frames.

- colorspace: Provides methods to create and use HCL (Hue-Chroma-Luminance) color palettes in R.

- scales: Provides methods for some of the following:

- Map data to aesthetics

- Automatically determine breaks and labels for axes and legends

- playwith: A GUI for editing and interacting with R plots
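As an illustration of what building a ggplot2 plot layer by layer looks like, here is a minimal sketch using the built-in `mtcars` data. The chosen variables, the linear trend line and the axis labels are assumptions made for the example.

```r
library(ggplot2)

# Start with the data and the aesthetic mapping, then add one layer at a time
p <- ggplot(mtcars, aes(x = wt, y = mpg)) +
  geom_point(aes(colour = factor(cyl))) +                   # layer 1: raw observations
  geom_smooth(method = "lm", formula = y ~ x, se = FALSE) + # layer 2: linear trend line
  labs(x = "Weight (1000 lbs)", y = "Miles per gallon", colour = "Cylinders")

print(p)  # renders the plot on the active graphics device
```

Because the plot is an ordinary R object, further layers or a different theme can be added to `p` later with `+`.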

- Hadoop: Represents packages that help to connect to the Hadoop ecosystem and process its data.

- RHadoop: A collection of five R packages which allow users to manage and analyze the data with Hadoop. Following are those five packages:

- rmr: functions for map reduce operations

- rhdfs: functions for file management of HDFS

- rhbase: functions for database management for HBase

- ravro: functions for reading and writing files in AVRO format

- plyrmr: functions for plyr like data processing for structured data.

- RImpala: Provides methods that connect Cloudera Impala to R, enabling data residing in HDFS and Apache HBase to be queried from R and then processed further as R objects using R functions.


- Integration: Represents packages that connect R with popular social networks such as Twitter and Facebook. Also listed is the PMML package, which represents data mining models in XML format so that they can be shared between different statistical software packages.

- twitteR: Provides an interface to the Twitter web API.

- Rfacebook: Provides a series of functions for accessing the Facebook API to retrieve information such as the following:

- Users

- Posts

- Status updates with specific keywords

- PMML: Stands for Predictive Model Markup Language. An XML-based language, PMML provides an open standard for representing data mining models. It facilitates the export of predictive and descriptive models in XML format, which can then be shared between different PMML-compliant applications.

- foreign: Provides functions which could be used to import data files from some of the most commonly used statistical software packages such as SAS, SPSS, Stata etc.

- Application Programming

- shiny: Helps build responsive and interactive web applications using R.

- slidify: Provides methods to create, customize and share HTML5 documents using R markdown

- proto: Facilitates prototype-style programming in R. Simply speaking, prototype programming is a type of object-oriented programming in which there are no classes. With proto package, one could organize data and procedures without classes.

- rJava: Low-level R to Java interface.

- GUI

- rattle: GUI for data mining. Makes it easy to do some of the following:

- Load data from a CSV file or database

- Exploratory data analysis (transform & explore)

- Build and evaluate models

- Export models as PMML

- Rcmdr: Meant for beginners/novices, Rcmdr package provides GUI which enables access to a selection of commonly-used R commands.


- Database

- RMySQL: Provides methods to access data from a MySQL database.

- RPostgreSQL: Provides methods to access data from a PostgreSQL database. The package provides a DBI-compliant driver for PostgreSQL database systems.

- RMongo: Provides methods that allow access to a MongoDB database.

- RSQLite: Embeds the SQLite database in R. Provides methods to work with this database.
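A minimal sketch of the database workflow, assuming the DBI and RSQLite packages are installed. It uses an in-memory SQLite database and the built-in `mtcars` data; the table name `cars` and the query are made up for illustration. Other DBI-compliant drivers generally follow the same pattern, with mainly the `dbConnect()` arguments differing.

```r
library(DBI)

# In-memory SQLite database; nothing is written to disk
con <- dbConnect(RSQLite::SQLite(), ":memory:")

# Copy a built-in data frame into a table
dbWriteTable(con, "cars", mtcars)

# Run SQL and get an ordinary data frame back
heavy <- dbGetQuery(con, "SELECT cyl, COUNT(*) AS n, AVG(mpg) AS avg_mpg
                          FROM cars WHERE wt > 3 GROUP BY cyl ORDER BY cyl")
print(heavy)

dbDisconnect(con)  # release the connection when done
```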

- Miscellaneous

- digest: Provides methods to achieve some of the following objectives:

- Create hash function digests for arbitrary objects (digest)

- Create AES block cipher object (AES)

- Compute hash-based message authentication code (hmac)

- DMwR: Stands for Data Mining with R. Includes functions and data accompanying the book “Data Mining with R: Learning with Case Studies”.

- fortunes: Contains a collection of humorous quotes and comments from different sources.

- magrittr: Provides the forward pipe operator (%>%) for chaining commands: the operator forwards the value on its left into the function call on its right.

- multicore: Provides functions for parallel execution of R code on machines with multiple cores or CPUs

- doParallel: Maintained by Revolution Analytics, doParallel provides a parallel backend for the foreach %dopar% function using the parallel package of R 2.14.0 and later.
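The foreach and doParallel packages are typically used together. The following sketch assumes both are installed; the two worker processes and the toy computation are arbitrary choices for the example.

```r
library(foreach)
library(doParallel)  # also attaches the parallel package

# Register a parallel backend with 2 worker processes (an arbitrary choice)
cl <- makeCluster(2)
registerDoParallel(cl)

# Each iteration runs on a worker; .combine = c collects the results
# into a single vector, in iteration order
squares <- foreach(i = 1:8, .combine = c) %dopar% {
  i^2
}
print(squares)

stopCluster(cl)  # release the workers when done
```

Replacing `%dopar%` with `%do%` runs the same loop sequentially, which is handy for debugging.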

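To show what forwarding a value into the next function means for the magrittr pipe, here is a small sketch comparing a nested call with its piped equivalent. The vector `x` is made up for the example.

```r
library(magrittr)

x <- c(1, 4, 9, 16)

# Nested call: has to be read inside-out
nested <- round(mean(sqrt(x)), 1)

# Piped call: reads left to right; each result becomes the first
# argument of the next function
piped <- x %>% sqrt() %>% mean() %>% round(1)

print(identical(nested, piped))
```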

