What is explainable AI (XAI)? More people are asking this question as they become aware of the potential implications of artificial intelligence. Simply put, explainable AI refers to AI systems whose decisions can be understood by humans: the system provides an explanation for its decisions and actions, so people can see how a machine arrived at a given outcome. This helps people trust and understand what is happening, instead of feeling that their information is being taken advantage of or used without their permission. This matters because many people are concerned about the increasing use of AI in our lives, especially in high-stakes areas such as healthcare. We need to be able to trust these systems if we are going to rely on them. In this blog post, we will discuss explainable AI concepts and give examples of how it is being used today.

What is Explainable AI and how does it work?

Explainable AI refers to AI systems that can explain the reasoning behind their predictions. It is closely tied to the broader concept of interpretability, which allows us to understand what a model has learned, what other information it can offer, and why it makes the judgments it does in the context of the real-world problem we are trying to solve. Interpretability becomes necessary when model metrics such as accuracy are insufficient on their own to decide whether a model can be trusted. By comparing test conditions with the training environment, interpretability also helps us anticipate how a model will perform under conditions it was not trained on.

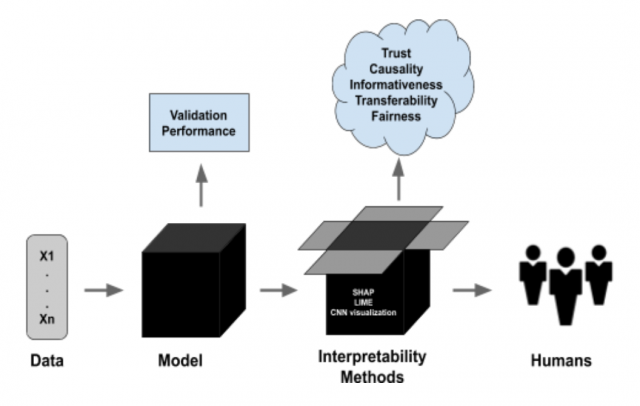

Explainable AI improves the transparency, trustworthiness, fairness, and accountability of AI systems. It is helpful when trying to understand the reasoning behind a particular prediction or decision made by a machine learning model. The picture below shows where explainability fits into the machine learning workflow.

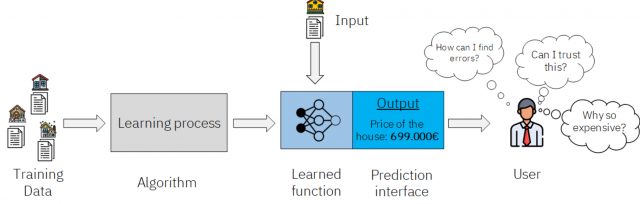

This form of AI has become important as more stakeholders question the predictions made by AI systems: they want to know how a prediction was made before relying on it to make decisions. The picture below represents the need for explainable AI.

Explainable AI systems can also help in situations involving accountability, such as autonomous vehicles: if something goes wrong, humans are still accountable for the system's actions, and explanations help them understand what happened. Explainable AI draws on explainability techniques that describe the reasoning behind a model's prediction in human-readable form, for example as feature attributions, visualizations, or textual descriptions. Today, explainability techniques are used in many areas of artificial intelligence, such as natural language processing (NLP), computer vision, medical imaging, and health informatics. Universities where explainable AI research is being carried out include MIT, Carnegie Mellon University, and Harvard.

The key difference between conventional AI and explainable AI is that explainable AI provides explanations for its decisions. The explainability techniques it uses are heavily influenced by how humans make inferences and form conclusions; explainable AI systems try to replicate those patterns so that their explanations are meaningful to people.

Explainable AI techniques can explain the decisions of a machine learning model trained with almost any algorithm, such as linear regression, decision trees, or random forests. This kind of model-agnostic explanation is one of the most common explainability approaches in practice, and many tools provide it. For example, SHAP (SHapley Additive exPlanations) and LIME are explainability tools that explain the decisions of a machine learning model using local interpretations, that is, explanations of individual predictions. Here is a brief introduction to how these tools work:

- LIME: LIME stands for Local Interpretable Model-Agnostic Explanations. It is an open-source explainer developed by researchers at the University of Washington. It can be used to explain the predictions of any machine learning model and has been used to explain everything from credit scoring models to self-driving cars. LIME works by perturbing the input data and observing how this affects the model's output. Given an example x, LIME fits an interpretable model (such as a sparse linear model) that approximates the output of the original model f in a neighborhood surrounding x.

- SHAP (SHapley Additive exPlanations): The SHAP framework is based on game theory, specifically Shapley values from cooperative game theory. It connects optimal credit allocation with local explanations, using the classic Shapley values from game theory and their related extensions.
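The LIME procedure described above (perturb the input, query the model, weight samples by proximity, fit a simple surrogate) can be sketched in a few lines of NumPy. This is a simplified illustration, not the `lime` package's implementation: the Gaussian sampling, the `kernel_width` value, and the toy `black_box` function are all assumptions chosen to keep the example self-contained.

```python
import numpy as np

def black_box(Z):
    """Hypothetical nonlinear model standing in for f: maps an (n, 2) array
    to n scores. Any callable with that shape contract would work."""
    return np.sin(Z[:, 0]) + Z[:, 1] ** 2

def lime_explain(f, x, num_samples=5000, sigma=0.5, kernel_width=0.75, seed=0):
    """Simplified LIME for tabular data: fit a proximity-weighted linear
    surrogate g(z) = intercept + coef . z around the instance x."""
    rng = np.random.default_rng(seed)
    # 1. Perturb: draw samples from a Gaussian neighborhood around x.
    Z = x + rng.normal(scale=sigma, size=(num_samples, x.shape[0]))
    # 2. Query the black-box model on the perturbed samples.
    y = f(Z)
    # 3. Weight each sample by its closeness to x (exponential kernel).
    dist = np.linalg.norm(Z - x, axis=1)
    w = np.exp(-(dist ** 2) / kernel_width ** 2)
    # 4. Weighted least squares: scale rows by sqrt(w), then solve.
    A = np.hstack([np.ones((num_samples, 1)), Z])
    sw = np.sqrt(w)
    params, *_ = np.linalg.lstsq(A * sw[:, None], y * sw, rcond=None)
    return params[0], params[1:]

x = np.array([0.0, 1.0])
intercept, coef = lime_explain(black_box, x)
# The true local gradient at x is (cos 0, 2 * 1) = (1, 2); the surrogate's
# coefficients should land close to it (slightly below 1 for the sin term).
print(coef)
```

The surrogate's coefficients are the explanation: they say how much each feature moves the prediction in the neighborhood of this one instance, even though the black box itself is nonlinear.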

Explainable AI systems can be built using different techniques that explain the data, the predictions, or the decisions made by an AI algorithm. Some common explainability techniques include:

- Decomposition (breaking down a model into individual components)

- Visualization (building explainability features to explain model predictions in a visual way)

- Explanation Mining (using machine learning techniques to find relevant data that explains the prediction of an artificial intelligence algorithm)
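As one concrete instance of decomposition, a decision tree's prediction can be split into a bias term (the mean value at the root) plus one contribution per feature, by recording how the node mean changes at each split along the decision path. This is the path-based approach implemented by the `treeinterpreter` package; the tree below is hand-built with hypothetical numbers, and `decompose` is an illustrative helper, not a library function.

```python
# Each internal node stores the feature and threshold it splits on plus the
# mean target value of the training samples reaching it; leaves store only
# "value". All numbers here are hypothetical.
TREE = {
    "value": 20.0, "feature": 0, "threshold": 5.0,
    "left": {"value": 15.0, "feature": 1, "threshold": 2.0,
             "left": {"value": 12.0},
             "right": {"value": 18.0}},
    "right": {"value": 30.0},
}

def decompose(tree, x):
    """Split a tree prediction into bias + per-feature contributions.

    Returns (prediction, bias, contributions) where
    prediction == bias + sum(contributions.values()).
    """
    node = tree
    bias = node["value"]
    contributions = {}
    while "feature" in node:
        f = node["feature"]
        child = node["left"] if x[f] <= node["threshold"] else node["right"]
        # Credit the change in node mean to the feature used for this split.
        contributions[f] = contributions.get(f, 0.0) + child["value"] - node["value"]
        node = child
    return node["value"], bias, contributions

prediction, bias, contributions = decompose(TREE, [3.0, 4.0])
print(prediction, bias, contributions)  # → 18.0 20.0 {0: -5.0, 1: 3.0}
```

Reading the result: starting from the average of 20, feature 0 pulled the prediction down by 5 and feature 1 pushed it up by 3, which together account exactly for the prediction of 18.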

Benefits & Challenges of Explainable AI

Explainable AI forms a key aspect of ethical AI. Here are the key benefits of using explainable AI:

- Explainable AI is desired in use cases involving accountability. For example, explainable AI could help create autonomous vehicles that are able to explain their decisions in the case of an accident.

- Explainable AI is critical for fairness and transparency in scenarios involving sensitive information or data (e.g., healthcare)

- Enhanced trust between humans and machines

- Higher visibility into model decision-making process (which helps with transparency)

Here are some challenges related to explainable AI:

- Explainable AI is a relatively new area of research, and explainable models still present many open challenges. One is that explainability can come at the expense of accuracy: inherently interpretable models often perform worse than complex black-box models on some tasks.

- One of the key challenges in Explainable AI is how to generate explanations that are both accurate and understandable.

- Another key challenge is that explainable AI models can be more difficult to train and tune than non-explainable machine learning models.

- Another challenge is that explainable AI systems can be more difficult to deploy, since explainability features typically require some level of human-in-the-loop intervention.

Why is Explainable AI important for the future?

AI has downsides, such as bias and unfairness, that are likely to create trust issues with AI in the years to come. Explainable AI is one way of mitigating these challenges. This is why explainability techniques are gaining momentum fast: they are likely to lead to better human-machine interaction, more responsible technologies (such as autonomous vehicles), and increased trust between humans and machines. Explaining the decisions made by AI systems provides transparency into how a model arrives at its decision. For example, explainable AI could be used to explain an autonomous vehicle's reasoning for not stopping or slowing down before hitting a pedestrian crossing the street.

Explainable AI is therefore an important part of the future of AI: because explainable models expose the reasoning behind their decisions, they increase understanding between humans and machines, which can help build trust in AI systems.

Examples of explainable AI in action

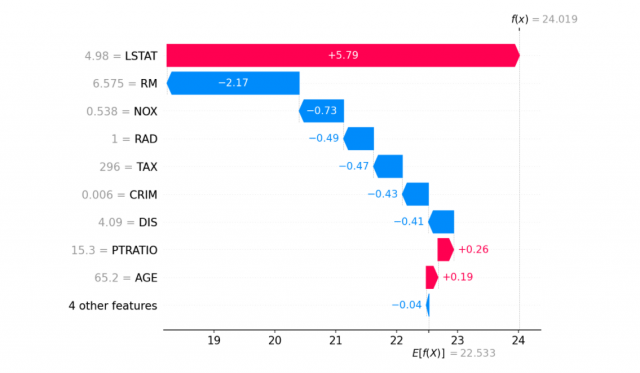

Here is an example of explaining a model prediction: a Boston housing price prediction model was trained using the XGBoost algorithm, and its predictions were explained using SHAP. The explanation shown above represents each feature's contribution to pushing the model output from the base value (the average model output over the training dataset passed to the explainer) to the final model output. Features pushing the prediction higher are shown in red; those pushing the prediction lower are shown in blue. Another way to visualize the same explanation is a force plot, a visualization introduced by the SHAP authors in a Nature Biomedical Engineering paper.
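The base value and per-feature contributions described above can be reproduced by brute force for a small model. The sketch below computes exact Shapley values by enumerating every coalition of features and filling in the "missing" features from a background dataset, so the base value is the average model output over that background. It is only an illustration under assumptions: `model` is a hypothetical toy function, not the XGBoost model in the figure, the background data is made up, and the SHAP library itself uses much faster algorithms (such as its tree-specific explainer) rather than this O(2^n) enumeration.

```python
from itertools import combinations
from math import factorial

import numpy as np

def model(X):
    """Hypothetical stand-in for a trained regressor, with an interaction
    between features 1 and 2."""
    X = np.atleast_2d(X)
    return 3.0 * X[:, 0] + 2.0 * X[:, 1] * X[:, 2]

def coalition_value(f, x, background, S):
    """v(S): average model output when the features in S are fixed to x and
    the remaining features are taken from each background row."""
    Z = background.copy()
    idx = list(S)
    if idx:
        Z[:, idx] = x[idx]
    return f(Z).mean()

def shapley_values(f, x, background):
    """Exact Shapley values by enumerating all coalitions."""
    n = len(x)
    phi = np.zeros(n)
    for i in range(n):
        others = [j for j in range(n) if j != i]
        for size in range(n):
            # Shapley weight |S|! (n - |S| - 1)! / n!
            w = factorial(size) * factorial(n - size - 1) / factorial(n)
            for S in combinations(others, size):
                phi[i] += w * (coalition_value(f, x, background, S + (i,))
                               - coalition_value(f, x, background, S))
    base_value = f(background).mean()  # average output over the background
    return base_value, phi

background = np.array([[0.0, 1.0, 1.0],
                       [2.0, 0.0, 3.0],
                       [1.0, 2.0, 2.0]])
x = np.array([2.0, 2.0, 3.0])
base_value, phi = shapley_values(model, x, background)
print(f"base value: {base_value:.3f}")
for name, p in zip(["f0", "f1", "f2"], phi):
    color = "red, pushes up" if p > 0 else "blue, pushes down"
    print(f"{name}: {p:+.3f} ({color})")
print(f"model output: {base_value + phi.sum():.3f}")
```

Because the value of the full coalition is the model output and the value of the empty coalition is the base value, the contributions always add up exactly to the difference between them. That additivity is what the waterfall and force plots display.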

Here are some use cases where explainable AI can be used:

- Healthcare: When AI is used to diagnose patients, explainable AI can explain the basis for each diagnosis. This helps doctors explain their diagnosis to patients and how a treatment plan is going to help, which builds trust between patients and their doctors while mitigating potential ethical issues. One example where AI predictions could come with explanations is diagnosing patients with pneumonia; another is using medical imaging data to diagnose cancer.

- Manufacturing: Explainable AI could be used to explain why an assembly line is not working properly and how it needs to be adjusted over time. This supports better machine-to-machine communication and understanding, and gives humans greater situational awareness of how the machines are operating.

- Defense: Explainable AI can be useful in military training applications to explain the reasoning behind a decision made by an AI system (e.g., an autonomous vehicle). This helps address potential ethical challenges, such as understanding why a system misidentified an object or did not fire on a target.

- Autonomous vehicles: Explainable AI is becoming increasingly important in the automotive industry because of highly publicized accidents involving autonomous vehicles (such as Uber's fatal crash with a pedestrian). These events have placed an emphasis on explainability techniques for AI algorithms, especially in use cases that involve safety-critical decisions. For autonomous vehicles, explainability provides increased situational awareness in accidents or unexpected situations, which could lead to more responsible operation of the technology (e.g., preventing crashes).

- Loan approvals: Explainable AI can be used to explain why a loan was approved or denied. This helps mitigate potential ethical challenges by increasing understanding between humans and machines, which builds greater trust in AI systems.

- Resume screening: Explainable AI could be used to explain why a resume was or was not selected. This increases understanding between humans and machines, which builds trust in AI systems while mitigating issues related to bias and unfairness.

- Fraud detection: Explainable AI is important for fraud detection in financial services. This can be used to explain why a transaction was flagged as suspicious or legitimate, which helps mitigate potential ethical challenges associated with unfair bias and discrimination issues when it comes to identifying fraudulent transactions.
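For the loan approval case above, one common way to turn a model into an explanation is to report "reason codes": the features that lowered an applicant's score the most. The sketch below assumes a hypothetical logistic regression model on standardized features, with made-up weights and feature names. For a linear model, each feature's contribution to the log-odds relative to a reference applicant is simply its weight times the difference from the reference.

```python
import math

# Hypothetical logistic-regression credit model on standardized features.
WEIGHTS = {"income": 1.2, "debt_ratio": -1.8, "late_payments": -0.9, "credit_age": 0.6}
INTERCEPT = 0.3

def approval_probability(applicant):
    """Logistic model: sigmoid of intercept + weighted feature sum."""
    z = INTERCEPT + sum(WEIGHTS[k] * applicant[k] for k in WEIGHTS)
    return 1.0 / (1.0 + math.exp(-z))

def reason_codes(applicant, reference, top_k=2):
    """Return the top_k features that lowered the log-odds the most
    relative to a reference applicant."""
    contrib = {k: WEIGHTS[k] * (applicant[k] - reference[k]) for k in WEIGHTS}
    negatives = sorted((v, k) for k, v in contrib.items() if v < 0)
    return [k for _, k in negatives[:top_k]]

reference = {k: 0.0 for k in WEIGHTS}  # the "average" standardized applicant
applicant = {"income": -0.5, "debt_ratio": 1.5, "late_payments": 2.0, "credit_age": 0.2}
print(f"approval probability: {approval_probability(applicant):.3f}")
print("main reasons for denial:", reason_codes(applicant, reference))
# → main reasons for denial: ['debt_ratio', 'late_payments']
```

The choice of reference matters: comparing against an average applicant answers "what made this applicant different", which is usually what a denied applicant wants to know.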

Some interview questions on Explainable AI

Here are some interview questions you should be aware of when preparing for AI/machine learning interviews:

- What is explainable AI? What is explainability in artificial intelligence?

- What are some explainable AI use cases?

- How can explainable AI be used for fraud detection?

- Why would someone want to explain the reasoning behind an autonomous vehicle’s decision-making process?

- Why would a company need explainable Artificial Intelligence solutions at all times, not just during reviews and audits?

- Why is explainable artificial intelligence important for trust? What ethical issues does explainable artificial intelligence address?

- What are some frameworks for explainable artificial intelligence?

- What are some explainable AI examples which involve medical imaging data for diagnosing cancer?

- Name at least three explainable artificial intelligence use cases.

If explainability is very important for your business decisions, explainable AI should be a key consideration in your analytics strategy. With explainable AI, you can provide transparency on how decisions are made by AI systems and help build trust between humans and machines. The use cases where explainable AI has been used include healthcare (diagnoses), manufacturing (assembly lines), and defense (military training). If these sound like areas of interest to you or if this content piqued your curiosity about explainable AI in general, we’d love to hear from you! We have experience consulting with organizations interested in incorporating explainability into their products/services – let us know what’s keeping you up at night so that we can offer our expertise to create an explainable artificial intelligence solution that’s right for your organization.

Kumar, A. (2022, March 28). What is Explainable AI? Concepts & Examples – Data Analytics. Data Analytics. https://vitalflux.com/what-is-explainable-ai-concepts-examples/#Benefits_Challenges_of_Explainable_A…