Have you ever wondered how AI models like OpenAI’s GPT-3 (Generative Pre-trained Transformer 3) can generate impressively human-like text? Much of that ability comes from in-context learning, which lets GPT-3 pick up a task from the prompt alone. In this blog, we’re going to learn the concept of in-context learning, its different forms, and how GPT-3 uses it to change the way we interact with AI.

What’s In-context Learning?

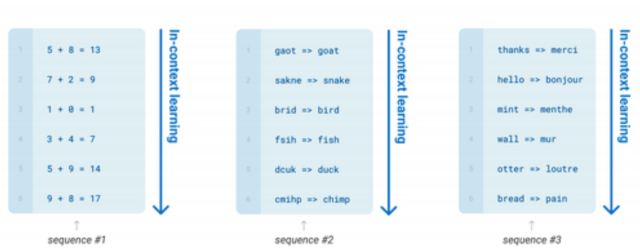

In-context learning is at the heart of large language models (LLMs). It enables GPT models to comprehend a task and create text that closely resembles human writing, based on the instructions and examples they’re provided in the prompt. Because the model picks up the task from the context given to it, without any update to its weights, it is called in-context learning. It’s like teaching students a new subject: you give them guidelines and examples, and they use this context to produce the correct answers. Now, imagine a machine doing the same. That’s exactly what OpenAI’s GPT-3 does. Through in-context learning, it not only interprets prompts but also generates apt responses.

Here is an example of in-context learning. You can find details in the Text Generation chapter of the book Natural Language Processing with Transformers.

There are three approaches to in-context learning. They are as follows:

- Few-shot

- One-shot

- Zero-shot

Few-shot Learning: Concept & Example

Few-shot learning is an approach in which a user includes several examples in the prompt to demonstrate the expected answer format and content. These examples provide a few instances of the task at hand, allowing the model to infer the pattern and make predictions based on that limited exposure. The number of examples can vary from two or three up to around 100, depending on the maximum input length allowed for a single prompt.
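The link between the number of examples and the maximum input length can be made concrete with a small sketch. It keeps adding examples to a prompt until a token budget is used up. The names `count_tokens` and `select_examples`, the `max_tokens` parameter, and the whitespace-based token count are all assumptions made for illustration; real models count tokens with their own tokenizer, so actual limits will differ.

```python
def count_tokens(text: str) -> int:
    """Rough stand-in for a tokenizer: counts whitespace-separated words (an assumption)."""
    return len(text.split())


def select_examples(examples: list[str], query: str, max_tokens: int) -> list[str]:
    """Keep examples, in order, while the examples plus the query fit within max_tokens."""
    budget = max_tokens - count_tokens(query)
    selected = []
    for example in examples:
        cost = count_tokens(example)
        if cost > budget:
            break  # the next example would overflow the prompt
        selected.append(example)
        budget -= cost
    return selected


examples = [
    "Lion: a large carnivorous mammal with a majestic mane.",
    "Dolphin: a highly intelligent marine mammal.",
    "Elephant: a massive herbivorous mammal with a long trunk.",
]
fitting = select_examples(examples, query="Penguin:", max_tokens=18)
print(len(fitting))  # only the first two examples fit in this toy budget
```

With a larger budget all three examples are kept; with a very small one, none are, and the prompt falls back to containing the query alone.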

Let’s consider an example to understand few-shot learning better. Suppose you have a language model trained on various topics and you want to use it to generate descriptions for different animals. In a few-shot learning scenario, you would provide a prompt like:

1. Lion:

Description: The lion is a large carnivorous mammal known for its majestic mane and strong physique. It is often referred to as the king of the jungle.

2. Dolphin:

Description: The dolphin is a highly intelligent marine mammal known for its playful nature and acrobatic skills. It is often found in oceans and seas.

3. Elephant:

Description: The elephant is a massive herbivorous mammal with a long trunk and large, curved tusks. It is known for its incredible strength and gentle nature.

Now, generate descriptions for the following animals:

4. Penguin:

5. Giraffe:

6. Cheetah:

In this example, we provide descriptions of the lion, dolphin, and elephant as examples. The model can infer the expected format and content of the descriptions from them. We then ask the model to generate descriptions for the penguin, giraffe, and cheetah. The model combines the pattern shown in the examples with the knowledge it gained during pre-training to generate descriptions for these animals, even though it has not been fine-tuned specifically for this task.

By using a few-shot learning approach, we can achieve meaningful results with relatively few examples, reducing the amount of task-specific data required for accurate predictions. However, it’s important to note that few-shot prompting may not be as accurate as a model that has been fine-tuned specifically for the task at hand.
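The animal prompt above can also be assembled programmatically. The following is a minimal sketch; the function name `build_few_shot_prompt` and its parameters are hypothetical, and the code only builds the prompt string (sending it to a model is left out).

```python
def build_few_shot_prompt(examples: dict[str, str], new_animals: list[str]) -> str:
    """Format solved examples followed by the unsolved items, numbered consecutively."""
    lines = []
    number = 1
    for animal, description in examples.items():
        lines.append(f"{number}. {animal}:")
        lines.append(f"Description: {description}")
        number += 1
    lines.append("Now, generate descriptions for the following animals:")
    for animal in new_animals:
        lines.append(f"{number}. {animal}:")
        number += 1
    return "\n".join(lines)


# The three solved examples come from the prompt shown in this post (shortened).
examples = {
    "Lion": "The lion is a large carnivorous mammal known for its majestic mane and strong physique.",
    "Dolphin": "The dolphin is a highly intelligent marine mammal known for its playful nature and acrobatic skills.",
    "Elephant": "The elephant is a massive herbivorous mammal with a long trunk and large, curved tusks.",
}
prompt = build_few_shot_prompt(examples, ["Penguin", "Giraffe", "Cheetah"])
print(prompt)
```

Keeping the examples in a data structure makes it easy to add, remove, or swap demonstrations without rewriting the prompt by hand.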

Here are a few real-world examples where prompts based on few-shot learning can be helpful.

- Customer Support Chatbots: Chatbots are commonly used in customer support to provide instant assistance. With few-shot learning, a chatbot can quickly adapt to new customer queries or specific issues when its prompt includes a few examples of the expected user inputs and the corresponding desired responses. This enables the chatbot to understand the context and generate appropriate replies without requiring extensive training data.

- Content Generation: In creative fields such as writing, design, or music, few-shot learning can aid in generating new content. For instance, an AI-based writing assistant can be prompted with a few example paragraphs or styles, enabling it to generate text in a similar tone or genre. This allows writers, artists, or musicians to quickly explore different styles or themes without investing significant time and resources in training models from scratch.

One-shot Learning: Concept & Example

One-shot learning is an approach in which only a single example is provided in the prompt to demonstrate the desired task. Unlike few-shot learning, which involves multiple examples, one-shot learning relies on that sole example to guide the model’s predictions. The model is not trained on it.

To understand one-shot learning better, let’s consider an example of the following prompt.

Answer the following question based on the provided information:

The city of Paris is known for its iconic landmark, the Eiffel Tower. It is a wrought-iron lattice tower located in the Champ de Mars park.

What is the famous landmark in Paris?

The output will be the following:

The famous landmark in Paris is the Eiffel Tower.

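It’s important to note that in one-shot learning, the model relies only on the single example and the context given in the prompt. No training takes place: its ability to answer correctly depends on how well it generalizes from that one example using what it learned during pre-training. Strictly speaking, a one-shot prompt contains one worked demonstration (an input together with its expected output) followed by the new input. In the prompt above, the passage about Paris plays the role of that single piece of context.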
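A prompt with exactly one solved demonstration can be sketched as follows. The function name `build_one_shot_prompt`, the `Q:`/`A:` layout, and the follow-up question about London are hypothetical choices made for illustration; only the Paris question and answer come from the example in this post.

```python
def build_one_shot_prompt(demo_question: str, demo_answer: str, new_question: str) -> str:
    """Return a prompt with exactly one solved demonstration before the new question."""
    return (
        f"Q: {demo_question}\n"
        f"A: {demo_answer}\n"
        f"Q: {new_question}\n"
        "A:"
    )


prompt = build_one_shot_prompt(
    demo_question="What is the famous landmark in Paris?",
    demo_answer="The famous landmark in Paris is the Eiffel Tower.",
    new_question="What is the famous landmark in London?",  # hypothetical new query
)
print(prompt)
```

The trailing `A:` leaves the answer slot open, so the model is nudged to reply in the same form as the single demonstration.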

Zero-shot Learning: Concept & Example

Zero-shot learning is an approach in which no examples are provided to the model during the generation call. Instead, only the task or request is given as input. The model is expected to generate a response based solely on its understanding of the task, without any demonstrations in the prompt.

Here is an example of a zero-shot prompt for a GPT model. Note that no context or example is provided. The prompt is a direct question, and the model is expected to answer based on its knowledge:

Who is known as the "Father of the Theory of Relativity"?

The following will be the response from ChatGPT:

Albert Einstein is known as the “Father of the Theory of Relativity.”

Zero-shot learning allows the model to make predictions based on its pre-existing knowledge and understanding of the given task. It enables the model to provide responses without being fine-tuned on specific examples. However, it’s important to note that the accuracy of zero-shot learning can vary depending on the model’s pre-training and the complexity of the task.
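One way to see how the three approaches differ is that they can share a single prompt template and vary only in the number of demonstrations it contains. The sketch below illustrates this; `build_prompt`, `describe_setting`, and the `Input:`/`Output:` layout are assumptions made for illustration, not a format required by any model.

```python
def build_prompt(task: str, demonstrations: list[tuple[str, str]], query: str) -> str:
    """Combine a task description, zero or more solved demonstrations, and the query."""
    parts = [task]
    for example_input, example_output in demonstrations:
        parts.append(f"Input: {example_input}\nOutput: {example_output}")
    parts.append(f"Input: {query}\nOutput:")
    return "\n\n".join(parts)


def describe_setting(demonstrations: list[tuple[str, str]]) -> str:
    """Name the in-context learning setting implied by the number of demonstrations."""
    if len(demonstrations) == 0:
        return "zero-shot"
    if len(demonstrations) == 1:
        return "one-shot"
    return "few-shot"


task = "Answer the question."
query = 'Who is known as the "Father of the Theory of Relativity"?'

# Zero-shot: the demonstrations list is empty, so the prompt holds only task and query.
zero_shot_prompt = build_prompt(task, [], query)
print(describe_setting([]))
print(zero_shot_prompt)
```

Passing one `(input, output)` pair would turn the same call into a one-shot prompt, and passing several would make it few-shot.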

Conclusion

In-context learning is a powerful technique employed by GPT models to perform a variety of tasks. It allows models to understand and generate responses based on the natural language instructions and examples provided in the prompt. Whether it is few-shot learning, one-shot learning, or zero-shot learning, each approach offers unique advantages and applications.

Prompts based on few-shot learning enable the model to infer the task from a small number of examples, reducing the need for extensive task-specific training data. One-shot learning prompts rely on just a single example to guide the model. Although more challenging, this proves useful in situations where obtaining multiple examples is difficult or time-consuming. Zero-shot learning prompts give the model only the task or request, without any examples. This showcases the model’s ability to use its pre-existing knowledge and understanding to generate responses. While convenient, the accuracy of zero-shot learning depends on the model’s pre-training and the complexity of the task.
