This post aims to help you learn about the different types of machine learning algorithms that are central to artificial intelligence (AI):

- Machine learning algorithms

- Representation or Feature learning algorithms

- Deep learning algorithms

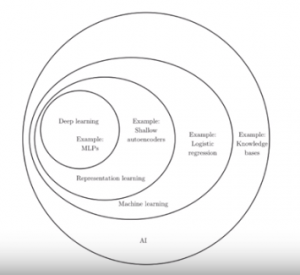

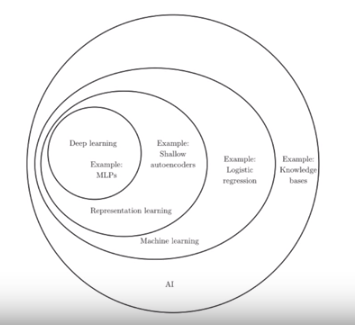

These types of learning algorithms can be represented as a Venn diagram.

Figure 1. Types of machine learning (AI) (Courtesy: Deep Learning book by Ian Goodfellow, Yoshua Bengio, and Aaron Courville)

What are Machine Learning (ML) Algorithms?

Machine learning algorithms are the simplest class of algorithms in AI. They rely on people such as business analysts and data scientists working together to identify the feature set for building the model. The algorithm is then trained to find a coefficient for each feature, and these coefficients determine how the features map to the actual outcome. Mathematically, an ML model can be represented as follows:

y = f(x1, x2, ..., xn, c)

In the above equation, y is the output that the algorithm computes, x1, x2, ..., xn are the features, and c is a constant. In a simple form, the model can also be written as follows:

y = a1.x1 + a2.x2 + a12.x1.x2 + a3.x3 + c

In the above example, x1, x2, (x1.x2) and x3 are the features, and a1, a2, a12 and a3 are their respective coefficients.
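As a minimal sketch, the equation above can be written as a plain Python function. The coefficient and feature values used here are made up purely for illustration; in practice the coefficients come out of training.

```python
def linear_model(x1, x2, x3, a1, a2, a12, a3, c):
    """Evaluate y = a1.x1 + a2.x2 + a12.x1.x2 + a3.x3 + c."""
    # x1 * x2 is an interaction feature built from two existing features.
    return a1 * x1 + a2 * x2 + a12 * x1 * x2 + a3 * x3 + c


# Hypothetical coefficients and feature values, chosen only for illustration.
y = linear_model(x1=1.0, x2=2.0, x3=3.0, a1=2.0, a2=3.0, a12=0.5, a3=-1.0, c=4.0)
print(y)  # 10.0
```

Note that the features, including the interaction term, are chosen by a person; the algorithm only has to find the coefficients.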

Let’s take an example to understand the above.

Say the problem at hand is to predict whether a person has disease A based on blood tests. Doctors would advise on the parameters or criteria (features) that can be used to estimate the likelihood that a person has the disease. We could then use logistic regression to predict the class from a likelihood estimate ranging from 0 to 1.

The feature data is extracted from the database and used to train the logistic regression model. Training calculates a coefficient for each feature.
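The training step described above can be sketched in plain Python. This is a toy illustration under stated assumptions, not a production implementation: the two features (`marker_a`, `marker_b`), their values, and the labels are all hypothetical, and the model is fitted with simple batch gradient descent rather than the solvers a real library would use.

```python
import math

# Hypothetical, normalized blood-test readings: (marker_a, marker_b).
features = [
    (0.1, 0.2), (0.2, 0.1), (0.3, 0.3), (0.2, 0.4),  # people without disease A
    (0.8, 0.7), (0.9, 0.9), (0.7, 0.8), (0.6, 0.9),  # people with disease A
]
labels = [0, 0, 0, 0, 1, 1, 1, 1]


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def predict_proba(weights, bias, x):
    """Likelihood estimate between 0 and 1 for one set of feature values."""
    return sigmoid(sum(w * xi for w, xi in zip(weights, x)) + bias)


def train(features, labels, lr=0.5, epochs=2000):
    """Fit one coefficient per feature, plus a constant, by gradient descent."""
    weights = [0.0] * len(features[0])
    bias = 0.0
    n = len(features)
    for _ in range(epochs):
        grad_w = [0.0] * len(weights)
        grad_b = 0.0
        for x, y in zip(features, labels):
            error = predict_proba(weights, bias, x) - y
            for j, xj in enumerate(x):
                grad_w[j] += error * xj
            grad_b += error
        weights = [w - lr * g / n for w, g in zip(weights, grad_w)]
        bias -= lr * grad_b / n
    return weights, bias


weights, bias = train(features, labels)
print("coefficients:", weights, "constant:", bias)
print("likelihood for a new patient:", predict_proba(weights, bias, (0.85, 0.8)))
```

The learned `weights` play the role of the coefficients a1, a2, ... and `bias` plays the role of the constant c.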

ML algorithms can be further classified into the following types:

- Supervised learning (regression, classification)

- Unsupervised learning

The following are some examples of machine learning algorithms that require the features to be predetermined:

- Linear regression (simple, multiple)

- Logistic regression

- Decision trees

- Random forest

- Support vector machines (SVM)

What are Representation/Feature Learning Algorithms?

Representation learning, also termed feature learning, is a class of machine learning algorithms that extract features from the data fed into the system. This is unlike the simpler ML algorithms above, which require the features to be provided upfront and only calculate the coefficients that map those features to the outcome.

Once the features are extracted, they are mapped to the outcome using the coefficients.

For example, representation learning can be used to extract features such as the edges of an object from input data such as pixel brightness.

The autoencoder is a classic example of a representation learning algorithm: it tries to learn aspects of an image (such as edges) from pixel data and then redraw the image from them.
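To make the "learn a feature, then redraw the input" idea concrete, here is a toy sketch of a linear autoencoder with a single hidden unit and tied weights, written in plain Python. It is far simpler than an image autoencoder, and everything in it is an assumption made for illustration: the 2-D data points (which all lie along one direction), the starting weights, and the learning rate.

```python
# Toy data: 2-D points that all lie along one direction (hypothetical values).
direction = (0.6, 0.8)
data = [(t * direction[0], t * direction[1]) for t in (-2.0, -1.0, 1.0, 2.0)]


def encode(w, x):
    """Compress a 2-D point into a single learned feature."""
    return w[0] * x[0] + w[1] * x[1]


def decode(w, z):
    """Redraw the 2-D point from the single feature (tied weights)."""
    return (z * w[0], z * w[1])


def reconstruction_error(w, points):
    total = 0.0
    for x in points:
        x_hat = decode(w, encode(w, x))
        total += (x[0] - x_hat[0]) ** 2 + (x[1] - x_hat[1]) ** 2
    return total / len(points)


def train(points, w=(0.5, 0.1), lr=0.05, epochs=200):
    """Minimize the mean squared reconstruction error by gradient descent."""
    w = list(w)
    for _ in range(epochs):
        grad = [0.0, 0.0]
        for x in points:
            z = encode(w, x)
            x_hat = decode(w, z)
            r = (x[0] - x_hat[0], x[1] - x_hat[1])  # reconstruction residual
            r_dot_w = r[0] * w[0] + r[1] * w[1]
            for k in range(2):
                grad[k] += -2.0 * (z * r[k] + r_dot_w * x[k])
        for k in range(2):
            w[k] -= lr * grad[k] / len(points)
    return w


w = train(data)
print("learned weights:", w)
print("reconstruction error:", reconstruction_error(w, data))
```

Nobody tells the model which direction matters. It discovers the direction on its own because that is the feature that lets it redraw the input with the least error.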

What are Deep Learning Algorithms?

Deep learning algorithms take representation or feature learning a step further. They use multiple layers of feature learning to extract a different set of features at each layer and then predict, recognize, or classify objects.

The following are some examples of deep learning algorithms:

- Deep feedforward networks

- Convolutional neural network (CNN)

- Recurrent neural network
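As a minimal sketch of what "multiple layers" means, the snippet below runs a forward pass through a tiny two-layer feedforward network in plain Python. The weights are set by hand for illustration rather than learned, and the task (XOR of two binary inputs) is chosen only because a single layer of coefficients cannot solve it while two stacked layers can.

```python
def relu(v):
    return max(0.0, v)


def identity(v):
    return v


def dense(inputs, weights, biases, activation):
    """One layer: a weighted sum per unit, followed by an activation."""
    return [
        activation(sum(w * x for w, x in zip(row, inputs)) + b)
        for row, b in zip(weights, biases)
    ]


# Hand-set weights (an assumption for illustration; normally these are learned).
HIDDEN_W = [[1.0, 1.0], [1.0, 1.0]]
HIDDEN_B = [0.0, -1.0]
OUTPUT_W = [[1.0, -2.0]]
OUTPUT_B = [0.0]


def forward(x):
    hidden = dense(x, HIDDEN_W, HIDDEN_B, relu)  # layer 1: intermediate features
    return dense(hidden, OUTPUT_W, OUTPUT_B, identity)[0]  # layer 2: outcome


for x in ([0.0, 0.0], [0.0, 1.0], [1.0, 0.0], [1.0, 1.0]):
    print(x, "->", forward(x))
```

The second layer never sees the raw inputs. It works only with the features produced by the first layer, which is the same layering idea that deeper networks repeat many times.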

Let’s understand this using the example of predicting whether an image shows a cat.

Millions of images can be fed into a deep learning network. Each layer of the network derives features, from simple ones to increasingly abstract ones. For example, pixels are used to extract edges, edges are used to arrive at features such as corners, and edges and corners are used to arrive at an “object”. Finally, the features are mapped to the outcome.
