In this post, you will learn how to train an SVM classifier using the Scikit-learn (sklearn) implementation, with the help of code examples.

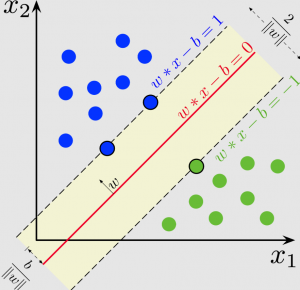

An SVM (support vector machine) classifier is a supervised machine learning algorithm; support vector machines can be used for both classification and regression tasks. For classification, the algorithm finds the hyperplane that maximizes the margin between two classes, which is why it is also known as a max-margin classifier. SVMs have been widely used in tasks such as handwritten digit recognition, facial expression recognition, and text classification. Their advantages include effectiveness in high-dimensional feature spaces and, through the soft-margin formulation, some tolerance of noisy or overlapping data. Note that training a kernel SVM scales poorly with the number of samples, so very large datasets usually call for a linear or SGD-based variant.
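To make the max-margin idea concrete, here is a minimal sketch on a made-up, linearly separable toy dataset (the four points and the very large C value are assumptions chosen for illustration, not part of the original example). For a linear SVM with weight vector w, the margin width is 2 / ||w||.

```python
import numpy as np
from sklearn.svm import SVC

# Toy 2-D data (hypothetical): class 0 sits at x = 0, class 1 at x = 2
X_toy = np.array([[0.0, 0.0], [0.0, 1.0], [2.0, 0.0], [2.0, 1.0]])
y_toy = np.array([0, 0, 1, 1])

# A very large C leaves almost no room for margin violations,
# which approximates a hard-margin classifier
toy_svm = SVC(kernel="linear", C=1e6)
toy_svm.fit(X_toy, y_toy)

# For a linear kernel, the hyperplane is w . x + b = 0
w = toy_svm.coef_[0]
b = toy_svm.intercept_[0]

# Distance between the two margin boundaries
margin_width = 2.0 / np.linalg.norm(w)
print("w =", w, "b =", b)
print("Margin width: %.3f" % margin_width)
```

Since the two classes are two units apart along the first feature, the widest possible margin here is 2, and the printed width should be close to that value.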

SVM can also solve non-linear problems by using kernel functions. For example, the popular RBF (radial basis function) kernel implicitly maps data points into a higher-dimensional space in which they can become linearly separable. The mapping is never computed explicitly; the kernel only supplies the similarity between pairs of points (the "kernel trick"). SVM then finds the optimal hyperplane in this new space that separates the data points into two classes.
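The effect of the kernel can be sketched with a small comparison. The example below assumes a synthetic dataset from make_circles (two concentric rings, which no straight line can separate); the dataset and its parameters are illustrative choices, not taken from this post.

```python
from sklearn.datasets import make_circles
from sklearn.model_selection import train_test_split
from sklearn.svm import SVC

# Synthetic data (assumption): an inner ring of one class inside an outer ring of the other
X_c, y_c = make_circles(n_samples=400, factor=0.3, noise=0.05, random_state=0)
Xc_train, Xc_test, yc_train, yc_test = train_test_split(
    X_c, y_c, test_size=0.3, random_state=0, stratify=y_c
)

# Linear kernel: searches for a straight-line boundary in the original space
linear_acc = SVC(kernel="linear").fit(Xc_train, yc_train).score(Xc_test, yc_test)

# RBF kernel: the boundary can curve around the inner ring
rbf_acc = SVC(kernel="rbf", gamma="scale").fit(Xc_train, yc_train).score(Xc_test, yc_test)

print("Linear kernel accuracy: %.3f" % linear_acc)
print("RBF kernel accuracy: %.3f" % rbf_acc)
```

On data like this, the linear kernel typically performs near chance level, while the RBF kernel separates the rings almost perfectly.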

Scikit-learn offers more than one way to train an SVM classifier, including the following:

- LIBSVM: LIBSVM is a C/C++ library specialised for SVM. The SVC class is built on LIBSVM and can be used to train an SVM classifier; the C parameter controls how soft the margin is, with very large values approaching a hard-margin classifier. LIBSVM was developed by Chang and Lin and is released under the BSD license. Interfaces are also available for R, Perl, Pascal, Java, and other programming languages.

- SGDClassifier: Scikit-learn also provides SGDClassifier, its own implementation that does not rely on LIBSVM. With the hinge loss, it trains a linear SVM using stochastic gradient descent, which means that it updates the weights of the model after each training instance. On large datasets this can make training considerably faster than with kernel-based SVM implementations, although it only produces a linear decision boundary.

LIBSVM SVC Code Example

In this section, the code below uses the SVC class (from sklearn.svm import SVC) to fit a model. SVC, or Support Vector Classifier, is scikit-learn's LIBSVM-based class for classification. With a non-linear kernel, SVC implicitly maps data points to a higher-dimensional space and finds the optimal separating hyperplane there; with kernel='linear', as used below, it finds the hyperplane in the original feature space. The Iris dataset has three classes, which SVC handles by training one binary classifier per pair of classes (one-vs-one).

import pandas as pd

import numpy as np

from sklearn.svm import SVC

from sklearn.preprocessing import StandardScaler

from sklearn.model_selection import train_test_split

from sklearn.metrics import accuracy_score

from sklearn import datasets

# IRIS Data Set

iris = datasets.load_iris()

X = iris.data

y = iris.target

# Creating training and test split

X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.3, random_state=1, stratify=y)

# Feature Scaling

sc = StandardScaler()

sc.fit(X_train)

X_train_std = sc.transform(X_train)

X_test_std = sc.transform(X_test)

# Training a SVM classifier using SVC class

svm = SVC(kernel='linear', random_state=1, C=0.1)

svm.fit(X_train_std, y_train)

# Model performance

y_pred = svm.predict(X_test_std)

print('Accuracy: %.3f' % accuracy_score(y_test, y_pred))
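To see what the fitted model actually stores, the following self-contained sketch repeats the same split and scaling and then inspects the support vectors. The variable names (X_tr, scaler, model, and so on) are chosen here for illustration so they do not overwrite the ones above.

```python
from sklearn import datasets
from sklearn.model_selection import train_test_split
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

# Same data, split, and scaling as in the example above
iris_data = datasets.load_iris()
X_tr, X_te, y_tr, y_te = train_test_split(
    iris_data.data, iris_data.target,
    test_size=0.3, random_state=1, stratify=iris_data.target
)
scaler = StandardScaler().fit(X_tr)
model = SVC(kernel="linear", C=0.1).fit(scaler.transform(X_tr), y_tr)

# Only the support vectors define the decision boundary
print("Classes:", model.classes_)
print("Support vectors per class:", model.n_support_)
print("Support vector matrix shape:", model.support_vectors_.shape)

# One score per class for every test sample (default decision_function_shape='ovr')
scores = model.decision_function(scaler.transform(X_te))
print("Decision scores shape:", scores.shape)
```

A smaller C generally widens the margin and tends to increase the number of support vectors, so these counts are a quick way to see how the regularization setting affects the model.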

SGDClassifier Code Example

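In this section, you will see the usage of SGDClassifier (note: from sklearn.linear_model import SGDClassifier), which trains a linear SVM with stochastic gradient descent when the loss is set to 'hinge'. Apart from the loss, which is also the default, the code below uses default parameters. It reuses the scaled training and test data from the previous example. Because no random_state is set, the reported accuracy can vary slightly from run to run.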

from sklearn.linear_model import SGDClassifier

# Instantiate SVM classifier using SGDClassifier

svm = SGDClassifier(loss='hinge')

# Fit the model

svm.fit(X_train_std, y_train)

# Model Performance

y_pred = svm.predict(X_test_std)

print('Accuracy: %.3f' % accuracy_score(y_test, y_pred))
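Because stochastic gradient descent shuffles the training data, results can differ between runs. A minimal, self-contained sketch of one way to make them reproducible is to fix random_state; the helper fit_and_predict and the seed value below are hypothetical names and choices made for this illustration.

```python
from sklearn import datasets
from sklearn.linear_model import SGDClassifier
from sklearn.metrics import accuracy_score
from sklearn.model_selection import train_test_split
from sklearn.preprocessing import StandardScaler

# Same data preparation as in the SVC example
iris_data = datasets.load_iris()
X_tr, X_te, y_tr, y_te = train_test_split(
    iris_data.data, iris_data.target,
    test_size=0.3, random_state=1, stratify=iris_data.target
)
scaler = StandardScaler().fit(X_tr)
X_tr_std = scaler.transform(X_tr)
X_te_std = scaler.transform(X_te)


def fit_and_predict(seed):
    """Train a hinge-loss SGDClassifier with a fixed seed and predict the test set."""
    clf = SGDClassifier(loss="hinge", random_state=seed)
    clf.fit(X_tr_std, y_tr)
    return clf.predict(X_te_std)


# Two runs with the same seed should give identical predictions
pred_a = fit_and_predict(seed=1)
pred_b = fit_and_predict(seed=1)
print("Identical predictions:", (pred_a == pred_b).all())
print("Accuracy: %.3f" % accuracy_score(y_te, pred_a))
```

Fixing the seed does not make the model better, only repeatable, which is useful when comparing it against the SVC result.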
