Sequence-to-sequence (Seq2Seq) modeling is a machine learning technique that has reshaped natural language processing (NLP). It takes input sequences of varying lengths and produces output sequences of varying lengths, which makes it particularly useful for tasks such as language translation, speech recognition, and chatbot development. Sequence modeling more broadly also underpins text summarizers, question answering systems, sentiment analysis systems, and more. Given this range of applications, understanding sequence modeling concepts is essential for anyone who wants to work in natural language processing. This blog post discusses what sequence data is, the types of sequence models, and examples of how they are used to understand and analyze sequences.

What is sequence data?

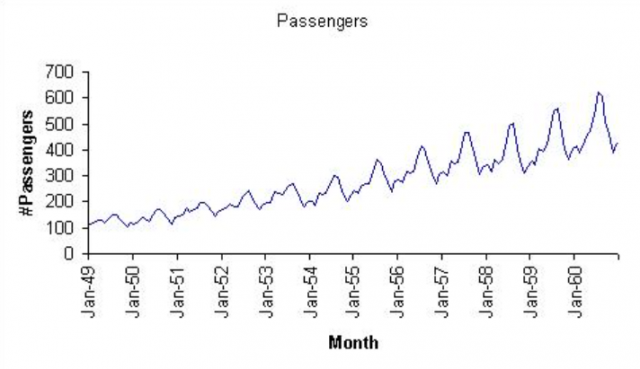

Sequence data are data points ordered in a meaningful way, such that earlier observations provide information about later observations and vice versa. Examples of sequence data include time-series data and natural language text. Time-series data is a sequence of observations in which each observation typically depends on the previous ones. Sequence data can be represented as observations of one or more characteristics of events over time. The accompanying picture shows what sequence data looks like.

Let's take a look at some examples of sequence data.

- Is the output of flipping a coin sequence data? If the coin is fair, each flip is independent of the others, so the output is not sequence data. However, if the coin or the flipping process is defective in a way that makes a flip depend on earlier flips, the output can become sequence data.

- Is the text in a sentence sequence data? Yes, the words in a sentence appear in a meaningful order, so the text is sequence data.

- Is a movie sequence data? Yes, the sequence of frames in a movie is an example of sequence data. A CNN (convolutional neural network) can be used to extract features from each frame (image), and those features can then be passed to a sequence model.
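The coin example can be checked with a small simulation. This is a minimal sketch, not a formal test of independence: `sticky_flips` is a hypothetical "defective" process invented here for illustration, in which each flip repeats the previous outcome with probability `stay`. The function `lag1_agreement` measures how often a flip equals the flip before it.

```python
import random

def lag1_agreement(flips):
    """Fraction of positions where a flip equals the previous flip."""
    same = sum(1 for prev, cur in zip(flips, flips[1:]) if prev == cur)
    return same / (len(flips) - 1)

def fair_flips(n, rng):
    """Independent flips: each outcome ignores everything before it."""
    return [rng.choice("HT") for _ in range(n)]

def sticky_flips(n, rng, stay=0.9):
    """Hypothetical dependent process: repeat the previous outcome
    with probability `stay`, otherwise switch sides."""
    flips = [rng.choice("HT")]
    for _ in range(n - 1):
        if rng.random() < stay:
            flips.append(flips[-1])
        else:
            flips.append("T" if flips[-1] == "H" else "H")
    return flips

rng = random.Random(0)
fair = fair_flips(10_000, rng)
sticky = sticky_flips(10_000, rng)

print(round(lag1_agreement(fair), 2))    # close to 0.5: the past tells us nothing
print(round(lag1_agreement(sticky), 2))  # close to 0.9: the past is informative
```

When the agreement rate sits near 0.5, knowing the previous flip does not help predict the next one, so order carries no information. When it is far from 0.5, earlier observations inform later ones, which is what makes the data a sequence in the sense used above.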

How is Sequence Data processed in the Neural Network?

Let’s look at an example of sequence data from natural language processing and see how neural networks such as an RNN (recurrent neural network) are trained on it.

Let’s take a sentence: "Climate change refers to long-term shifts in temperatures and weather patterns." This is a sequence of words that conveys meaning in a particular order. In NLP, such sequences of words are often referred to as “sequences” or “sequences of tokens”.

To train a neural network such as a recurrent neural network (RNN), a long short-term memory (LSTM) network, or a transformer, we need to convert the text data into a numerical representation and feed that representation into the network in order. One way to do this is to use word embeddings, which are numerical representations of words that capture their meaning and context. Each word in the text is converted into a dense vector of fixed size, where the dimensions together encode aspects of the word’s meaning. Thus, in the above sentence (Climate change…), each word is converted into an N-dimensional vector.
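The conversion from words to vectors can be sketched as a table lookup. This is a minimal illustration under stated assumptions: the whitespace tokenizer, the dimension `EMBED_DIM = 4`, and the randomly initialized `embedding_table` are all placeholders chosen here. In a real model the embedding values are learned during training or loaded from pretrained vectors.

```python
import random

sentence = "Climate change refers to long-term shifts in temperatures and weather patterns"

# Naive tokenization (an assumption for this sketch): lowercase, split on spaces.
tokens = sentence.lower().split()

# Vocabulary: map each distinct token to an integer id.
vocab = {word: idx for idx, word in enumerate(sorted(set(tokens)))}

EMBED_DIM = 4  # hypothetical N; real models often use hundreds of dimensions
rng = random.Random(42)

# Embedding table with one row per vocabulary entry.
# Random values stand in for learned embeddings.
embedding_table = [
    [rng.uniform(-1.0, 1.0) for _ in range(EMBED_DIM)]
    for _ in range(len(vocab))
]

def embed(token_list):
    """Look up the embedding vector for each token, preserving order."""
    return [embedding_table[vocab[token]] for token in token_list]

vectors = embed(tokens)
print(len(tokens), len(vectors), len(vectors[0]))  # 11 11 4
```

The result is an ordered list of 11 vectors, one per word, each of length `EMBED_DIM`. The order of the list is the order of the sentence, which is what the network consumes.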

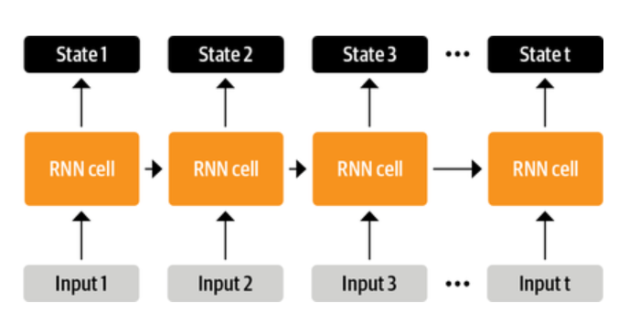

Each of these word embeddings is fed as input to the network, one word (an N-dimensional vector) at a time, in sequence. At each time step, the network processes the current input word and the previous hidden state to generate a new hidden state and an output. The hidden state at each time step captures the context of the current word in the sentence, based on the previous words in the sequence. The accompanying picture illustrates this, with the RNN cell representing the network. The inputs are fed in one by one. From the second input onwards, the cell receives both the previous hidden state and the next input. At each step the cell produces a hidden state, which is fed back into the network, and an output.
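The recurrence described above can be sketched in plain Python. This is a simplified, untrained vanilla RNN under stated assumptions: the weight names `W_xh`, `W_hh`, and `b_h`, the random initialization, and the `tanh` activation are choices made for this illustration, and the output at each step is taken to be the hidden state itself (real networks usually add a separate output layer).

```python
import math
import random

def rnn_step(x, h_prev, W_xh, W_hh, b_h):
    """One step: h_t = tanh(W_xh @ x_t + W_hh @ h_prev + b_h)."""
    h_new = []
    for i in range(len(h_prev)):
        total = b_h[i]
        total += sum(W_xh[i][j] * x[j] for j in range(len(x)))
        total += sum(W_hh[i][k] * h_prev[k] for k in range(len(h_prev)))
        h_new.append(math.tanh(total))
    return h_new

def run_rnn(inputs, hidden_size, seed=0):
    """Feed the input vectors one at a time and collect every hidden state."""
    rng = random.Random(seed)
    input_size = len(inputs[0])
    # Untrained random weights, for illustration only.
    W_xh = [[rng.uniform(-0.5, 0.5) for _ in range(input_size)]
            for _ in range(hidden_size)]
    W_hh = [[rng.uniform(-0.5, 0.5) for _ in range(hidden_size)]
            for _ in range(hidden_size)]
    b_h = [0.0] * hidden_size

    h = [0.0] * hidden_size  # initial hidden state before any input
    states = []
    for x in inputs:
        h = rnn_step(x, h, W_xh, W_hh, b_h)  # new state depends on x and old state
        states.append(h)
    return states

# Three toy 3-dimensional "word vectors" fed in order.
inputs = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
states = run_rnn(inputs, hidden_size=2)
print(len(states), len(states[0]))  # 3 2
```

Because each call to `rnn_step` receives the previous hidden state, the state after the last word depends on every earlier word, which is how the network carries context through the sentence.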

After a neural network such as an RNN, LSTM, or transformer has been trained on a large corpus of text data, it can be used to perform various natural language processing tasks, such as text generation, sentiment analysis, and language translation. For example, given a sequence of words as input, an appropriate Seq2Seq network can generate a new sequence of words that follows a similar pattern or conveys a similar meaning.

Types of sequence models

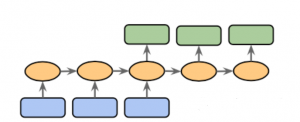

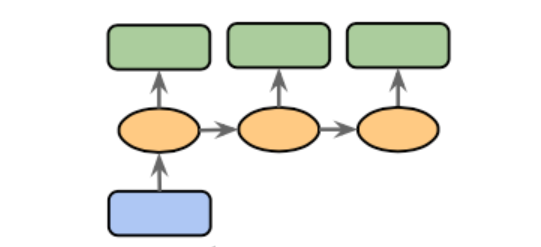

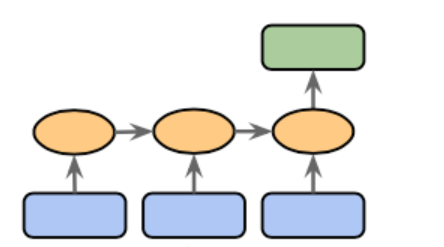

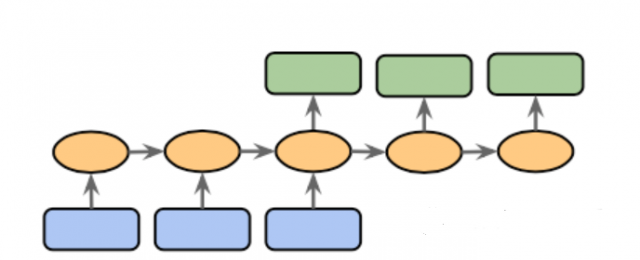

Sequence models can be grouped by whether the input and the output of the model are sequence data or non-sequence data. The types are as follows:

- One-to-sequence (One-to-many): In a one-to-sequence model, the input data is non-sequence data and the output data is sequence data. One classic example is image captioning, where the input is a single image and the output is a sequence of words.

- Sequence-to-one (Many-to-one): In a sequence-to-one model, the input data is sequence data and the output data is non-sequence data. For example, consider a sequence of words (a sentence) fed into the network, where the output is a positive or negative sentiment. This task is called sentiment analysis.

- Sequence-to-sequence (Many-to-many): In a sequence-to-sequence model, both the input data and the output data are sequence data. Take the example of a machine translation system: the input is a sequence of words (a sentence) in one language and the output is another sequence of words in another language.
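The three types differ only in what goes in and what comes out, which a toy recurrent cell can make concrete. This is a deliberately simplified sketch: scalars stand in for vectors, the fixed weights `0.5` and `0.8` in `cell` are arbitrary values chosen here, and `decode` is a hypothetical helper that unrolls the state without new input. It shows the input/output pattern of each type, not a trained model.

```python
import math

def cell(x, h):
    """Toy recurrent cell with arbitrary fixed weights."""
    return math.tanh(0.5 * x + 0.8 * h)

def decode(h, steps):
    """Emit `steps` outputs starting from state h, with no further input."""
    outputs = []
    for _ in range(steps):
        outputs.append(h)
        h = cell(0.0, h)  # the state alone drives the next output
    return outputs

def one_to_many(x, steps):
    """Single input, sequence output (e.g. image features -> caption)."""
    return decode(cell(x, 0.0), steps)

def many_to_one(xs):
    """Sequence input, single output (e.g. sentence -> sentiment score)."""
    h = 0.0
    for x in xs:
        h = cell(x, h)
    return h

def many_to_many(xs, steps):
    """Sequence input, sequence output (e.g. translation): encode, then decode."""
    return decode(many_to_one(xs), steps)

print(len(one_to_many(1.0, steps=4)))                    # 4
print(type(many_to_one([0.2, -0.1, 0.7])).__name__)      # float
print(len(many_to_many([0.2, -0.1, 0.7], steps=5)))      # 5
```

Note that in `many_to_many` the output length (5) does not have to match the input length (3), which mirrors translation, where a sentence rarely has the same number of words in both languages.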

Examples of sequence models

Here are some examples where different types of sequence models are used.

- One-to-sequence model: Image captioning can be modeled using a one-to-sequence model.

- Sequence-to-one model: Smart reply in chat tools can be modeled using a sequence-to-one model. The input data is a sequence of text and the output is one of several types of replies or responses. Note that the output may at times look like sequence data, yet the task can still be modeled as sequence-to-one, for example by selecting one reply from a fixed set. Predicting a movie rating from a sequence of user feedback is another example of a sequence-to-one model: the input data is a sequence and the output data is non-sequence data (the rating).

- Sequence-to-sequence model: Language translation uses a sequence-to-sequence model. The input data is a sequence of text in one natural language and the output data is a sequence of text in another natural language. Image captioning can also be modeled using a sequence-to-sequence model, although a one-to-sequence model works as well.

Sequence models are machine learning models used for analyzing sequence data. There are three types of sequence models: one-to-sequence, sequence-to-one, and sequence-to-sequence. Sequence models are used in applications such as image captioning, smart replies in chat tools, and predicting movie ratings based on user feedback, to name a few. If you would like to learn more about sequence models, please drop a message and we will respond to your queries.
